The quantum resources needed to break modern encryption have dropped by an order of magnitude since May 2025. Here is what happened, what it means, and what organizations should do about it.

In fewer than twelve months, three research papers have sharply reduced the estimated quantum resources required to break the cryptographic systems that protect the global digital economy. Together, they represent the most significant shift in quantum threat assessment since Peter Shor published his factoring algorithm in 1994.

The punchline: what once required 20 million qubits now requires fewer than one million for RSA, potentially fewer than 100,000 under newer architectures, and fewer than 500,000 for the elliptic curve cryptography that protects every major cryptocurrency and most digital signatures. One of the papers was so sensitive that its authors chose not to publish the actual attack circuits, instead releasing a cryptographic proof that the circuits work without revealing how they work. If you are a CISO, CTO, or policymaker still treating quantum risk as a future problem, that decision should give you pause.

The Three Papers

Gidney (May 2025): RSA-2048 in Under a Million Qubits

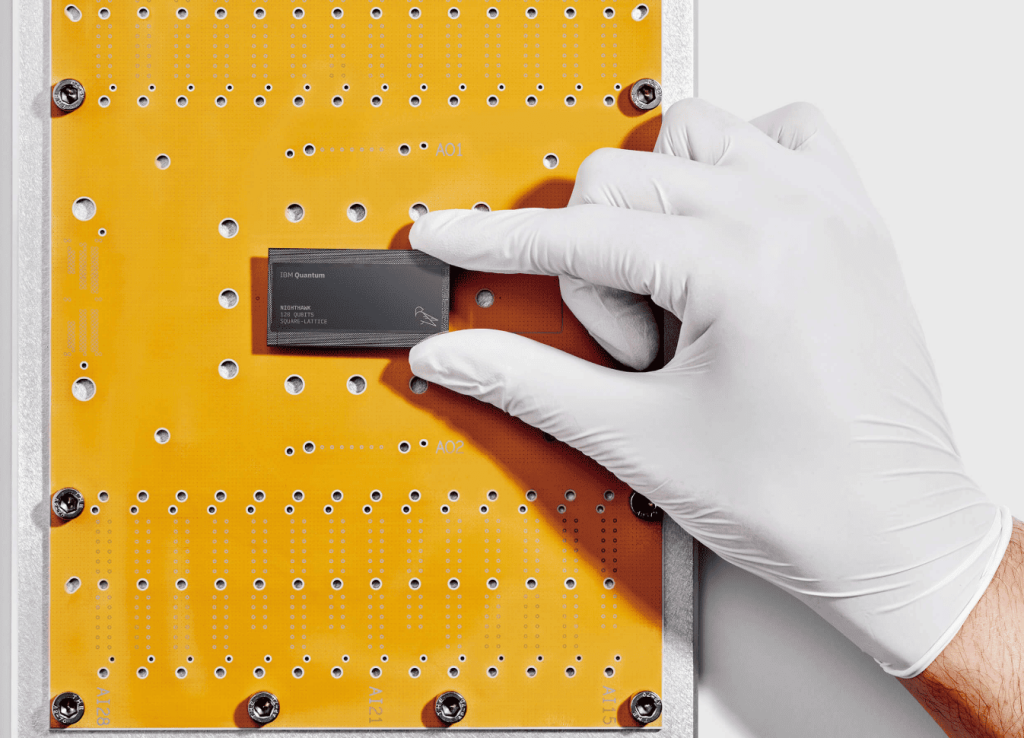

Craig Gidney of Google Quantum AI published a paper showing that a quantum computer with fewer than one million noisy physical qubits could factor a 2048-bit RSA integer, the encryption standard underpinning most internet banking, email, and digital certificates, in less than a week. His previous estimate from 2019 required 20 million qubits and about eight hours. The new number represents a 20x reduction in hardware requirements, achieved entirely through algorithmic and architectural improvements: approximate residue arithmetic, yoked surface codes for higher-density storage of idle qubits, and magic state cultivation for more efficient generation of the resource states needed for fault-tolerant gates.

The paper includes circuit layouts, detailed physical mockups, and an associated data repository on Zenodo. It assumes the same conservative hardware parameters used in 2019: a square grid of qubits with nearest-neighbor connectivity, a gate error rate of 0.1%, and a surface code cycle time of one microsecond. The improvement is purely in how efficiently the algorithm uses the machine, not in the machine itself.
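What does the quantum computer in these estimates actually compute? In Shor's original formulation, the quantum circuit only has to find the multiplicative order r of a random base a modulo N. Turning r into factors is cheap classical arithmetic. The sketch below is Shor's textbook reduction, not the optimized circuits in Gidney's paper. It replaces the quantum step with a brute-force order search, which only works for toy moduli such as 15 or 21.

```python
from math import gcd
import random


def find_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r == 1 (mod n).

    This brute-force loop stands in for the quantum order-finding
    subroutine; classically it takes time exponential in the bit length of n.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def shor_classical_reduction(n: int, seed: int = 0) -> tuple[int, int]:
    """Factor an odd composite n (not a prime power) via order finding."""
    rng = random.Random(seed)
    while True:
        a = rng.randrange(2, n)
        g = gcd(a, n)
        if g > 1:
            return g, n // g        # lucky guess: a already shares a factor
        r = find_order(a, n)        # the step a quantum computer would do
        if r % 2 == 1:
            continue                # odd order: try another base
        y = pow(a, r // 2, n)
        if y == n - 1:
            continue                # a^(r/2) == -1 (mod n): only trivial factors
        p = gcd(y - 1, n)           # y^2 == 1 but y != +-1, so this is nontrivial
        return p, n // p


print(shor_classical_reduction(15))
print(shor_classical_reduction(21))
```

For RSA-2048, `find_order` is the part that is classically infeasible. Every resource estimate in this article is, at bottom, a cost estimate for running that one step on fault-tolerant quantum hardware.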

Iceberg Quantum (February 2026): Under 100,000 Qubits with a New Architecture

Iceberg Quantum, a Sydney-based startup whose founders began the work as PhD students at the University of Sydney, unveiled its Pinnacle architecture alongside a $6 million seed round. Pinnacle uses quantum low-density parity-check (QLDPC) codes instead of the surface codes that have dominated most resource estimates. The result: RSA-2048 factoring could be achievable with fewer than 100,000 physical qubits, roughly another 10x reduction below Gidney’s estimate.

The Pinnacle architecture is modular and compatible with multiple hardware platforms. Iceberg is already working with PsiQuantum (photonics), Diraq (spin qubits), IonQ (trapped ions), and Oxford Ionics (trapped ions), several of which have publicly projected timelines to build systems at this scale within three to five years. Scott Aaronson hosted discussion of the paper on his blog, noting the importance of the result while being careful to distinguish between what the architecture demonstrates in simulation and what remains to be proven in hardware. Commenters on his blog highlighted the parallelization of the algorithm at the gate level as particularly promising.

Caveats matter here. QLDPC codes require qubit connectivity beyond the simple nearest-neighbor grids used in Gidney’s surface code estimates. The Pinnacle architecture requires interactions within processing blocks at a scale that, while not full all-to-all connectivity, goes well beyond what planar surface codes demand and has not been demonstrated at scale. The architecture is validated through numerical simulation, not experimental hardware. And as Gidney himself has noted, the decoder reaction time assumptions for QLDPC codes are harder to meet than for surface codes, an engineering challenge the paper acknowledges but does not fully resolve. The direction is clear, but the gap between simulation and fabrication remains substantial.
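The next sketch makes the surface-code versus QLDPC contrast concrete with a toy example. It uses the hypergraph product (Tillich-Zémor) construction, one well-known way to build quantum LDPC codes from classical codes. This is an illustrative assumption, not a description of the codes Pinnacle uses. Feeding in a classical repetition code produces a small planar surface code. Feeding in the [7,4] Hamming code produces a code that stores many more logical qubits per physical qubit. The construction guarantees that the X-type and Z-type checks commute, and the code verifies this.

```python
import numpy as np


def gf2_rank(matrix: np.ndarray) -> int:
    """Rank of a 0/1 matrix over GF(2), by Gaussian elimination."""
    m = matrix.copy().astype(np.uint8) % 2
    rows, cols = m.shape
    rank = 0
    for col in range(cols):
        pivot = next((r for r in range(rank, rows) if m[r, col]), None)
        if pivot is None:
            continue
        m[[rank, pivot]] = m[[pivot, rank]]      # move the pivot row up
        for r in range(rows):
            if r != rank and m[r, col]:
                m[r] ^= m[rank]                  # XOR is addition mod 2
        rank += 1
        if rank == rows:
            break
    return rank


def identity(k: int) -> np.ndarray:
    return np.eye(k, dtype=np.uint8)


def hypergraph_product(h1: np.ndarray, h2: np.ndarray):
    """Tillich-Zemor product of two classical parity-check matrices.

    Returns (hx, hz), the X-type and Z-type stabilizer check matrices.
    """
    r1, n1 = h1.shape
    r2, n2 = h2.shape
    hx = np.hstack([np.kron(h1, identity(n2)), np.kron(identity(r1), h2.T)]) % 2
    hz = np.hstack([np.kron(identity(n1), h2), np.kron(h1.T, identity(r2))]) % 2
    return hx, hz


def describe(h: np.ndarray):
    """Return (physical qubits, logical qubits, max check weight)."""
    hx, hz = hypergraph_product(h, h)
    # CSS condition: every X check overlaps every Z check an even number of times.
    assert not ((hx.astype(int) @ hz.T.astype(int)) % 2).any()
    n = hx.shape[1]
    k = n - gf2_rank(hx) - gf2_rank(hz)
    max_weight = int(max(hx.sum(axis=1).max(), hz.sum(axis=1).max()))
    return n, k, max_weight


repetition_3 = np.array([[1, 1, 0],
                         [0, 1, 1]], dtype=np.uint8)
hamming_7 = np.array([[1, 0, 1, 0, 1, 0, 1],
                      [0, 1, 1, 0, 0, 1, 1],
                      [0, 0, 0, 1, 1, 1, 1]], dtype=np.uint8)

print(describe(repetition_3))   # (13, 1, 4): a distance-3 surface code
print(describe(hamming_7))      # (58, 16, 7)
```

Both toy codes have distance 3. The surface code spends 13 physical qubits on one logical qubit, while the Hamming-derived code spends about 3.6 per logical qubit. The price is heavier checks (weight 7 versus 4) that tie together qubits a planar nearest-neighbor layout would not naturally place side by side. This is a small-scale echo of the connectivity caveat above.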

Google Quantum AI (March 2026): Breaking Cryptocurrency Encryption in Minutes

The third and most dramatic paper landed today. Google Quantum AI published a whitepaper with co-authors including Justin Drake of the Ethereum Foundation and Dan Boneh of Stanford, showing that the elliptic curve cryptography protecting Bitcoin, Ethereum, and virtually every major cryptocurrency could be broken with fewer than 500,000 physical qubits, in a runtime measured in minutes, not days. The previous best estimate, from Litinski in 2023, required roughly 9 million physical qubits on a photonic architecture, making this approximately a 20-fold reduction.
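The elliptic curve discrete logarithm problem is simple to state: given a generator point G and a public key Q = k·G, recover the private scalar k. The toy below uses the same curve equation as Bitcoin's secp256k1 (y² = x³ + 7), but over a 97-element field, so brute force succeeds instantly. At 256 bits the classical search is hopeless, and that is the gap Shor's algorithm closes. The field size, generator choice, and secret are illustrative assumptions.

```python
P = 97          # toy field prime (secp256k1 uses a 256-bit prime)
A, B = 0, 7     # same curve shape as secp256k1: y^2 = x^3 + 7
INF = None      # the point at infinity (group identity)


def add(p1, p2):
    """Elliptic curve point addition over GF(P)."""
    if p1 is INF:
        return p2
    if p2 is INF:
        return p1
    x1, y1 = p1
    x2, y2 = p2
    if x1 == x2 and (y1 + y2) % P == 0:
        return INF                      # p2 == -p1
    if p1 == p2:
        lam = (3 * x1 * x1 + A) * pow(2 * y1, -1, P) % P
    else:
        lam = (y2 - y1) * pow(x2 - x1, -1, P) % P
    x3 = (lam * lam - x1 - x2) % P
    return (x3, (lam * (x1 - x3) - y1) % P)


def scalar_mult(k, point):
    """Compute k*point by double-and-add (how public keys are derived)."""
    result, addend = INF, point
    while k:
        if k & 1:
            result = add(result, addend)
        addend = add(addend, addend)
        k >>= 1
    return result


def discrete_log(g, q):
    """Find k with k*g == q by exhaustive search: exponential in key size."""
    current, k = INF, 0
    while current != q:
        current = add(current, g)
        k += 1
        if current is INF:
            raise ValueError("q is not a multiple of g")
    return k


# Use the first curve point with y != 0 as a generator (illustrative choice).
G = next((x, y) for x in range(P) for y in range(1, P)
         if (y * y - (x**3 + A * x + B)) % P == 0)

secret = 42                     # hypothetical private key
Q = scalar_mult(secret, G)      # the corresponding public key
recovered = discrete_log(G, Q)
print(f"G={G}, Q={Q}, recovered k={recovered}")
```

The recovered value is either the secret itself or the secret reduced modulo the order of G. Either way it is a working private key for Q.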

The paper presents two optimized quantum circuits for solving the 256-bit Elliptic Curve Discrete Logarithm Problem (ECDLP-256). Breaking ECC takes far fewer Toffoli gates than breaking RSA-2048: roughly 70 to 90 million versus 6.5 billion, a 70x to 90x difference. That gap is why the runtime collapses from a week to minutes. Shor’s algorithm can also be “primed”: the first half of the computation depends only on fixed curve parameters, so it can be precomputed. Once a specific public key is revealed, the remaining computation takes approximately nine minutes. Bitcoin’s average block time is ten minutes. Under idealized conditions, Google estimates a roughly 41% probability that a primed quantum computer could derive a private key before a Bitcoin transaction is confirmed.
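A simple model shows why the 41% figure is plausible. The model is assumed here, not taken from the paper. Bitcoin block discovery is commonly modelled as a Poisson process. Because waiting times in such a process are memoryless, the time from a transaction's broadcast to the next block is exponentially distributed with a 10-minute mean. The attacker wins the race if no block arrives during the roughly nine-minute post-priming computation. Google's own estimate may rest on different or additional assumptions.

```python
import math


def p_attack_beats_block(attack_minutes: float,
                         mean_block_minutes: float = 10.0) -> float:
    """Probability that no block is found within `attack_minutes`.

    Assumes block arrivals form a Poisson process, so the wait for the next
    block is exponential with mean `mean_block_minutes`. Ignores mempool
    delays, fee dynamics, and multi-block confirmation depth.
    """
    return math.exp(-attack_minutes / mean_block_minutes)


for minutes in (5, 9, 15, 30):
    chance = p_attack_beats_block(minutes)
    print(f"{minutes:>2}-minute attack: {chance:.0%} chance of finishing first")
```

Under this model, the nine-minute case gives exp(-0.9), about 41%. The loop also shows how sensitive the race is to runtime: shaving a few minutes off the attack, or waiting for more confirmations, shifts the odds substantially.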

In an unprecedented move for quantum cryptanalysis, Google chose not to publish the actual circuits. Instead, the team released a zero-knowledge proof, built with the SP1 zkVM and a Groth16 SNARK, that allows anyone to mathematically verify the resource estimates without gaining access to the attack details. The paper was accompanied by a responsible disclosure blog post confirming that Google engaged with the U.S. government prior to publication and calling on other quantum research teams to adopt similar practices.

The irony is not lost on the cryptography community: the zero-knowledge proof itself relies on pairing-friendly elliptic curves (BLS12-381) that, while facing a different quantum threat profile than secp256k1, would ultimately be vulnerable to a sufficiently powerful quantum computer running analogous discrete logarithm attacks. The proof is sound only because such machines do not yet exist.
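Groth16 is far too involved to reproduce here. However, the two properties these paragraphs rely on already appear in a much simpler protocol: a verifier is convinced without learning the secret, and soundness rests on a discrete-logarithm assumption. The sketch below uses Schnorr's identification protocol over a tiny prime-order group. It is swapped in purely for illustration and is not what Google used. The prover shows it knows x with y = g^x mod p. A simulator that never sees x then produces transcripts that verify just as well. Anyone who could compute discrete logarithms, for example with Shor's algorithm, could recover x from y and answer every challenge. That is the sense in which such proofs stay sound only while discrete logs stay hard.

```python
import secrets

# Toy group: the subgroup of order Q = 11 inside the integers mod P = 23,
# generated by G = 4. Real systems use groups with ~256-bit order.
P, Q, G = 23, 11, 4


def prove_commit():
    """Prover picks a random nonce r and sends the commitment t = G^r."""
    r = secrets.randbelow(Q)
    return r, pow(G, r, P)


def prove_respond(r, x, c):
    """Prover answers challenge c with s = r + c*x (mod Q)."""
    return (r + c * x) % Q


def verify(y, t, c, s):
    """Verifier accepts iff G^s == t * y^c (mod P)."""
    return pow(G, s, P) == (t * pow(y, c, P)) % P


def simulate(y):
    """Build an accepting transcript WITHOUT knowing x.

    Choosing c and s first and solving for t gives transcripts distributed
    exactly like honest ones (for a verifier that picks c at random), which
    is what "zero-knowledge" means here.
    """
    c = secrets.randbelow(Q)
    s = secrets.randbelow(Q)
    t = (pow(G, s, P) * pow(y, -c, P)) % P   # modular inverse: Python 3.8+
    return t, c, s


x = 7                      # prover's secret (hypothetical value)
y = pow(G, x, P)           # public value the statement is about

r, t = prove_commit()
c = secrets.randbelow(Q)   # verifier's random challenge
s = prove_respond(r, x, c)
print("honest proof verifies:", verify(y, t, c, s))                # True
print("simulated transcript verifies:", verify(y, *simulate(y)))   # True
```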

The reaction on the day of publication was immediate and broad. Bloomberg ran the story within hours. CoinDesk, The Block, Bitcoin Magazine, SecurityWeek, and BeInCrypto all published detailed coverage. Binance founder CZ posted on X urging calm while acknowledging that the migration challenge is real. Ethereum researcher Justin Drake, a co-author on the paper, called it “a monumentous day for quantum computing and cryptography.” Dragonfly Capital’s Haseeb Qureshi described the findings as “serious” and warned that all blockchains need transition plans now. Nic Carter of Castle Island Ventures called the paper “very sobering,” adding that it may not even be the most concerning quantum paper released that day, a reference to a separate Caltech/Oratomic preprint that pushed qubit estimates even lower using neutral-atom architectures. Starknet founder Eli Ben-Sasson called on the Bitcoin community to accelerate work on BIP 360 and other quantum-resistant upgrades. The breadth of the response, from financial media to protocol developers to venture capital, reflects just how far this conversation has moved from academic niche to industry priority.

The Research That Made It Possible

None of these three papers appeared in isolation. They sit at the end of a chain of algorithmic innovation that accelerated from 2023 onward. What follows is not exhaustive, but it captures the key links.

In August 2023, Oded Regev of NYU published the first fundamental improvement to Shor’s factoring algorithm in nearly 30 years. He proposed a multidimensional variant that uses far fewer quantum gates per circuit run at the cost of more qubits. MIT’s Seyoon Ragavan and Vinod Vaikuntanathan then resolved both of Regev’s main bottlenecks at CRYPTO 2024. They cut the qubit overhead using Fibonacci-number exponentiation, and they made the algorithm tolerant to quantum noise by using lattice reduction to filter out corrupt results.

In parallel, Chevignard, Fouque, and Schrottenloher at Univ Rennes/Inria/CNRS/IRISA showed how to perform approximate modular arithmetic with far fewer logical qubits. Their work brought the requirement for RSA-2048 down toward roughly 1,730 logical qubits, though at the cost of trillions of Toffoli gates. Gidney’s 2025 paper combined the Rennes team’s approximate-arithmetic approach with two further Google innovations. The first is “magic state cultivation” (Gidney, Shutty & Jones, 2024), which makes producing high-fidelity quantum resource states nearly as cheap as ordinary gates. The second is “yoked surface codes” (Gidney, Newman, Brooks & Jones, 2025), which triple the storage density of idle qubits.

On the hardware side, Google’s Willow chip demonstrated quantum error correction below the surface code threshold in December 2024: logical error rates fell as the code grew larger. This is early experimental evidence that the error-correction behavior assumed by all of these resource estimates is physically achievable.

Other work contributed to the broader picture, including advances in decoding algorithms, lattice surgery techniques, and quantum compilation methods from groups across academia and industry. The point is not that any single paper cracked the problem, but that a community of researchers, building on each other’s work at increasing pace, drove the resource estimates down far faster than hardware-only roadmaps anticipated.

The Trajectory

What makes these three papers collectively significant is not any single number. It is the trajectory. Consider how the estimated physical qubits needed to break RSA-2048 have evolved:

- 2012 (Fowler et al.): hundreds of millions to ~1 billion

- 2019 (Gidney & Ekerå): ~20 million

- 2025 (Gidney): fewer than 1 million

- 2026 (Iceberg Quantum): fewer than 100,000

Each step represents roughly a 10x to 50x reduction, driven not by hardware improvements but by better algorithms, better error correction codes, and better compilation. Whether this pace continues is not guaranteed. Algorithmic optimization has limits, and each paper’s authors are careful to note where they see diminishing returns under their current assumptions. But since 2012 the trend has consistently moved in one direction, and each time experts predicted the floor had been reached, someone found a new approach.
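You can check the ratios between consecutive entries in the list directly. This sketch makes two simplifying assumptions: it treats each “fewer than” figure as if it were the exact value, and it uses the upper end of the 2012 range. Note also that the last step changes the error-correcting code and connectivity assumptions rather than holding them fixed.

```python
# Physical-qubit estimates for breaking RSA-2048, as listed above.
# 2012 uses the upper end (~1 billion) of the "hundreds of millions to
# ~1 billion" range; the lower end would shrink the first ratio toward 10x.
estimates = [
    ("2012 Fowler et al.", 1_000_000_000),
    ("2019 Gidney & Ekerå", 20_000_000),
    ("2025 Gidney", 1_000_000),
    ("2026 Iceberg Quantum", 100_000),
]

for (prev_label, prev), (label, current) in zip(estimates, estimates[1:]):
    print(f"{prev_label} -> {label}: {prev / current:.0f}x fewer qubits")

overall = estimates[0][1] / estimates[-1][1]
print(f"2012 -> 2026 overall: {overall:,.0f}x")
```

The steps come out at 50x, 20x, and 10x, about four orders of magnitude in total.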

Hardware is also advancing. Beyond Google’s below-threshold Willow result, Quantinuum’s Helios processor has achieved 48 logical qubits from 98 physical qubits, an impressive encoding ratio, though these logical qubits are not yet at the fidelity or scale required for cryptographic attacks. IBM, IonQ, and others have published multi-year roadmaps targeting systems of hundreds of thousands of qubits by the late 2020s and early 2030s.

An important caveat: each reduction in qubit count shifts the difficulty to harder engineering problems. Sustaining fault-tolerant computation across hundreds of thousands of qubits for minutes or days, with real-time decoding of terabytes of measurement data, remains an unsolved systems engineering challenge at scale. Gidney himself has noted that under his current model, he does not see another 10x reduction without changing assumptions. Iceberg Quantum changed the assumptions by moving to QLDPC codes, but that introduces its own set of unsolved engineering problems around connectivity, decoding latency, and fabrication.
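A back-of-envelope calculation shows why real-time decoding is such a hard systems problem. The sketch assumes a surface-code-style layout in which roughly half the physical qubits are measurement qubits. It also assumes each measurement qubit produces one syndrome bit per one-microsecond cycle, which is the cycle time Gidney assumes. The ancilla fraction, bits per measurement, and lack of compression are simplifying assumptions, not figures from the papers.

```python
def syndrome_data_rate(physical_qubits: int,
                       cycle_time_s: float = 1e-6,
                       ancilla_fraction: float = 0.5) -> float:
    """Bytes per second of raw syndrome bits a real-time decoder must ingest.

    Rough assumptions: about half the physical qubits are measurement
    ancillas, each yields one bit per error-correction cycle, and nothing
    is compressed.
    """
    bits_per_cycle = physical_qubits * ancilla_fraction
    return bits_per_cycle / cycle_time_s / 8


SECONDS_PER_MINUTE = 60
SECONDS_PER_WEEK = 7 * 24 * 3600

rsa_rate = syndrome_data_rate(1_000_000)   # Gidney-scale RSA-2048 machine
ecc_rate = syndrome_data_rate(500_000)     # ECDLP-256-scale machine

print(f"RSA-2048 machine: {rsa_rate / 1e9:.1f} GB/s, "
      f"~{rsa_rate * SECONDS_PER_WEEK / 1e15:.0f} PB over a week")
print(f"ECDLP-256 machine: {ecc_rate / 1e9:.2f} GB/s, "
      f"~{ecc_rate * 9 * SECONDS_PER_MINUTE / 1e12:.0f} TB over nine minutes")
```

Under these assumptions, the RSA-2048 machine produces about 62.5 GB/s, roughly 38 PB over a week-long run. The ECDLP-256 machine produces about 31 GB/s, roughly 17 TB during the nine-minute post-priming phase alone. The decoder has to keep pace with all of it, with microsecond-scale latency.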

The Policy and Regulatory Landscape

These technical breakthroughs are landing on an increasingly active policy landscape.

NIST finalized its first three post-quantum cryptography standards in August 2024: ML-KEM, ML-DSA, and SLH-DSA. Falcon, the fourth algorithm NIST selected in 2022, is being standardized separately as FN-DSA. A fifth algorithm, HQC, was selected in March 2025 as a code-based backup to the lattice-based ML-KEM. NIST’s deprecation timeline (IR 8547) calls for quantum-vulnerable algorithms to be deprecated after 2030 and disallowed after 2035, with the most widely used schemes like RSA-2048 and ECDSA with P-256 explicitly in scope.

In the United States, the Quantum Computing Cybersecurity Preparedness Act requires federal agencies to inventory vulnerable systems and report migration progress annually. NSA’s CNSA 2.0 framework mandates that all new national security systems be quantum-safe by January 2027. Trump’s June 2025 executive order explicitly references Biden’s NSM-10 as the foundational document for PQC transition, a rare piece of bipartisan continuity in cybersecurity policy.

In Europe, an 18-nation joint statement called for high-risk use cases to complete PQC migration by 2030, with broad adoption by 2035. The EU Cyber Resilience Act is evolving toward a “Quantum-Safe-by-Design” framework.

2026 has been designated the “Year of Quantum Security,” a global initiative backed by the FBI, NIST, and CISA. The Quantum Insider’s parent company, Resonance Alliance, is driving the Year of Quantum Security 2026 (YQS2026) as a separate industry initiative, working with partners across the enterprise, government, and standards communities to accelerate awareness and migration readiness.

Google has set a 2029 internal deadline for its own post-quantum cryptography migration, a signal that carries particular weight given that Google’s own researchers are producing the resource estimates that define the threat.

What This Means for Organizations

The “harvest now, decrypt later” threat is no longer hypothetical. State actors and sophisticated adversaries are already collecting encrypted data with the expectation of decrypting it when quantum computers arrive. Any data that must remain confidential into the 2030s is at risk today.

For most organizations, the immediate action items are clear. Conduct a cryptographic inventory. Identify systems using RSA, ECC, and Diffie-Hellman. Prioritize data and systems with long confidentiality horizons. Begin pilot implementations of NIST’s standardized PQC algorithms. Build crypto-agility into new system designs.
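The triage step can be sketched with Michele Mosca’s widely cited rule of thumb. A system is at risk if the years its data must stay secret, plus the years needed to migrate it, exceed the years until a cryptographically relevant quantum computer (CRQC) exists. For signing keys, secrecy time is zero and migration time alone matters. The inventory entries and the eight-year planning horizon below are hypothetical placeholders, not predictions. A real inventory would come from scanning certificates, TLS configurations, and code.

```python
from dataclasses import dataclass

# Public-key families broken by Shor's algorithm.
QUANTUM_VULNERABLE = {"RSA", "DH", "DSA", "ECDH", "ECDSA", "EdDSA"}


@dataclass
class Asset:
    name: str
    algorithm: str          # public-key family in use
    secrecy_years: int      # how long protected data must stay confidential
    migration_years: int    # estimated time to migrate this system


def at_risk(asset: Asset, years_until_crqc: int) -> bool:
    """Mosca-style test: secrecy time + migration time > time to a CRQC."""
    if asset.algorithm not in QUANTUM_VULNERABLE:
        return False
    return asset.secrecy_years + asset.migration_years > years_until_crqc


inventory = [  # hypothetical entries for illustration only
    Asset("patient-records-archive", "RSA", secrecy_years=25, migration_years=3),
    Asset("public-website-tls", "ECDH", secrecy_years=0, migration_years=1),
    Asset("firmware-signing", "ECDSA", secrecy_years=0, migration_years=9),
    Asset("new-vpn-pilot", "ML-KEM", secrecy_years=10, migration_years=0),
]

YEARS_UNTIL_CRQC = 8  # planning assumption, not a forecast

flagged = sorted(
    (a for a in inventory if at_risk(a, YEARS_UNTIL_CRQC)),
    key=lambda a: a.secrecy_years + a.migration_years,
    reverse=True,  # longest exposure window first
)
for asset in flagged:
    window = asset.secrecy_years + asset.migration_years
    print(f"{asset.name} ({asset.algorithm}): {window} years > {YEARS_UNTIL_CRQC}")
```

The long-lived archive ranks first because of harvest-now-decrypt-later exposure. The firmware-signing system is flagged because its migration alone outlasts the planning horizon.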

For the cryptocurrency ecosystem specifically, Google’s paper provides a stark checklist: stop reusing wallet addresses, avoid exposing public keys unnecessarily, support proposals like BIP-360 (Pay-to-Merkle-Root), implement private mempools, and begin the engineering work to migrate transaction signing to post-quantum schemes.

The window for orderly migration is open. It will not stay open indefinitely. These three papers make that case with more precision than anything published before them.

For organizations looking to understand where they stand and what to do next, the Year of Quantum Security 2026 initiative provides resources, frameworks, and community to support the transition.

For more coverage of these papers and the broader quantum security landscape, see TQI’s reporting on Gidney’s RSA-2048 estimate, Iceberg Quantum’s Pinnacle architecture, and Google’s ECDLP-256 whitepaper.