Insider Brief

- Quantum machine learning (QML) explores whether quantum computers can improve specific machine learning tasks beyond classical capabilities.

- Current QML systems remain experimental, with most practical AI workloads still handled more effectively by classical hardware and models.

- The strongest near-term QML applications are expected in chemistry, materials science, optimization, and high-dimensional data analysis.

Machine learning already drives decisions across finance, drug discovery, logistics, and manufacturing. But the datasets and problems involved are growing in ways that strain classical hardware. Quantum Machine Learning (QML) asks whether quantum computers, with their ability to operate in exponentially large state spaces, could handle certain machine learning tasks more efficiently than classical systems.

For most practical problems today, QML does not outperform classical methods. The foundations are being built, however, and early results in specific domains are beginning to show what may be possible.

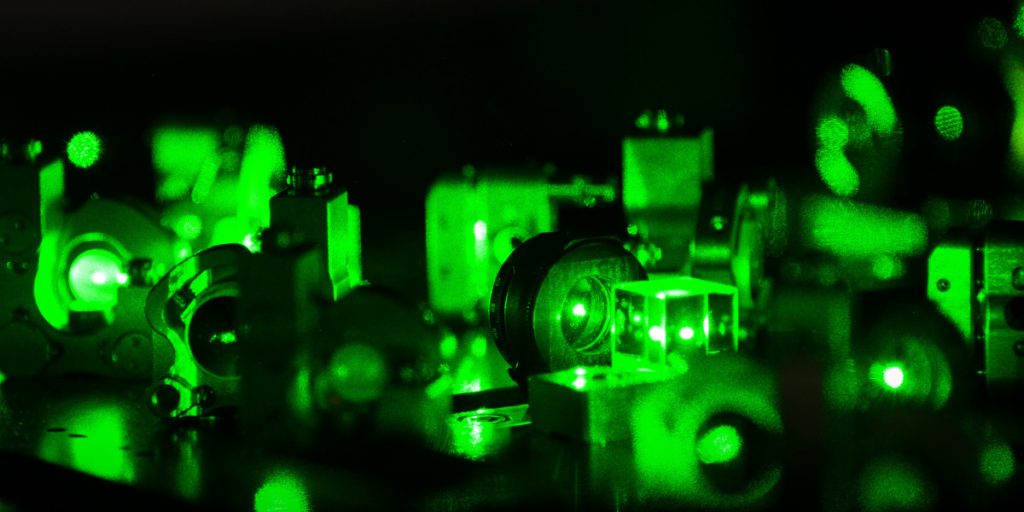

QML involves encoding data into quantum states, processing those states through quantum circuits, and extracting classical outputs from quantum measurements. The potential advantage comes from properties specific to quantum mechanics: superposition lets a register of n qubits carry amplitudes over all 2^n basis states at once, entanglement creates correlations between qubits that have no classical equivalent, and interference reinforces the amplitudes of useful outcomes while canceling those of less useful ones.
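Interference is easiest to see in a tiny textbook case rather than a full QML model. The sketch below assumes an exact two-qubit simulation in plain Python and uses a hypothetical helper, `grover_2qubit`, to run one iteration of Grover's search: the oracle flips the sign of one amplitude, and the diffusion step reflects every amplitude about the mean, so the unmarked amplitudes cancel and the marked one is amplified.

```python
import math


def grover_2qubit(marked: int) -> list[float]:
    """Measurement probabilities after one Grover iteration on 2 qubits (4 basis states)."""
    n_states = 4
    # Uniform superposition |s>: every basis state starts with amplitude 1/2
    amps = [1 / math.sqrt(n_states)] * n_states
    # Oracle: flip the phase (sign) of the marked basis state
    amps[marked] = -amps[marked]
    # Diffusion operator 2|s><s| - I: reflect every amplitude about the mean
    mean = sum(amps) / n_states
    amps = [2 * mean - a for a in amps]
    # Born rule: probability = |amplitude|^2
    return [a * a for a in amps]


probs = grover_2qubit(marked=2)
print([round(p, 6) for p in probs])  # [0.0, 0.0, 1.0, 0.0]
```

With four basis states, a single iteration drives the marked state's probability to 1; larger search spaces need a number of iterations roughly proportional to the square root of their size.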

How Quantum Machine Learning Works

Three technical concepts underpin most QML approaches: quantum feature maps, variational quantum circuits, and quantum kernels.

Quantum Feature Maps

A feature map starts from an initial set of measured data and builds derived values intended to be informative and non-redundant, facilitating learning and generalization. In classical machine learning this means mapping raw inputs (pixel intensities for images, word frequencies for text) into a representation a model can work with. Quantum feature maps extend this idea by encoding classical data into quantum states, placing it in a feature space whose dimension grows exponentially with the number of qubits. Each added qubit doubles that dimension, so n qubits span a 2^n-dimensional space; 50 qubits already correspond to more than 10^15 complex amplitudes, far too many to store and manipulate efficiently on classical hardware. This may allow quantum systems to capture complex relationships in data that would be computationally expensive to represent classically.
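A minimal sketch of one common choice, angle encoding, in which each feature sets the angle of a single-qubit RY rotation. The helper `angle_encode` is hypothetical, and the statevector is simulated directly in Python to show how the number of amplitudes doubles with every qubit. A product-state encoding like this one is easy to simulate classically, so the large vector alone does not imply any quantum advantage.

```python
import math


def angle_encode(features: list[float]) -> list[float]:
    """Encode feature x_i as RY(x_i)|0> on qubit i and return the joint statevector."""
    state = [1.0]  # amplitude of the empty (zero-qubit) register
    for x in features:
        qubit = [math.cos(x / 2), math.sin(x / 2)]  # RY(x)|0> has real amplitudes
        # Kronecker (tensor) product: appending one qubit doubles the vector length
        state = [a * b for a in state for b in qubit]
    return state


for n in (1, 2, 4, 10):
    psi = angle_encode([0.3] * n)
    print(n, "qubits ->", len(psi), "amplitudes")
```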

Variational Quantum Circuits

PennyLane’s documentation describes variational circuits as quantum algorithms that depend on tunable parameters, and can therefore be optimized. In practice, many Quantum Machine Learning algorithms use this approach – a parameterized quantum circuit is constructed, data is encoded into it, quantum operations are applied, and a classical optimizer adjusts the circuit’s parameters to minimize a cost function – similar to how weights are trained in a neural network. As Xanadu’s research team explains in the PennyLane framework paper, PennyLane’s core feature is the ability to compute gradients of variational quantum circuits in a way compatible with classical techniques such as backpropagation, extending standard automatic differentiation to include quantum and hybrid computations. This hybrid approach makes it possible to run Quantum Machine Learning on current noisy quantum hardware, where fully quantum training is not yet practical.
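The hybrid loop described above can be sketched in plain Python under a few assumptions: the "quantum" step is a one-qubit circuit RX(b)·RY(a)|0⟩ simulated exactly, the cost is the expectation value ⟨Z⟩, and the classical optimizer is plain gradient descent. Gradients come from the parameter-shift rule, which evaluates the circuit at θ ± π/2 and is exact for rotation gates like these. The function names are illustrative only and are not PennyLane's API.

```python
import math


def expval_z(params):
    """Simulated quantum step: <Z> after RX(b) RY(a) |0>, from the exact statevector."""
    a, b = params
    # RY(a)|0> has real amplitudes [cos(a/2), sin(a/2)]
    s0, s1 = math.cos(a / 2), math.sin(a / 2)
    # RX(b) = [[cos(b/2), -i sin(b/2)], [-i sin(b/2), cos(b/2)]]
    c, s = math.cos(b / 2), math.sin(b / 2)
    t0 = c * s0 - 1j * s * s1
    t1 = -1j * s * s0 + c * s1
    return abs(t0) ** 2 - abs(t1) ** 2  # P(0) - P(1)


def parameter_shift_grad(f, params):
    """Two extra circuit evaluations per parameter, shifted by +/- pi/2 (exact for RX/RY/RZ)."""
    grad = []
    for i in range(len(params)):
        plus, minus = list(params), list(params)
        plus[i] += math.pi / 2
        minus[i] -= math.pi / 2
        grad.append((f(plus) - f(minus)) / 2)
    return grad


# Classical optimizer: gradient descent on the circuit parameters
params, learning_rate = [0.1, 0.2], 0.4
for step in range(100):
    grad = parameter_shift_grad(expval_z, params)
    params = [p - learning_rate * g for p, g in zip(params, grad)]

print(f"final cost {expval_z(params):.6f} with params {params}")
```

On real hardware, each call to `expval_z` would be replaced by circuit executions and averaged measurements; frameworks such as PennyLane automate this differentiation step.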

Quantum Kernels

In classical machine learning, kernel functions measure the similarity between data points as if they had been mapped into a higher-dimensional space, without computing that mapping explicitly; in that space, classification often becomes easier. A dataset that cannot be separated by a straight line in two dimensions might become linearly separable in a higher-dimensional space. Support Vector Machines (SVMs) rely on this technique extensively.
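A small classical illustration, using hypothetical XOR-style data: four points that no straight line separates in two dimensions become separable after the degree-2 feature map φ(x) = (x₁², √2·x₁x₂, x₂²), and the polynomial kernel k(x, y) = (x·y)² returns the same inner product as φ(x)·φ(y) without ever building φ.

```python
import math


def poly_kernel(x, y):
    """Degree-2 polynomial kernel k(x, y) = (x . y)^2, computed in the original 2-D space."""
    return (x[0] * y[0] + x[1] * y[1]) ** 2


def explicit_features(x):
    """The 3-D feature map phi(x) whose inner product the kernel computes implicitly."""
    return (x[0] ** 2, math.sqrt(2) * x[0] * x[1], x[1] ** 2)


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


# XOR-style data: no straight line in 2-D separates the two classes
points = [(1, 1), (-1, -1), (1, -1), (-1, 1)]
labels = [+1, +1, -1, -1]

for p, label in zip(points, labels):
    # In feature space, the sign of the middle coordinate alone separates the classes
    print(p, label, round(explicit_features(p)[1], 3))

# The kernel matches the feature-space inner product without constructing phi
x, y = (0.5, -2.0), (1.5, 0.25)
print(poly_kernel(x, y), dot(explicit_features(x), explicit_features(y)))
```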

Quantum kernels extend the same idea using quantum circuits. As Abby Mitchell, then a developer advocate on IBM’s quantum team, explained, when classical data is too complex for a simple linear boundary, developers use kernel functions to map it into higher-dimensional feature spaces. But classical kernels can perform poorly, and their compute time can grow exponentially as data complexity increases. Quantum computers take this further by encoding data into quantum circuits whose feature spaces are far larger than what classical kernels can efficiently reach, since the state space doubles with every added qubit.

In practice, the workflow involves encoding data into quantum circuits, using a sampler primitive to obtain quasi-probabilities, building a kernel matrix from those probabilities, and feeding that matrix into a classical SVM to predict labels. Mitchell’s walkthrough uses Qiskit Runtime to demonstrate this pipeline. In 2021, IBM researchers published a proof that quantum kernels can offer an exponential speedup for a carefully constructed classification problem based on the discrete logarithm, which is believed to be hard for classical computers. It remains one of the stronger theoretical results supporting near-term QML.
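The kernel-matrix step of that pipeline can be sketched as follows, with two stated simplifications: overlaps are computed from exact statevectors instead of being estimated with a sampler on Qiskit Runtime, and the feature map is a hypothetical product of RY rotations rather than an entangling map. The fidelity kernel used here, k(x, y) = |⟨φ(x)|φ(y)⟩|², is one common quantum-kernel definition; because this simple encoding is classically easy to compute, the example shows the mechanics only, not an advantage.

```python
import math


def encode(x):
    """Hypothetical feature map: RY(x_i)|0> on qubit i, returned as a real statevector."""
    state = [1.0]
    for xi in x:
        state = [a * b for a in state for b in (math.cos(xi / 2), math.sin(xi / 2))]
    return state


def fidelity_kernel(x, y):
    """k(x, y) = |<phi(x)|phi(y)>|^2, the overlap a sampler-based circuit would estimate."""
    overlap = sum(a * b for a, b in zip(encode(x), encode(y)))  # amplitudes are real here
    return overlap ** 2


def kernel_matrix(data):
    """Gram matrix of pairwise kernel values, ready for an SVM with a precomputed kernel."""
    return [[fidelity_kernel(x, y) for y in data] for x in data]


X_train = [(0.1, 0.4), (0.2, 0.5), (2.9, 2.6), (3.0, 2.8)]
K = kernel_matrix(X_train)
for row in K:
    print([round(v, 3) for v in row])
```

In a full pipeline, a square matrix like `K` would go to a classical SVM that accepts precomputed kernels, for example scikit-learn's `SVC(kernel="precomputed")`, with a test-versus-train kernel matrix supplied at prediction time.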

Quantum kernels remain highly experimental but represent one of the more theoretically grounded paths to near-term quantum advantage in machine learning.

Key Quantum Machine Learning Algorithms

Variational Quantum Eigensolver (VQE)

VQE estimates the ground-state energy of a quantum system – a calculation central to molecular simulation and materials science. It uses a variational circuit to prepare quantum states and a classical optimizer to minimize energy. VQE works on current noisy hardware because the circuits are relatively shallow, meaning fewer operations and less noise accumulation. Applications include simulating battery materials, enzyme reactions, and nitrogen fixation chemistry.
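A toy VQE run, assuming a one-qubit Hamiltonian H = Z + 0.5·X chosen only for illustration (real molecular Hamiltonians need many qubits and many Pauli terms). The ansatz is RY(θ)|0⟩, the energy ⟨H⟩ = ⟨Z⟩ + 0.5⟨X⟩ is computed from the exact statevector where hardware would estimate it from measurements, and gradient descent with parameter-shift gradients plays the classical optimizer. The result can be checked against the exact ground energy, -sqrt(1 + 0.5²).

```python
import math

# Toy one-qubit Hamiltonian H = Z + 0.5 X (an illustrative assumption, not a molecule)
COEFF_Z, COEFF_X = 1.0, 0.5


def energy(theta):
    """<psi(theta)|H|psi(theta)> for the ansatz |psi> = RY(theta)|0>."""
    a, b = math.cos(theta / 2), math.sin(theta / 2)  # real amplitudes of |0> and |1>
    exp_z = a * a - b * b  # <Z>
    exp_x = 2 * a * b      # <X>
    return COEFF_Z * exp_z + COEFF_X * exp_x


def gradient(theta):
    # Parameter-shift rule, valid for the single RY rotation in the ansatz
    return (energy(theta + math.pi / 2) - energy(theta - math.pi / 2)) / 2


theta, lr = 0.5, 0.3
for _ in range(200):
    theta -= lr * gradient(theta)  # classical optimizer step

exact = -math.sqrt(COEFF_Z ** 2 + COEFF_X ** 2)  # lowest eigenvalue of Z + 0.5 X
print(f"VQE estimate: {energy(theta):.6f}, exact ground energy: {exact:.6f}")
```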

Quantum Approximate Optimization Algorithm (QAOA)

QAOA targets combinatorial optimization problems – finding the best solution among many possibilities. It prepares a superposition of candidate solutions, applies problem-specific operations, and uses classical optimization to increase the probability of measuring good solutions. QAOA has been explored for portfolio optimization in finance, supply chain logistics, and resource allocation.
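A minimal depth-one (p = 1) QAOA sketch for MaxCut on a hypothetical three-node triangle graph, simulated exactly with Python complex numbers. The cost layer applies a phase proportional to each candidate partition's cut size, the mixer applies exp(-iβX) to every qubit, and a coarse grid search over (γ, β) stands in for the classical optimizer. MaxCut is used here only as a standard example; portfolio or logistics problems would need their own cost functions.

```python
import cmath
import math
from itertools import product

# Hypothetical example graph: a triangle; the best partition cuts 2 of its 3 edges
EDGES = [(0, 1), (1, 2), (0, 2)]
N_QUBITS = 3


def bit(z, q):
    """Value (0 or 1) of qubit q in computational basis state z."""
    return (z >> q) & 1


def cut_value(z):
    """Number of edges whose endpoints land on different sides of partition z."""
    return sum(bit(z, i) != bit(z, j) for i, j in EDGES)


def qaoa_state(gamma, beta):
    """Statevector after one QAOA layer (p = 1) applied to |+>^N."""
    dim = 2 ** N_QUBITS
    # Uniform superposition over all 2^N candidate partitions
    state = [complex(1 / math.sqrt(dim))] * dim
    # Cost layer exp(-i*gamma*C) is diagonal: each basis state picks up a phase
    state = [amp * cmath.exp(-1j * gamma * cut_value(z)) for z, amp in enumerate(state)]
    # Mixer exp(-i*beta*X) on every qubit = cos(beta) I - i sin(beta) X
    c, s = math.cos(beta), math.sin(beta)
    for q in range(N_QUBITS):
        for z in range(dim):
            if bit(z, q) == 0:
                z1 = z | (1 << q)
                a0, a1 = state[z], state[z1]
                state[z] = c * a0 - 1j * s * a1
                state[z1] = -1j * s * a0 + c * a1
    return state


def expected_cut(gamma, beta):
    """Expected cut size when the QAOA state is measured in the computational basis."""
    return sum(abs(amp) ** 2 * cut_value(z) for z, amp in enumerate(qaoa_state(gamma, beta)))


# Classical outer loop: a coarse grid search stands in for a real optimizer
grid = [k * math.pi / 40 for k in range(40)]
best_value, best_gamma, best_beta = max(
    (expected_cut(g, b), g, b) for g, b in product(grid, grid)
)
print(f"best <C> = {best_value:.3f} at gamma={best_gamma:.3f}, beta={best_beta:.3f}")
```

Measuring the uniform superposition alone gives a random cut (1.5 edges on average for this graph), so any value above 1.5 reflects the algorithm shifting probability toward better solutions.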

Quantum Support Vector Machines (QSVM)

QSVM extends classical SVMs by computing kernels using quantum circuits. The quantum kernel maps data points into quantum states, applies controlled operations, and measures overlap. QSVM has shown promise in classification tasks involving high-dimensional data, though practical advantage over classical SVMs has not yet been demonstrated at commercially relevant scale.
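The text does not say which overlap circuit a given QSVM uses. One textbook option is the swap test, sketched below with an exact three-qubit simulation: an ancilla in superposition controls a SWAP of the two data qubits and is measured after a second Hadamard, and the probability of reading 0 is (1 + |⟨ψ|φ⟩|²)/2, so repeated runs estimate the overlap. Other implementations instead run the encoding circuit followed by its inverse. The helper `swap_test_p0` is hypothetical and handles only single-qubit states.

```python
import math


def swap_test_p0(psi, phi):
    """Probability that the ancilla reads 0 in a swap test on one-qubit states psi, phi."""
    # Joint state |0>_anc (x) |psi> (x) |phi>, indexed as anc*4 + p*2 + f
    state = [0j] * 8
    for p in range(2):
        for f in range(2):
            state[p * 2 + f] = psi[p] * phi[f]
    h = 1 / math.sqrt(2)

    def hadamard_on_ancilla(s):
        out = [0j] * 8
        for rest in range(4):
            a0, a1 = s[rest], s[4 + rest]
            out[rest] = h * (a0 + a1)
            out[4 + rest] = h * (a0 - a1)
        return out

    state = hadamard_on_ancilla(state)
    # Controlled-SWAP: when the ancilla is 1, exchange the data qubits (|01> <-> |10>)
    state[4 + 1], state[4 + 2] = state[4 + 2], state[4 + 1]
    state = hadamard_on_ancilla(state)
    return sum(abs(a) ** 2 for a in state[:4])


psi = [math.cos(0.4), math.sin(0.4)]
phi = [math.cos(1.1), 1j * math.sin(1.1)]  # complex amplitudes are allowed
p0 = swap_test_p0(psi, phi)
overlap_sq = abs(sum(a.conjugate() * b for a, b in zip(psi, phi))) ** 2
print(round(p0, 6), round((1 + overlap_sq) / 2, 6))
```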

Quantum Neural Networks (QNNs)

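QNNs are quantum analogs of classical neural networks: layers of quantum gates with trainable parameters. Classical networks are trained by backpropagating through stored intermediate values, but a quantum circuit's intermediate states cannot be read out without disturbing them. On hardware, QNN gradients are therefore estimated from repeated measurements using techniques such as the parameter-shift rule, which evaluates the circuit at shifted parameter values. Because each evaluation relies on a finite number of measurement shots, the resulting gradients are noisy on current hardware.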
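To illustrate why these gradients are noisy, the sketch below applies the parameter-shift rule to a hypothetical one-qubit circuit RY(θ)|0⟩, estimating each ⟨Z⟩ from a finite number of simulated shots drawn with Python's `random` module. It models shot noise only; real devices add gate and readout errors on top.

```python
import math
import random


def sampled_expval(theta, shots, rng):
    """Estimate <Z> after RY(theta)|0> from a finite number of simulated measurement shots."""
    p0 = math.cos(theta / 2) ** 2  # Born-rule probability of reading out 0
    zeros = sum(rng.random() < p0 for _ in range(shots))
    return (2 * zeros - shots) / shots  # outcome 0 counts as +1, outcome 1 as -1


def shift_gradient(f, theta):
    """Parameter-shift rule for a single Pauli rotation: exact whenever f is exact."""
    return (f(theta + math.pi / 2) - f(theta - math.pi / 2)) / 2


rng = random.Random(7)  # fixed seed so the run is reproducible
theta = 0.8
exact = -math.sin(theta)  # analytic derivative of <Z> = cos(theta)
noiseless = shift_gradient(math.cos, theta)

for shots in (100, 10_000):
    estimates = [shift_gradient(lambda t: sampled_expval(t, shots, rng), theta) for _ in range(20)]
    mean = sum(estimates) / len(estimates)
    print(f"{shots:>6} shots: mean {mean:+.4f}, spread {max(estimates) - min(estimates):.4f}")

print(f"analytic {exact:+.4f}, noiseless parameter shift {noiseless:+.4f}")
```

The statistical error in each expectation value shrinks roughly as one over the square root of the shot count, so a 100-fold increase in shots narrows the spread of gradient estimates by about a factor of 10.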

Quantum Boltzmann Machines (QBM)

QBMs adapt classical Boltzmann Machines to the quantum domain. Classical Boltzmann Machines are probabilistic models used for unsupervised learning. QBMs add quantum effects such as superposition and tunneling, for example through a transverse-field term in the model's energy function, with the aim of sampling from probability distributions more efficiently. They remain largely theoretical but could provide advantages for generative tasks if hardware matures sufficiently.
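For reference, the classical model that QBMs generalize assigns each configuration s of binary units a probability p(s) = exp(-E(s))/Z, where E is an energy set by weights and biases and Z sums over all configurations. The sketch below enumerates all 2³ states of a hypothetical three-unit model with made-up couplings. Exact enumeration like this grows exponentially with the number of units, which is why practical training relies on sampling; the quantum version, which adds non-commuting terms to the energy, is not shown.

```python
import math
from itertools import product

# Hypothetical couplings W and biases b for a 3-unit Boltzmann machine (units s_i in {-1, +1})
W = {(0, 1): 1.0, (1, 2): -0.5, (0, 2): 0.3}
b = [0.2, -0.1, 0.0]


def energy(s):
    """Ising-style energy E(s) = -sum_ij W_ij s_i s_j - sum_i b_i s_i."""
    pair = sum(w * s[i] * s[j] for (i, j), w in W.items())
    field = sum(bi * si for bi, si in zip(b, s))
    return -pair - field


states = list(product((-1, 1), repeat=3))
weights = [math.exp(-energy(s)) for s in states]
Z = sum(weights)  # partition function: the normalizer that becomes intractable at scale
probs = {s: w / Z for s, w in zip(states, weights)}

# The three most probable configurations
for s, p in sorted(probs.items(), key=lambda kv: -kv[1])[:3]:
    print(s, round(p, 3))
```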

Emerging Approaches: Quantum Transfer Learning and Generative Models

Quantum transfer learning adapts pre-trained quantum circuits to new tasks with minimal retraining, borrowing from the success of classical transfer learning in reducing training time and data requirements. In February 2026, Lockheed Martin and Xanadu announced a joint research initiative focused on quantum generative models – exploring how quantum-native Fourier-based operations could capture data structure in ways classical methods cannot, with potential applications in defense, finance, and pharmaceuticals.

Quantum Machine Learning vs. Classical Machine Learning

As AI Insider has covered, classical machine learning systems are built to recognize patterns, make predictions, and classify information based on training data. They run on highly optimized GPU and TPU hardware with mature software frameworks. In most practical scenarios today, QML does not outperform these systems. Quantum approaches still face noise, limited qubit counts, and training challenges that classical systems have largely resolved.

| Aspect | Classical ML | Quantum ML | Where Quantum May Win |
|---|---|---|---|
| Data encoding | Explicit feature vectors | Quantum state superposition | High-dimensional, complex patterns |
| Processing | Deterministic/stochastic | Quantum interference, entanglement | Exponential search spaces |
| Scalability | Proven at billions of parameters | Limited by current qubit counts | Eventually, but not yet |
| Noise tolerance | Robust | Sensitive to decoherence | Improving through hybrid approaches |
| Training | Well-established | Barren plateaus, gradient issues | Active research area |
| Real-world deployment | Production-ready | Experimental | Specific domains (chemistry, optimization) |

The domains where near-term quantum advantage in ML is most plausible include molecular and materials simulation (where quantum computers naturally represent quantum systems), combinatorial optimization (where QAOA and quantum annealing target exponential search spaces), and high-dimensional classification (where quantum kernels may eventually outperform classical kernels once hardware improves).

Companies Working on Quantum Machine Learning

The following is a non-exhaustive selection. The landscape is broad and evolving rapidly, and inclusion or omission should not be interpreted as a ranking or endorsement.

Google Quantum AI has published influential research on quantum feature maps, quantum kernels, and variational algorithms. Cirq, Google’s open-source framework for quantum circuits, is widely used in QML research.

IBM Quantum provides Qiskit, along with its Qiskit Machine Learning extension, and makes quantum processors available via the cloud through IBM Quantum Platform (formerly IBM Quantum Experience).

Xanadu developed PennyLane, an open-source machine learning library for quantum computers that integrates with TensorFlow and PyTorch. Xanadu focuses on photonic quantum computing and QML applications.

Zapata Quantum, which re-emerged in 2025 after restructuring, specializes in hybrid quantum-classical workflows through its Orquestra platform, targeting drug discovery and materials science applications.

Multiverse Computing applies QML to financial optimization, with research targeting portfolio optimization, risk assessment, and trading strategy optimization.

D-Wave Systems specializes in quantum annealing hardware designed for combinatorial optimization, with systems used by enterprises including NASA and Volkswagen.

Menten AI develops a software platform for protein design powered by machine learning and quantum computing, targeting drug discovery and peptide therapeutics.

Real-World Quantum Machine Learning Applications

Drug Discovery and Molecular Simulation

Simulating molecular properties is the natural fit for quantum computers because molecules are fundamentally quantum systems. Roche has continued QML experiments for drug discovery through partnerships with Quantinuum. Pharmaceutical companies including Boehringer Ingelheim and Merck are exploring quantum-accelerated drug discovery with dedicated QML teams.

In early 2025, IonQ and Ansys announced a milestone – a hybrid quantum-classical algorithm, when simulated, delivered up to 12% faster processing than classical methods on a blood pump dynamics simulation. IonQ Forte hardware was used separately to validate the quantum algorithm on smaller-scale instances. The result is an early indicator of how quantum approaches could accelerate engineering simulation, though the full-scale speedup was achieved via simulation rather than direct quantum hardware execution.

Financial Optimization

Finance is a major target because portfolio optimization involves exploring exponentially large solution spaces. Banks including JPMorgan Chase and Barclays have published research on quantum algorithms for financial problems. Risk assessment, fraud detection, and trading strategy optimization are active QML research areas.

Materials Science

Discovering new materials for batteries, semiconductors, and catalysts is computationally expensive on classical hardware. Mercedes-Benz and PsiQuantum have co-authored research on quantum simulation for EV battery electrolyte molecules. BMW and Quantinuum have collaborated on simulating chemical reactions in fuel cells.

Manufacturing and Quality Control

BMW and Pasqal have collaborated on using quantum algorithms for metal forming process simulation and crash testing, applying differential equation solvers to manufacturing optimization. Quantum-inspired tensor network methods have separately shown promise in defect detection tasks, though this work uses classical hardware with quantum-inspired mathematical techniques rather than quantum processors directly.

For a more detailed breakdown of quantum computing use cases across industries, see TQI’s full analysis – 8 Quantum Computing Use Cases: Real Applications Across Industries.

Challenges and Limitations

Noise and Error Rates

Current quantum processors are noisy. Environmental interference causes quantum states to lose information (decoherence), and imperfect gates introduce errors that accumulate as circuits grow deeper. QML algorithms must be designed to tolerate this noise, which remains an active research challenge.
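A minimal sketch of how errors accumulate with depth, assuming each gate is followed by a single-qubit depolarizing channel with a hypothetical error probability p. The circuit is an even number of X gates, so the ideal ⟨Z⟩ stays at 1; with noise, the Bloch vector shrinks by a factor of (1 - p) after every gate, so ⟨Z⟩ decays as (1 - p)^depth. Real hardware noise is more varied than this model.

```python
def apply_x(bloch):
    """Ideal Pauli-X gate: a pi rotation about the x axis of the Bloch sphere."""
    x, y, z = bloch
    return (x, -y, -z)


def depolarize(bloch, p):
    """Depolarizing channel rho -> (1 - p) rho + p I/2 shrinks the Bloch vector by (1 - p)."""
    return tuple((1 - p) * c for c in bloch)


def run_circuit(n_gates, p):
    """Apply n_gates noisy X gates to |0> and return the measured <Z>."""
    bloch = (0.0, 0.0, 1.0)  # start in |0>, so <Z> = 1
    for _ in range(n_gates):
        bloch = depolarize(apply_x(bloch), p)
    return bloch[2]


p = 0.01  # hypothetical per-gate error probability
for depth in (2, 20, 200):
    print(depth, "gates -> <Z> =", round(run_circuit(depth, p), 4), "(ideal: 1.0)")
```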

Barren Plateaus

Training variational quantum circuits faces a fundamental problem: the loss landscape can become exponentially flat across large regions of parameter space, making gradient-based training nearly impossible. In sufficiently expressive, randomly initialized circuits, the variance of the gradient shrinks exponentially with the number of qubits, so the optimizer struggles to distinguish a direction of improvement from measurement noise without an exponentially large number of shots. Researchers are studying problem-specific circuit designs and structured initialization strategies, but barren plateaus remain one of the primary open challenges limiting practical QML.
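A toy numerical check of this effect, assuming a simple product ansatz of RY(θᵢ) gates and a global cost C = ⟨Z on every qubit⟩ = ∏ cos θᵢ rather than a deep random circuit. For parameters drawn uniformly at random, the variance of ∂C/∂θ₀ equals (1/2)ⁿ, so it halves with every added qubit; the Monte Carlo estimate below should track that value.

```python
import math
import random


def global_cost(thetas):
    """<Z x Z x ... x Z> after RY(theta_i) on each qubit, which equals the product of cos(theta_i)."""
    return math.prod(math.cos(t) for t in thetas)


def grad_first_param(thetas):
    """Parameter-shift derivative of the global cost with respect to theta_0."""
    plus = [thetas[0] + math.pi / 2] + thetas[1:]
    minus = [thetas[0] - math.pi / 2] + thetas[1:]
    return (global_cost(plus) - global_cost(minus)) / 2


def gradient_variance(n_qubits, samples, rng):
    """Sample variance of dC/dtheta_0 over uniformly random initializations."""
    grads = []
    for _ in range(samples):
        thetas = [rng.uniform(0, 2 * math.pi) for _ in range(n_qubits)]
        grads.append(grad_first_param(thetas))
    mean = sum(grads) / samples
    return sum((g - mean) ** 2 for g in grads) / samples


rng = random.Random(0)
for n in (1, 2, 4, 8):
    var = gradient_variance(n, 20_000, rng)
    print(f"{n} qubits: Var[dC/dtheta_0] ~ {var:.5f}  (theory: {0.5 ** n:.5f})")
```

Resolving a gradient whose typical magnitude is about 2^(-n/2) takes on the order of 2^n measurement shots, which is what makes training impractical at scale.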

Limited Scale

Current processors remain limited in qubit count and quality. Meaningful QML advantage is likely to require significantly more capable hardware than what exists today. Coherence time limits circuit depth, constraining the complexity of quantum circuits that can be reliably executed.

Interpretability

Classical neural networks are already difficult to interpret. Quantum models are even more opaque, with quantum states existing in exponentially large spaces. This limits QML deployment in domains where model transparency is required, such as healthcare and finance.

What Comes Next

The field is moving from foundational research toward domain-specific demonstrations. Google’s Willow chip demonstrated below-threshold error correction in December 2024. Quantinuum demonstrated error-protected logical qubits beyond break-even in March 2026. These hardware milestones are expected to expand what QML algorithms can practically run. Timelines remain uncertain – what can be said with more confidence is the direction rather than the date.

On where QML is heading, TQI’s deep dive into what quantum AI actually means puts it well: quantum computing will not replace classical AI systems but may serve as a specialized co-processor for narrow tasks where quantum algorithms offer genuine advantages. AI already plays a role in calibrating quantum systems, mitigating errors, and optimizing circuits, while quantum computing offers potential speedups for specific AI bottlenecks like optimization and sampling. Each is making the other more capable.

Put another way, classical ML is a generalist: it handles pattern recognition, language, images, and predictions at scale, and it does all of this reliably on hardware that already exists in every data center on the planet. QML is a specialist being trained for a narrow set of problems it may eventually handle better than anyone else. Nobody hires a specialist to replace a generalist. They hire one because a specific job requires it.

The most likely near-term applications are in drug discovery, materials science, and financial optimization – areas where the underlying problem is either quantum mechanical in nature or involves search spaces that scale in ways classical hardware struggles with. Everything else stays classical, probably for a long time.

Frequently Asked Questions

Is quantum machine learning ready for production use?

Not yet in most domains. Current implementations are experimental or demonstrate advantage only in narrow scenarios. Hybrid quantum-classical approaches show promise in drug discovery and optimization. Broad production deployment, which will require fault-tolerant, error-corrected quantum computers, is likely 5 to 10 years away. Even so, early adopters in chemistry and finance are already experimenting.

What’s the difference between quantum machine learning and quantum algorithms?

Quantum algorithms are general computational methods that exploit quantum properties (for example, Shor’s and Grover’s algorithms). Quantum machine learning is a specific domain of quantum algorithms focused on machine learning tasks such as classification, regression, optimization, and pattern discovery. All QML methods rely on quantum algorithms, but not all quantum algorithms are machine learning algorithms.

Will quantum computers replace classical deep learning?

Unlikely for most applications. Classical deep learning works exceptionally well for many tasks (NLP, computer vision, recommendations). Quantum ML will likely excel in specific domains such as quantum simulation, optimization, and high-dimensional pattern matching. The most probable future is specialized tools: quantum for some tasks, classical for others, integrated into unified systems.

What’s the quantum advantage in machine learning?

Theoretical quantum advantage comes from superposition and entanglement, which allow quantum computers to explore exponentially large spaces. Practical advantage requires quantum computers that outperform classical computers on useful tasks, which has not yet been achieved broadly. Near-term advantage is expected in domain-specific problems where quantum properties naturally apply: molecular simulation, optimization, and high-dimensional classification.

Want to explore more? Check out TQI’s guide to 8 real quantum computing use cases across industries, or explore the full evolution of quantum computing technology covering key milestones and current engineering challenges.