Written by Elias Lehman

In Partnership with Quantum Computing at Berkeley

The noisy intermediate-scale quantum (NISQ) era is a term used to describe the current state of quantum computing, in which quantum computers can perform certain tasks that are beyond the capabilities of classical computers but are not yet able to perform a wide array of quantum algorithms with high precision and low error rates. Leaving the NISQ era will be marked by milestones in the form of research supporting quantum supremacy. Such claims tend to make headlines, so it’s no surprise that Google’s 2019 publication, one of the first papers claiming a quantum computer could solve a classically intractable problem, was met with skepticism.

The NISQ era is characterized by the fact that quantum computers have a limited number of qubits and a high error rate. As a result, NISQ quantum computers are not yet able to perform certain tasks, such as factoring large numbers or simulating quantum systems with high accuracy, that are believed to be within the capabilities of fully-fledged quantum computers. The NISQ era is considered to be a transitional stage in the development of quantum computing, as researchers and engineers continue to work on developing larger, more powerful quantum computers that can perform more complex tasks with greater accuracy. It is hoped that the NISQ era will eventually give way to a more applicable quantum computing era, in which quantum computers will be able to perform a wide range of tasks with high precision and low error rates.

Despite these limitations, NISQ quantum computers are already being used for a variety of applications, including machine learning, optimization, and chemistry. These applications are possible because NISQ quantum computers can perform certain tasks that are difficult or impossible for classical computers to perform efficiently, such as finding the ground state of a quantum system or searching through a large dataset for specific patterns. Furthermore, there are numerous approaches to optimizing NISQ-era quantum computers so that they can achieve tasks on the frontier of today’s technology. These optimization techniques work by addressing and reducing the high error rates that are native to quantum computing.

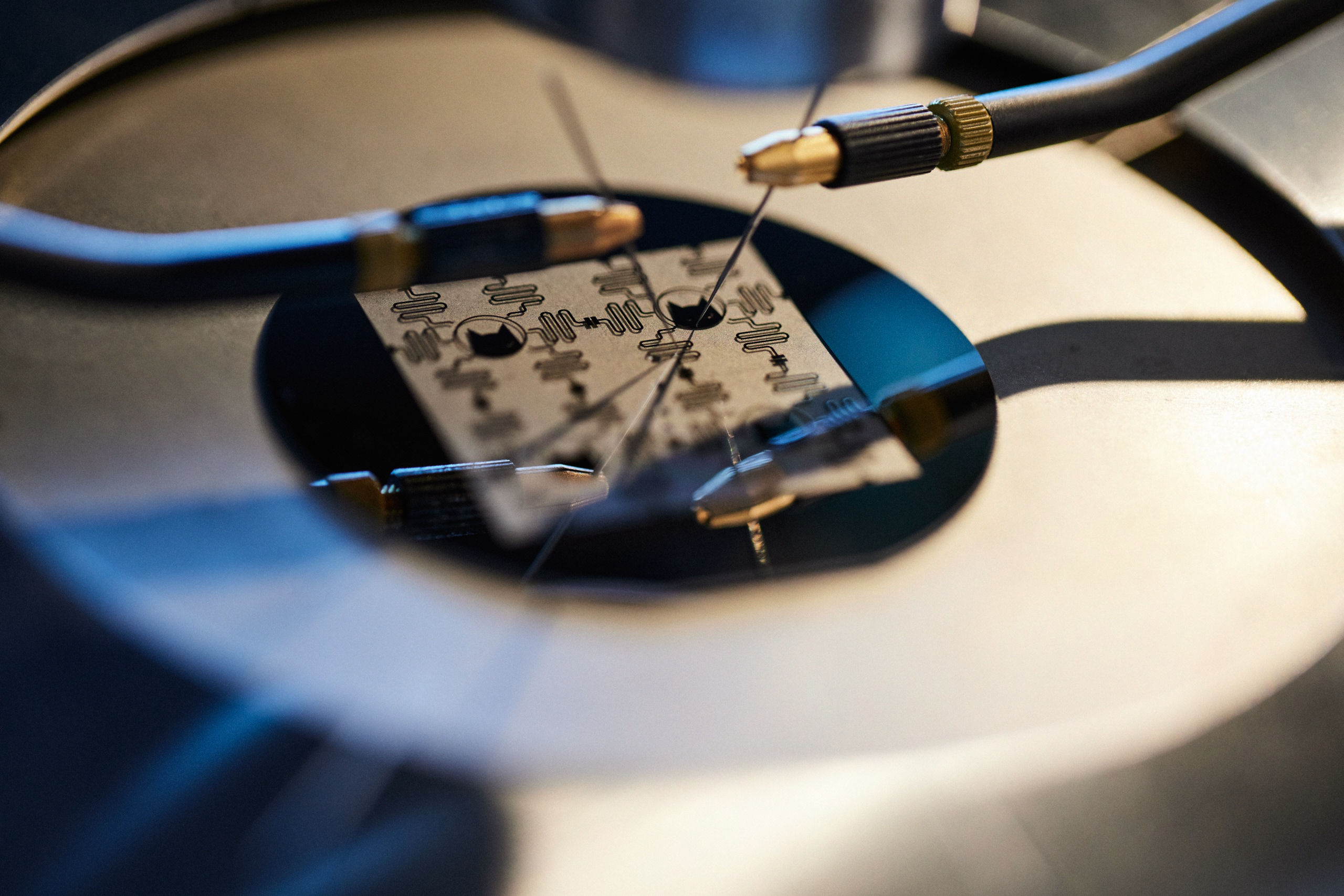

Image courtesy of Alice & Bob

SECTION 2

Error mitigation involves post-processing the results of a computation to reduce the impact of errors. This is typically done by applying statistical techniques to the results of the computation, such as extrapolation or bootstrapping, to reduce the noise and errors present in the data. Extrapolation involves fitting a model to the data and using it to estimate the true value of a quantity. Bootstrapping involves resampling the data and using the resampled data to estimate the uncertainty in the measurement.
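To make the bootstrapping idea concrete, here is a minimal sketch in Python. It assumes the measurement results are a list of single-shot outcomes (+1 or -1); the helper name `bootstrap_std_error` and the synthetic data are illustrative assumptions, not part of any particular quantum SDK.

```python
import numpy as np

def bootstrap_std_error(samples, n_resamples=2000, seed=0):
    """Estimate the mean and its uncertainty by resampling with replacement."""
    rng = np.random.default_rng(seed)
    samples = np.asarray(samples, dtype=float)
    n = samples.size
    # Each row of idx picks n shots (with replacement) from the original data.
    idx = rng.integers(0, n, size=(n_resamples, n))
    resampled_means = samples[idx].mean(axis=1)
    # The spread of the resampled means estimates the measurement uncertainty.
    return samples.mean(), resampled_means.std(ddof=1)

# Synthetic data (assumed for illustration): 1000 shots, 80% give +1, 20% give -1.
data_rng = np.random.default_rng(1)
shots = data_rng.choice([1.0, -1.0], size=1000, p=[0.8, 0.2])

mean, err = bootstrap_std_error(shots)
print(f"estimated expectation value: {mean:.3f} +/- {err:.3f}")
```

The resampling never touches the quantum device again; it only reuses the shots already collected, which is why it counts as post-processing.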

One modern extrapolation approach, called zero-noise extrapolation (ZNE), involves the purposeful application of noise to a quantum circuit. A researcher deliberately amplifies the noise, measures how the output changes as the noise level increases, and then works in reverse, extrapolating back to the zero-noise limit to remove the effect of the small amount of noise that is naturally present. This is like pushing the noise to an extreme to see the patterns it makes in the data, and expecting those patterns to hold at the minute level as well.
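The extrapolation step of ZNE can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the noise scale factors and expectation values are synthetic, the noise is assumed to shift the result linearly, and `zero_noise_extrapolate` is a hypothetical helper rather than a function from an existing library.

```python
import numpy as np

def zero_noise_extrapolate(scale_factors, expectation_values, degree=1):
    """Fit a polynomial to (noise scale, measured value) and evaluate it at zero noise."""
    coeffs = np.polyfit(scale_factors, expectation_values, deg=degree)
    return np.polyval(coeffs, 0.0)

# Scale factor 1 is the device's natural noise; 2 and 3 are deliberately amplified.
scales = np.array([1.0, 2.0, 3.0])
# Synthetic measurements (assumed): the ideal value 1.0 drops by 0.1 per unit of noise.
noisy = 1.0 - 0.1 * scales

estimate = zero_noise_extrapolate(scales, noisy)
print(f"measured at natural noise: {noisy[0]:.3f}")
print(f"zero-noise estimate:       {estimate:.3f}")
```

On real hardware the dependence on noise is rarely exactly linear, so the choice of fit model (linear, higher-degree polynomial, exponential) is itself a source of error in the estimate.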

Another way to mitigate errors is to rely on the resilience of classical computers. Hybrid quantum-classical algorithms combine quantum and classical computations to perform tasks that are beyond the capabilities of either approach alone. Hybrid algorithms can be used to perform error mitigation (EM) by using quantum computation to generate data, and classical computation to process and correct the errors present in the data.

More advanced extrapolation utilizes machine learning to learn the noise and error patterns present in quantum computation and uses this information to correct or mitigate the errors. This approach often uses the hybrid quantum-classical design. As you can imagine, a NISQ computer cannot accurately process a machine learning model to learn its noise, so instead, we rely on classical computers.
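As a rough illustration of learning a noise pattern classically, the sketch below fits a straight line that maps noisy results back to ideal ones, using training circuits whose ideal values are assumed to be known from classical simulation. The training data, the linear noise model, and the `mitigate` helper are all assumptions made for this example; real learning-based methods use richer models.

```python
import numpy as np

# Hypothetical training set: ideal values of circuits simple enough to simulate classically.
ideal_train = np.array([-1.0, -0.5, 0.0, 0.5, 1.0])
# Synthetic noisy results (assumed noise model: values shrink by 0.7 and shift by 0.05).
noisy_train = 0.7 * ideal_train + 0.05

# The classical computer learns the map noisy -> ideal with a least-squares fit.
slope, intercept = np.polyfit(noisy_train, ideal_train, deg=1)

def mitigate(noisy_value):
    """Apply the learned correction to a new noisy result."""
    return slope * noisy_value + intercept

# A new circuit whose ideal value (0.8) we pretend not to know.
noisy_target = 0.7 * 0.8 + 0.05
corrected = mitigate(noisy_target)
print(f"noisy: {noisy_target:.3f}, corrected: {corrected:.3f}")
```

The quantum computer only supplies the noisy numbers; all of the learning happens on the classical side, which matches the hybrid design described above.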

SECTION 3

While error mitigation is centered on post-processing, quantum error correction (QEC) involves actively detecting and correcting errors as they occur during the computation. This is achieved by encoding the state of the computation redundantly across several qubits and using additional qubits, known as “ancilla” qubits, to perform error detection: measuring the ancilla qubits reveals whether an error has occurred without reading out the encoded information itself, so that an appropriate correction operation can be applied. A related approach goes by the technical name “quantum subspace expansion”, although it relies on post-processing and is more often grouped with error mitigation.
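The detect-and-correct cycle can be illustrated with the three-bit repetition code, the simplest example of redundant encoding. The sketch below is a classical simulation that handles bit-flip errors only (an assumption made for simplicity; real QEC must also handle phase flips), and the function names are hypothetical.

```python
import numpy as np

# Syndrome (parity of bits 0,1 ; parity of bits 1,2) -> which bit to flip back.
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}

def run_round(logical_bit, p, rng):
    """Encode one bit, apply random bit flips, correct, and decode."""
    codeword = np.full(3, logical_bit, dtype=int)   # 0 -> 000, 1 -> 111
    codeword ^= (rng.random(3) < p).astype(int)     # each bit flips with probability p
    # Parity checks: on hardware these are read out through ancilla qubits.
    syndrome = (int(codeword[0] ^ codeword[1]), int(codeword[1] ^ codeword[2]))
    target = CORRECTION[syndrome]
    if target is not None:
        codeword[target] ^= 1                       # undo the most likely error
    return int(codeword[0])                         # after correction all bits agree

def logical_error_rate(p, trials=20000, seed=0):
    """Fraction of rounds in which the decoded bit differs from the encoded one."""
    rng = np.random.default_rng(seed)
    failures = sum(run_round(0, p, rng) != 0 for _ in range(trials))
    return failures / trials

p = 0.1
print(f"physical error rate:           {p}")
print(f"simulated logical error rate:  {logical_error_rate(p):.4f}")
print(f"expected value 3p^2 - 2p^3:    {3 * p**2 - 2 * p**3:.4f}")
```

The code fails only when two or more bits flip in the same round, which is why the logical error rate falls below the physical one when p is small.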

The surface code is a topological quantum error-correcting code that encodes quantum information in the state of a two-dimensional array of qubits. The qubits are arranged in a grid, with each qubit interacting with its neighbors through a series of controlled Z gates.

Additional methods include variational QEC and tensor networks. Do not be daunted by the jargon; these methods are more intuitive than they appear. For example, variational QEC uses a parametrized quantum circuit, that is, a quantum circuit whose gates can be adjusted, to learn the error patterns present in the computation. Adjusting the parameters to reduce noise while maintaining the state of the system is at the core of this technique.

Image courtesy of Alice & Bob

The surface code can detect and correct for a single quantum error that occurs within a certain region of the grid, known as a “face” or a “plaquette.”

The color code encodes quantum information in the state of a three-dimensional array of qubits. The qubits are arranged in a lattice, with each qubit interacting with its neighbors through a series of controlled Z gates. The color code can detect and correct for multiple quantum errors that occur within a certain region of the lattice, known as a “cell.”

While the surface code and the color code require several qubits to achieve the redundancy necessary for error correction, bosonic codes try to reduce errors by leveraging the properties of a single quantum system. They are based on quantum harmonic oscillators with an infinite number of energy levels. By encoding information in a combination of these energy levels, redundancy is possible with a single physical system. The most common bosonic codes are:

– GKP code: named after its inventors Gottesman-Kitaev-Preskill, this code corrects for both bit-flips and phase-flips by using grid states of the oscillator.

– Cat code: named after Schrödinger’s cat, this code can exponentially suppress bit-flip errors at the expense of a linear increase in phase-flip errors, as the number of photons in the oscillator increases.
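The cat-code trade-off can be visualized with a toy model. The sketch below only encodes the qualitative scaling stated above (exponential suppression of bit flips, linear growth of phase flips); the prefactors, the exponent, and the function name are illustrative assumptions in arbitrary units, not measured device values.

```python
import math

def cat_code_error_rates(mean_photons, base_bit_flip=1.0, exponent=2.0,
                         phase_flip_per_photon=1.0):
    """Toy scaling model: returns (bit_flip_rate, phase_flip_rate) in arbitrary units."""
    bit_flip = base_bit_flip * math.exp(-exponent * mean_photons)   # exponential suppression
    phase_flip = phase_flip_per_photon * mean_photons               # linear increase
    return bit_flip, phase_flip

for n in (1, 2, 4, 8):
    bit_flip, phase_flip = cat_code_error_rates(n)
    print(f"photons = {n}: bit-flip ~ {bit_flip:.2e}, phase-flip ~ {phase_flip:.1f}")
```

Because one error type shrinks much faster than the other grows, the remaining phase-flip errors can be handed to a simpler outer code.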

Bosonic codes alone cannot bring the error rates down to a useful level, so they are usually implemented in addition to error correction codes such as the surface code. But their natural robustness against errors greatly reduces the number of qubits required to reach useful logical error rates.

Both the surface code and the color code have been proposed as potential candidates for implementing fault-tolerant quantum computation, which would allow quantum computers to perform large-scale computations with high accuracy. However, both codes have their strengths and limitations, and further research is needed to determine their practicality for use in real-world quantum computing applications.

In summary, QEC and EM techniques have a wide range of applications in NISQ computing. Error mitigation can be used to improve the accuracy of measurements and simulations performed on NISQ computers, which are currently limited by noise and errors. Error correction, on the other hand, is essential for building large-scale, fault-tolerant quantum computers, which will be able to perform quantum computations with high reliability and accuracy. Despite recent progress, there are some significant barriers standing between today’s NISQ computers and tomorrow’s reliable quantum computers. For one, quantum errors can be difficult to detect. Errors are due to a variety of factors, such as noise in the quantum system or environmental interference. These errors often leave no trace except for their effect on the outcome of the computation.

QEC is resource-intensive because it typically requires a large number of qubits and gates to encode and decode the quantum information in a way that allows errors to be detected and corrected. Currently, one “logical” qubit must be composed of several physical qubits and their ancilla components, plus many operations. This can be a challenge, as current quantum computers are limited in the number of qubits they can manipulate. The operations required to encode and decode qubits also have limited gate fidelity (i.e., the accuracy of the gate) and are prone to errors of their own. Most QEC and EM techniques require a high degree of control over the quantum system, as even small errors in the control can result in errors in the computation.
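To give a sense of the qubit overhead, the sketch below counts the physical qubits behind one logical qubit for one common layout, the rotated surface code, where a code of odd distance d uses d² data qubits and d² − 1 ancilla qubits. The choice of this layout is an assumption for illustration (the article does not specify one), and the function name is hypothetical.

```python
def rotated_surface_code_qubits(distance):
    """Return (data, ancilla, total) physical qubits for one logical qubit."""
    if distance < 3 or distance % 2 == 0:
        raise ValueError("distance is assumed to be an odd integer >= 3")
    data = distance ** 2          # qubits that hold the encoded state
    ancilla = distance ** 2 - 1   # qubits used to measure the parity checks
    return data, ancilla, data + ancilla

for d in (3, 5, 7, 11):
    data, ancilla, total = rotated_surface_code_qubits(d)
    correctable = (d - 1) // 2    # number of arbitrary errors the code can correct
    print(f"d = {d:2d}: {data} data + {ancilla} ancilla = {total} qubits, "
          f"corrects up to {correctable} error(s)")
```

The total grows quadratically with the distance, which is why even modest protection for a single logical qubit already consumes a sizable fraction of a NISQ-era device.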

All of these techniques offer promising approaches for improving the accuracy and reliability of quantum computations. However, this is a rapidly developing field, and many of the approaches that have been proposed are still being researched and refined. As a result, it is difficult to predict which will ultimately prove to be the most effective for realizing fault-tolerant quantum computation.
