By Michael J. Biercuk and Alex Shih

The relevance of quantum computing will ultimately hinge on one thing: whether a customer achieves a positive ROI from a quantum solution over an alternative computing tool for their commercial problem.

Q-CTRL was thrilled to recently announce the first Practical Quantum Advantage in Materials Simulation, the first major step toward positive ROI. We showed how our software could push today’s machines to the limit, ultimately delivering a solution 3,000 times faster than the best industry-standard classical alternative. Building on this first finding, we are confident the technical community will rapidly expand the applicability of these results to a broad range of high-value problems as machines scale up in capability and performance.

Many end users and data center providers are excited to be early adopters, seeing a chance to accelerate solutions to the problems key to unlocking the future of AI, chemistry, and energy. They simply want machines that they can deploy easily, that interoperate with existing systems and compute clusters, and that solve the business-critical problems they or their customers have.

That’s not how the industry typically frames discussions of quantum computing. Turning inwards, we tend to highlight the R&D efforts underway in quantum error correction to address the central challenge of error in quantum hardware. Hardware errors act like a handbrake on the development of quantum computing, so out of scientific interest, or perhaps out of defensiveness, our community has focused its engagement on describing progress on technological features.

What’s the wrong way to sell a pen? To talk about its features and its capabilities.

What’s the right way? To understand and explain how the pen solves your client’s problems.

In our view, it’s time to embrace a customer-value-centric approach to selling the quantum pen. And based on the successes we’ve seen advancing the state-of-the-art, we know we can.

Starting with the stack

As amazing as it may seem, the entire dominant architectural framework used in designing and describing quantum computers is built around one thing: errors.

Errors are the biggest impediment to the broad adoption of quantum computers, a circumstance quite distinct from conventional computing. As our sector races to build machines and an ecosystem capable of delivering broad commercial advantage in the near term, dealing with error is without doubt the defining technical challenge for the community.

As far as we know, building large-scale quantum computers capable of tackling the broadest range of problems will require what’s referred to as “fault-tolerant” operation. Core to this is the notion of quantum error correction (QEC). In QEC, simply put, by spreading quantum information out over many redundant physical devices (a process known as encoding), it becomes possible to identify and fix hardware errors while preserving the underlying quantum information. The theory of fault-tolerance then tells us that if QEC is enacted in just the right way, it is possible to build arbitrarily large quantum computers.
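To make the encoding idea concrete, here is a minimal sketch using a classical three-bit repetition code. It is a toy analogue only, not how any quantum hardware or Q-CTRL software implements QEC: real codes must also handle phase errors and imperfect check measurements. The function names, the error probability `p`, and the trial count are all assumptions chosen for illustration.

```python
import random

def encode(bit: int) -> list[int]:
    """Spread one logical bit over three physical bits (the encoding step)."""
    return [bit, bit, bit]

def syndrome(block: list[int]) -> tuple[int, int]:
    """Parity checks between neighbours: they locate an error
    without reading out the encoded value itself."""
    return (block[0] ^ block[1], block[1] ^ block[2])

# Which physical bit to flip back for each syndrome (None = nothing detected).
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}

def correct(block: list[int]) -> list[int]:
    """Apply the single-bit fix suggested by the syndrome."""
    fixed = list(block)
    target = CORRECTION[syndrome(block)]
    if target is not None:
        fixed[target] ^= 1
    return fixed

def decode(block: list[int]) -> int:
    """Recover the logical bit by majority vote."""
    return int(sum(block) >= 2)

def logical_error_rate(p: float, trials: int = 20000, seed: int = 0) -> float:
    """Estimate how often an encoded 0 is read back as 1 when each
    physical bit flips independently with probability p."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(trials):
        noisy = [b ^ (rng.random() < p) for b in encode(0)]
        failures += decode(correct(noisy))
    return failures / trials
```

In this toy model a single flipped bit is always repaired, while two or more simultaneous flips defeat the code, so the encoded bit fails with probability 3p²(1−p) + p³. That is well below p when p is small, which is the basic bargain of redundancy. The sketch assumes the parity checks themselves are perfect; on real hardware they are not, and that overhead is a large part of why QEC must be enacted carefully to pay off.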

There remains a lot of work to do in our community to make this a reality. The resource inefficiency of QEC makes it a net negative today, generally introducing more errors than it fixes, despite incredible scientific progress validating that it really can work. At Q-CTRL, we therefore often focus on how additional supporting concepts in quantum error suppression, error-robust compilers, and quantum firmware (packaged as performance-management software) can both augment the efficiency of QEC and deliver better machine performance before QEC arrives. It was error suppression on its own that delivered the first Practical Quantum Advantage, after all.

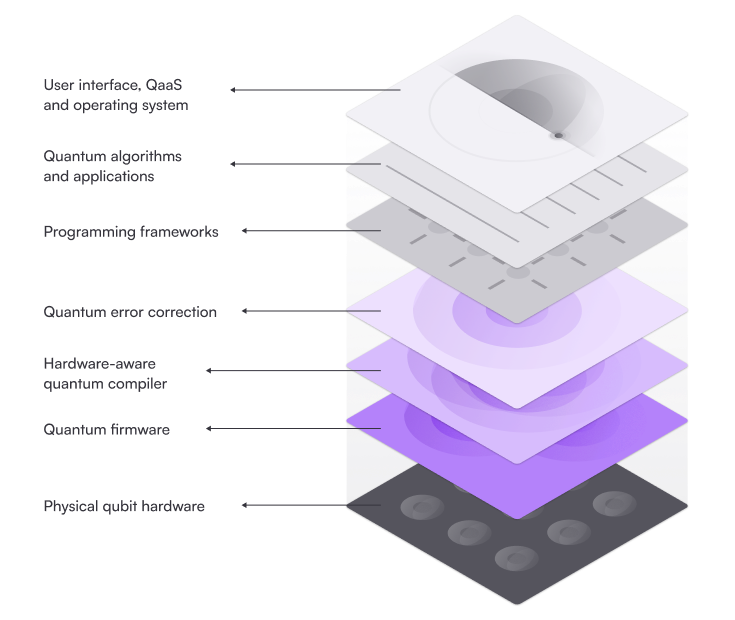

The quantum computing stack is a convenient device used to describe the various layers of hardware and software used to make stable, performant quantum computers. It’s organized around what’s required to achieve fault tolerance, as well as the translation between different effective programming languages (algorithms, quantum circuits, and gate-level machine language, for example). The stack helps vendors describe their own relevance in solving key sectoral problems, and helps investors and analysts survey and organize their view of the ecosystem.

All of that said, we have to ask ourselves a hard question: How can we communicate why any of this matters to customers beyond our narrow circle? Going further, should we talk about it at all?

A shift from technology to customer value

There’s no question that it’s essential to deal with errors in quantum computers—doing so in clever ways was central to our achievement of Practical Quantum Advantage. Nor is there debate that QEC and other supporting technologies will be critical long-term.

When the field was very new, and we primarily engaged with other quantum experts, it was sensible to put this internal quantum computer architecture front and center, and advocate that progress across the layers combined to enable fault-tolerant operation.

Things are now changing with the first Practical Quantum Advantage in hand.

Thanks to both hardware advances and user-friendly, error-reducing infrastructure software, we will be able to achieve a runtime speedup on narrow but high-value problems with a small quantum computer, before true fault tolerance arrives. Accordingly, end users are eagerly embracing the opportunity to be early adopters and are making strategic decisions on future integration of quantum computing based on the results they’re seeing today.

Hiroshi Yamauchi, a Senior Manager at Softbank Corporation, recently said, “The performance [of IBM hardware augmented with Q-CTRL software] gave us confidence that in the coming years, quantum will play a crucial role in our commercial operations.”

This is a serious statement that strategic investments are being planned today based not on technical features, but rather on the quality of outcomes achieved.

Softbank’s view is broadly representative of the customers we’ve engaged with: they want the hardware to deliver value for their needs, and they are far less interested in exploring the features used to get there. Our performance management software matched this profile perfectly, enabling their team to focus on the applications that matter to their roadmap.

Goodbye quantum stack, hello deployable solutions

QEC, fault tolerance, and the visually appealing quantum computing stack have dominated public narratives, but they are really the ultimate insider topics.

Jim Barksdale, former CEO of Netscape, famously said, “There are only two ways to make money in business: bundling and unbundling.” That insight has played out repeatedly across technology cycles, and quantum computing is no exception.

We are now entering a phase where bundling matters again, where integrated, end-to-end solutions are required to deliver meaningful quantum adoption and commercial traction. End users want to be able to install, access, and use quantum computers in the settings that matter to them: on the cloud, in data centers, and in HPC facilities.

So from now on, we are making a strategic decision to prioritize speaking about deploying large-scale quantum computing (the desired end state) rather than building fault-tolerant quantum computers (a technical means to that end). We’ll be retiring the quantum computing stack in most of our externally facing communications in favor of architectural diagrams that highlight how quantum computing can be a useful computational tool in a user’s workflows. We will always preserve deeply technical resources to engage our peers, but those are for insiders, not end users.

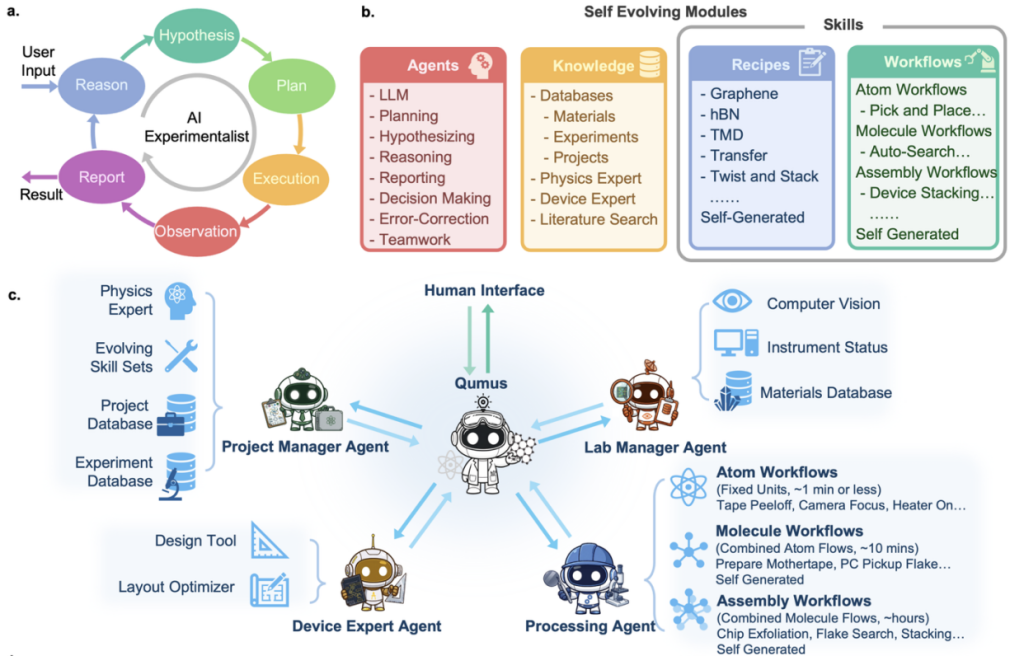

It’s been exciting to see our partners in the ecosystem going in a similar direction. IBM’s recently published reference architecture for a quantum-centric supercomputer is a great example. NVIDIA NVQLink provides low-latency interconnects between CPUs, GPUs, and QPUs to facilitate hybrid workflows. And IonQ’s recent Walking Cat Architecture provides another path to unlocking larger, more complex applications and workloads.

At Q-CTRL, we aren’t just talking—we’re making it happen.

In partnership with major players like NVIDIA, IBM, Quantware, Equal1, and Qblox, we’re powering a future in which fully abstracted quantum processors sit alongside CPUs and GPUs in software-defined quantum data centers. Workloads are partitioned between them and executed invisibly by infrastructure software in a way familiar to today’s CIOs, CTOs, and engineers. Customer value is prioritized over exposing the quantum details.

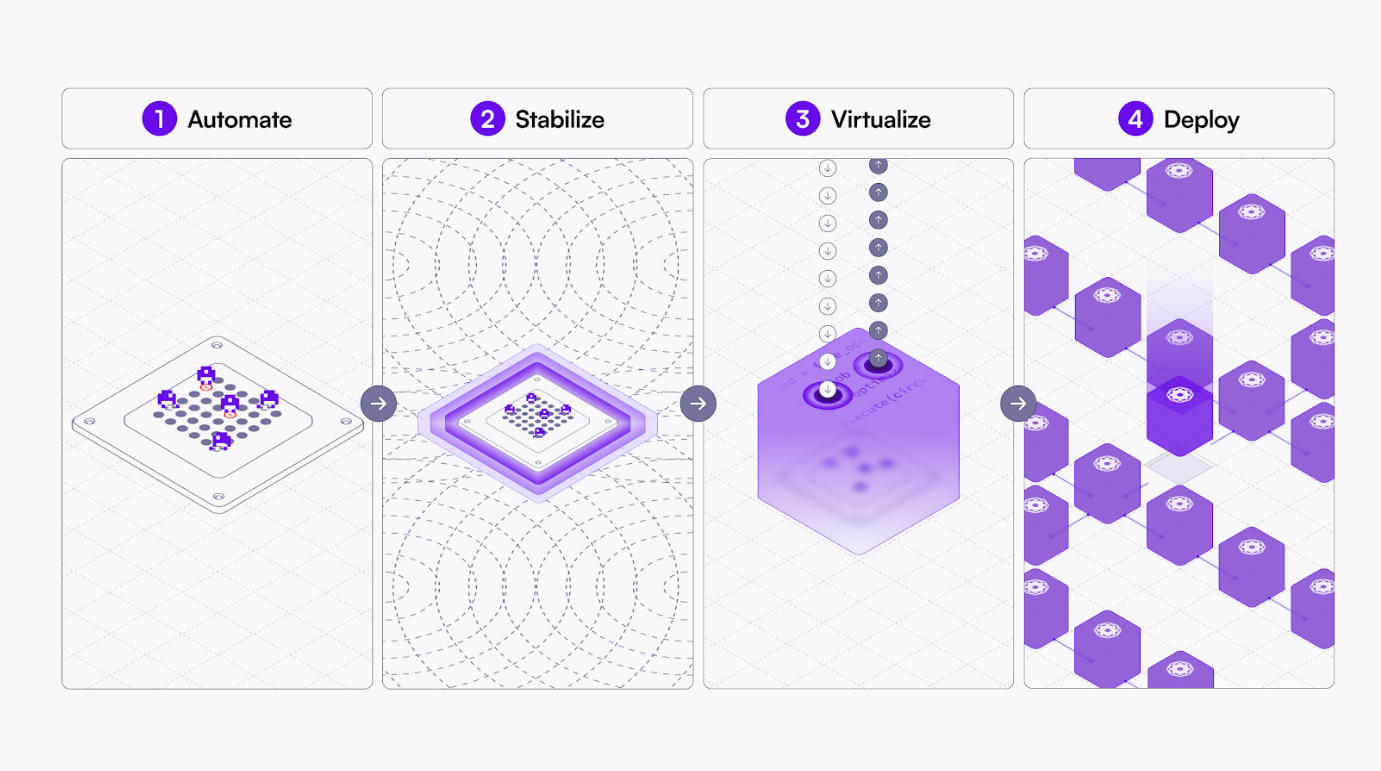

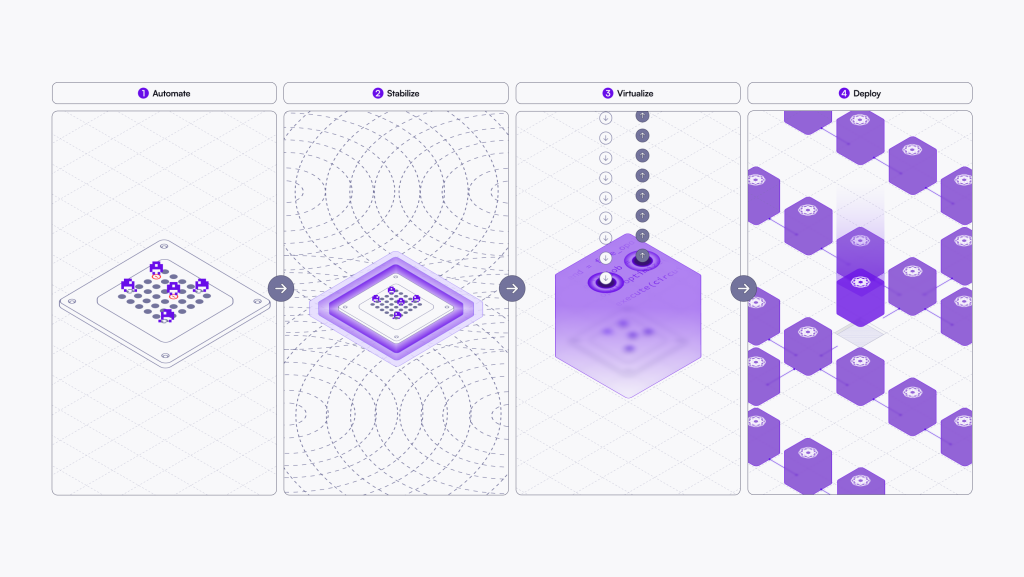

So how do we do it? With NVIDIA, we recently introduced “Quantum Containerization”, where our infrastructure software makes it possible to virtualize a GPU-accelerated quantum processor into a modular, deployable object. This removes one of the biggest challenges to large-scale quantum computing deployment—the fact that the hardware tends to be custom, and so are the interfaces to local GPUs, quantum controllers, and the like. With a Quantum Container, every QPU resource is packaged the same way for the end user and data center operator, abstracting away the details.
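The abstraction idea can be sketched in a few lines of code. The example below is purely illustrative and is not the Q-CTRL or NVIDIA API: every class, field, and function name here (`ComputeResource`, `QuantumContainer`, `vendor_interface`, `dispatch`) is hypothetical. It only shows the general pattern of giving a QPU the same interface as any other compute resource so that a scheduler never touches vendor-specific details.

```python
from dataclasses import dataclass
from typing import Protocol

class ComputeResource(Protocol):
    """Hypothetical uniform interface seen by the scheduler."""
    name: str
    def run(self, workload: str) -> str: ...

@dataclass
class GpuNode:
    name: str
    def run(self, workload: str) -> str:
        return f"{self.name} ran {workload} on GPU"

@dataclass
class QuantumContainer:
    """Hypothetical wrapper around a QPU: the custom hardware
    interface is stored internally and never exposed to callers."""
    name: str
    vendor_interface: str
    def run(self, workload: str) -> str:
        return f"{self.name} ran {workload} on QPU"

def dispatch(workload: str, needs_qpu: bool, pool: list[ComputeResource]) -> str:
    """Send the workload to the first resource of the requested kind."""
    for resource in pool:
        if isinstance(resource, QuantumContainer) == needs_qpu:
            return resource.run(workload)
    raise LookupError("no suitable resource in pool")

pool = [GpuNode("gpu0"), QuantumContainer("qpu0", vendor_interface="vendor-A")]
print(dispatch("materials-simulation", needs_qpu=True, pool=pool))
```

The caller passes a workload and a requirement, and the same `run` call works whether the target is a GPU node or a wrapped QPU. Swapping in a QPU from a different vendor would change only the wrapper's internals, not the calling code.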

We even make it easy to build everything inside the quantum container. The recently announced commercially reproducible, fully validated Quantum Utility Block (QUB) architecture provides a GPU-accelerated QPU and controller, powered by a combination of advanced software technologies for autonomy, performance management, and virtualization. Boulder Opal provides an automated bootloader and maintenance capability, making the QUB function like any computer that turns on and stays on. Fire Opal provides the operating logic that optimizes, schedules, and executes any application run on it for peak performance.

Now it’s simple for data center operators to deploy quantum computing with the QUB, and beyond that, end users don’t even need to know—to them this is all abstracted inside a Quantum Container.

Their engagement starts with Black Opal, which provides the instruction manual and training to end users looking to use these new machines effectively. From there, they only need to run computational workloads like they always did, with virtualized QPUs giving massive boosts in capability.

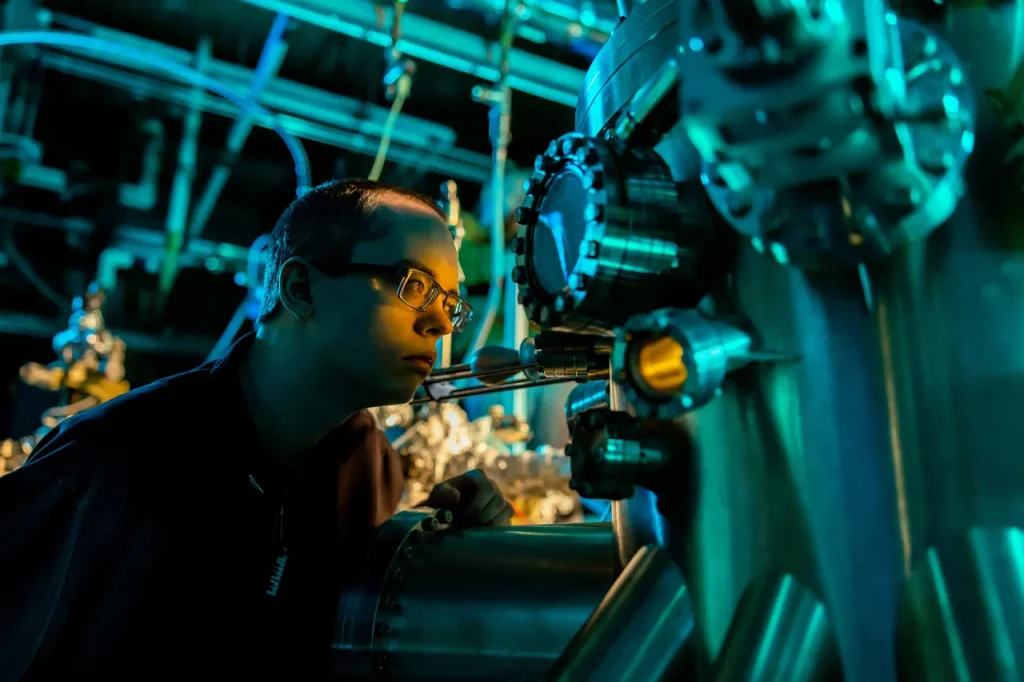

The first QUB is already live at Elevate Quantum’s Q-PAC facility! The system advanced from concept to full operation in just five months and at a fraction of the cost of closed, full-stack systems.

None of this diminishes the importance of various innovations across the quantum computing stack. In our view, our reprioritization correctly frames these as technological features for insiders, rather than concepts relevant to customers.

In the quantum era, hardware management is expected to depend increasingly on enterprise-grade, systems-level software as businesses seek greater efficiency, scalability, and return on investment.

DRAM refresh made computer memory cheap and useful by autonomously stabilizing imperfect and error-prone hardware using software. Outside of the memory industry, few think about it now because it’s so effective.

The vision of truly abstracted quantum systems “invisibly” delivering value to end users is only achievable if we similarly build on the right foundation—technology that actually works to autonomously stabilize hardware and address errors. No level of bundling or orchestration is relevant without the unique capabilities, validated performance, and demonstrated Practical Quantum Advantage delivered by Q-CTRL’s core error-reducing technologies. As in DRAM, performance enables abstraction.

We’ll continue advancing the state-of-the-art in AI-driven autonomy for hardware maintenance, error suppression, and QEC.

Fortunately for our customers, it’s becoming less and less important to know that any of this is even happening.