Insider Brief

- Quantum computing in 2026 sits between real technical progress and exaggerated public expectations, with neither extreme narrative fully accurate.

- Recent advances in error correction and reduced qubit requirements show measurable progress, but practical, large-scale applications remain limited.

- Most misconceptions come from misinterpreting research milestones, making careful reading essential to distinguish real progress from overstatement.

Most readers who encounter quantum computing through headlines come away with one of two impressions. Either a machine somewhere is about to break every password on the internet, or the whole thing is overhyped nonsense that will never leave the lab. Both views are wrong, and the gap between them is where most public confusion about quantum computing lives.

Quantum is not something most people encounter in daily life. The research happens in dilution refrigerators colder than deep space, or in vacuum chambers holding a handful of trapped ions. That distance from everyday experience is part of why the technology attracts such extreme coverage. It is both genuinely promising and genuinely nowhere close to doing what some press releases imply it already does.

This piece is about what the technology actually does in 2026, which parts of the common narrative hold up, and which do not.

Why Quantum Attracts So Much Attention

The core reason is a specific set of problems where quantum hardware could, in principle, do things classical computers cannot do efficiently. Molecular simulation is the cleanest example. Modeling how atoms and electrons interact in a new drug molecule scales badly on classical hardware because quantum mechanics is the actual physics involved. A quantum computer works in the same mathematical language, which is why physicist Richard Feynman proposed the idea in 1981 and why John Preskill of Caltech wrote in 2018 that quantum computers are “just beginning to reach the stage where [they] can provide useful solutions to hard quantum problems.”

That potential is why governments and investors are spending heavily. Security Boulevard’s 2025 Quantum Outlook, cited in industry coverage, estimated more than $36 billion in public and private quantum investment, with over 70 startups working on software, compilers, and error correction alone. Riverlane’s 2025 year-end analysis notes multi-billion-dollar valuations for Quantinuum, PsiQuantum, SandboxAQ, and IQM alongside the publicly traded IonQ, Rigetti, and D-Wave. The United States, China, the EU, Germany, the UK, and Japan all treat quantum as a strategic technology.

This funding environment contributes to the hype cycle. With billions tied to future milestones, incremental results are often presented as major breakthroughs.

When Research Progress Is Overstated

The overstatement follows a consistent pattern – a lab publishes a paper showing a specific hardware platform running a specific benchmark under specific conditions. By the time the result reaches a general audience, the caveats are gone and the headline reads something like “quantum computer solves in seconds what would take supercomputers millennia.”

Google’s 2019 Sycamore experiment is the case study most quantum journalists return to. Google reported that its 53-qubit processor completed a sampling task in 200 seconds that it estimated would take the world’s fastest supercomputer 10,000 years. IBM disputed the classical estimate within weeks. By 2022, researchers at the Chinese Academy of Sciences showed the same task could be done in hours on GPUs.

The inverse happens too. Results that actually move the field forward often get ignored because they do not make for good headlines. Oxford Ionics reaching 99.99% two-qubit gate fidelity in 2025, a number that matters enormously for whether error correction will work at scale, got a fraction of the attention a flashier qubit-count record would have earned.
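To see why an extra "nine" of gate fidelity matters, a rough sketch helps. The model below assumes every two-qubit gate fails independently with probability (1 - fidelity), which is a simplification that real hardware does not strictly obey. The function name and the gate counts are illustrative choices, not figures from Oxford Ionics.

```python
# Simplified model (an assumption, not a hardware specification):
# each gate fails independently, so a circuit of n gates runs without
# any gate error with probability fidelity ** n.

def error_free_probability(fidelity: float, num_gates: int) -> float:
    """Probability that none of num_gates independent gates fails."""
    if not 0.0 <= fidelity <= 1.0:
        raise ValueError("fidelity must be between 0 and 1")
    if num_gates < 0:
        raise ValueError("num_gates must be non-negative")
    return fidelity ** num_gates


if __name__ == "__main__":
    # Compare 99.9% and 99.99% fidelity over hypothetical circuit sizes.
    for fidelity in (0.999, 0.9999):
        for num_gates in (100, 1_000, 10_000):
            p = error_free_probability(fidelity, num_gates)
            print(f"fidelity={fidelity:.2%} gates={num_gates:>6} error-free={p:.3f}")
```

Under this model, a 1,000-gate circuit runs cleanly about 37% of the time at 99.9% fidelity and about 90% of the time at 99.99%. The gap widens quickly as circuits get longer.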

What Counts as a Myth, and What Does Not

Before addressing specific myths, it is worth separating three categories of claims that get thrown together in quantum coverage.

Some claims are straightforwardly false. Quantum computers are not going to break consumer banking apps tomorrow. Some claims are open questions where the honest answer is that nobody knows yet: “when a useful fault-tolerant quantum computer will arrive” is one of these. And some claims are framing errors, where a real capability is described in a way that implies more than it delivers.

Calling all three “myths” is part of what makes public discussion confused. One is wrong, one is genuinely unresolved, and one is technically correct but misleading. They need different responses.

The Common Myths

Myth 1 – Quantum supremacy means quantum computers are ready for real-world use

Quantum supremacy, a term coined by John Preskill in 2012, refers to a quantum system performing a task that a classical computer cannot practically replicate. It does not imply practical usefulness.

As Mikhail Lukin noted following Google’s Sycamore experiment, the task used to demonstrate supremacy was not designed for real-world applications. It was a controlled benchmark.

The more relevant milestone is quantum advantage, where a quantum system solves a problem with practical value. That threshold has not yet been clearly demonstrated in a way that is broadly accepted across the field.

IBM has outlined a roadmap targeting quantum advantage by the end of 2026, but as of mid-2026, there is no widely confirmed example of quantum systems delivering consistent, real-world performance gains over classical alternatives.

Myth 2 – Quantum computers will replace classical systems

They will not, and no serious researcher claims they will. Quantum computers are specialized accelerators for a narrow set of problem types, mainly the simulation of quantum systems and problems with known algorithmic speedups, such as factoring with Shor’s algorithm and search with Grover’s algorithm. Everything else, from databases to AI training, will likely stay on classical hardware. The architecture people in the field describe is hybrid – classical HPC doing most of the work, with a quantum processor handling subroutines where it offers a real speedup.
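The size of these speedups varies by algorithm. Grover's algorithm, for instance, offers a quadratic rather than an exponential improvement for unstructured search. The sketch below compares idealized query counts for finding a single marked item. It ignores gate overhead, error correction, and data loading, and the function names are illustrative.

```python
import math


def classical_expected_queries(n_items: int) -> float:
    """Average lookups to find one marked item by checking items one by one."""
    return (n_items + 1) / 2


def grover_iterations(n_items: int) -> int:
    """Approximate Grover iteration count, roughly (pi / 4) * sqrt(N)."""
    return math.floor(math.pi / 4 * math.sqrt(n_items))


if __name__ == "__main__":
    for n_items in (1_000_000, 1_000_000_000_000):
        classical = classical_expected_queries(n_items)
        quantum = grover_iterations(n_items)
        print(f"N={n_items:,}: classical ~{classical:,.0f} queries, Grover ~{quantum:,} iterations")
```

A quadratic gain on an idealized count is useful, but it is far from a reason to move general workloads off classical hardware.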

Myth 3 – Practical quantum applications are just around the corner

This belongs in the “nobody knows when” category rather than the “false” category. The realistic milestone is fault-tolerant quantum computing, where error correction lets a machine run long, reliable computations.

IBM’s published roadmap schedules its Starling system for 2029, with 200 logical qubits and 100 million gates. In April 2026, the US Department of Energy announced a Grand Challenge targeting a first fault-tolerant quantum computer by 2028. Riverlane’s 2025 survey of over 300 quantum professionals also found 2028 emerging as an informal industry deadline for meaningful fault-tolerant integration. Credible people are indeed working toward specific dates, but nobody is promising anything.

Myth 4 – Quantum will instantly break modern encryption

This compresses several distinct questions into one scary statement. A cryptographically relevant quantum computer does not exist today, and when it arrives, it will need a very specific combination of logical qubits, error rates, and runtime that no current machine comes close to.

What has changed is the estimated resource cost. Earlier work by Craig Gidney and Martin Ekerå (2019) placed RSA-2048 factoring at roughly 20 million physical qubits. In February 2026, Iceberg Quantum unveiled its Pinnacle architecture, claiming under 100,000 physical qubits would be enough. A 2026 Caltech and Oratomic paper proposed a neutral atom architecture that could do it with 10,000 to 20,000 qubits.

Additional research from Google Quantum AI has further contributed to this trend. A 2026 paper examining elliptic curve cryptography estimated that attacks on commonly used curves such as secp256k1 could be carried out with fewer than 500,000 physical qubits under certain assumptions.

These are theoretical proposals, not deployed systems, but the numbers are moving in the wrong direction for anyone planning to rely on RSA or elliptic curve cryptography long-term.
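The scale of the shift in the RSA-2048 estimates is easier to see as ratios. The sketch below uses only the figures quoted above and records "under 100,000" and the 10,000 to 20,000 range at their upper ends, so the printed reductions are lower bounds. The variable and function names are illustrative.

```python
# Physical-qubit estimates for factoring RSA-2048, as quoted in the article.
# Upper ends of the quoted figures are used, so reductions are conservative.
BASELINE_2019 = 20_000_000  # Gidney and Ekerå (2019)

LATER_ESTIMATES = {
    "Iceberg Quantum Pinnacle (2026)": 100_000,
    "Caltech and Oratomic neutral atom proposal (2026)": 20_000,
}


def reduction_factor(baseline: int, estimate: int) -> float:
    """How many times smaller the new estimate is than the baseline."""
    if estimate <= 0:
        raise ValueError("estimate must be positive")
    return baseline / estimate


if __name__ == "__main__":
    for source, qubits in LATER_ESTIMATES.items():
        factor = reduction_factor(BASELINE_2019, qubits)
        print(f"{source}: {qubits:,} qubits, at least {factor:,.0f}x fewer than in 2019")
```

On these figures, the claimed requirements are at least 200 and 1,000 times smaller than the 2019 estimate. The proposals rest on different hardware assumptions, so the ratios indicate a trend rather than a like-for-like comparison.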

Myth 5 – Quantum computing is only for physicists or big tech

Cloud quantum access changed this years ago. IBM Quantum, AWS Braket, Azure Quantum, and Google Quantum AI all provide public API access to real quantum hardware. Frameworks like Qiskit, Cirq, and PennyLane are open-source. A small team with a well-defined problem can run experiments on a 127-qubit processor without owning hardware. The hard part is building the expertise to use it meaningfully, and that barrier is coming down through university programs and industry training.

Myth 6 – There is no practical use for quantum computers yet

This is an overcorrection. Early-stage quantum systems have already demonstrated value in limited domains. Quantum chemistry simulations on current noisy intermediate-scale quantum (NISQ) devices have been used to study small molecular systems. Research from IBM has shown that quantum processors can model specific chemical interactions that are difficult to simulate classically at similar scales.

In parallel, quantum sensing, a related but distinct field, is already deployed. Technologies such as atomic magnetometers and NV-diamond sensors are used in applications ranging from medical imaging to navigation. Companies such as Qnami and SBQuantum are commercializing these systems.

What does not exist yet is a quantum application generating commercial revenue at a scale that would justify the hardware investment. Demonstrated scientific usefulness in narrow domains does not yet translate into broad economic impact.

Myth 7 – Quantum computing is only viable for governments and big business

Large-scale funding is concentrated in government programs and major technology firms, particularly on the hardware side. Building and operating quantum processors requires significant capital investment, specialized infrastructure, and long development timelines.

However, the broader ecosystem is more distributed. Startups focus on software, algorithms, and enabling technologies. University spinouts commercialize research outputs. Mid-sized companies develop subsystems such as cryogenic cooling, control electronics, and error correction tools.

Industry mapping efforts, including coverage by The Quantum Insider, show a layered ecosystem spanning hardware, software, sensing, and communications. The field remains capital-intensive at the infrastructure level, but participation is not limited to large institutions.

What Progress Actually Looks Like

Tracking the field means watching the metrics that matter instead of qubit counts. A February 2026 analysis published by Quantum in South Carolina put it directly: “If a breakthrough announcement highlights a new 1,000-qubit processor, the relevant question in 2026 is not the raw number, but how many logical qubits can be constructed and maintained below fault-tolerance thresholds?”

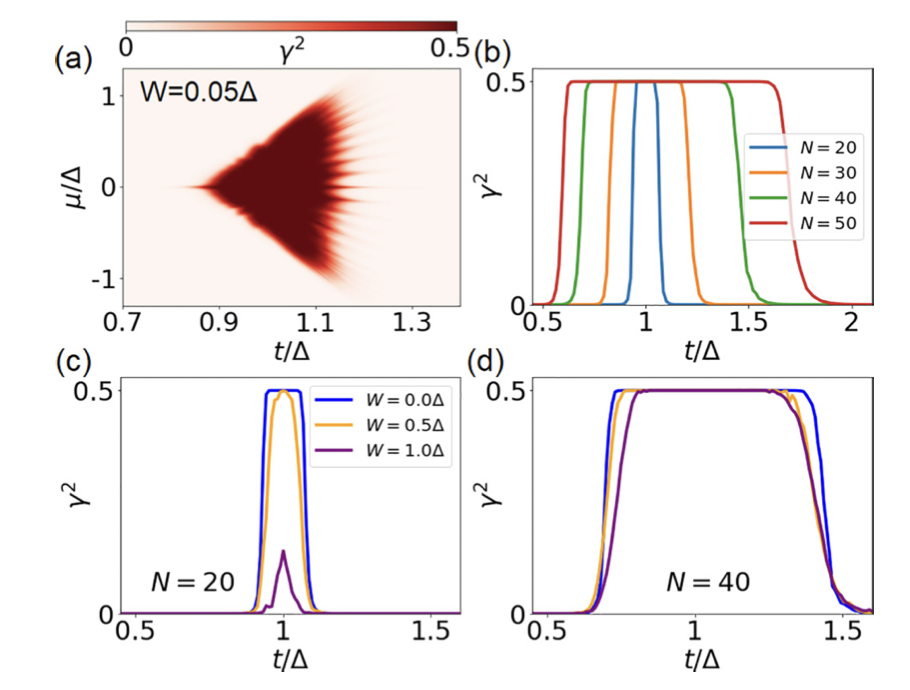

Recent examples make the point. In March 2026, Quantinuum demonstrated quantum computations using up to 94 error-protected logical qubits on a trapped-ion processor, achieving “beyond break-even” performance. The work is partially fault-tolerant rather than fully so, relies on postselection, and is a step in a longer sequence rather than an endpoint.

IBM’s Quantum Loon processor, unveiled in November 2025, demonstrated all hardware elements needed for fault-tolerant quantum computing on a single chip. Similarly, Google’s Willow experiment in December 2024 showed below-threshold quantum error correction, meaning adding more qubits improved rather than degraded the computation. None of these is a final answer. Each is a rung on the ladder.
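What "below threshold" means can be sketched with a textbook heuristic for the surface code, where the logical error rate scales roughly as A * (p / p_th) ** ((d + 1) / 2) for code distance d. The prefactor of 0.1 and the threshold of 1% used below are illustrative assumptions, not measurements from Willow, Loon, or any other device. The count of 2 * d ** 2 - 1 physical qubits per logical qubit assumes a rotated surface code patch.

```python
def logical_error_rate(p_physical: float, distance: int,
                       p_threshold: float = 0.01, prefactor: float = 0.1) -> float:
    """Heuristic logical error rate per round; defaults are assumed values."""
    return prefactor * (p_physical / p_threshold) ** ((distance + 1) / 2)


def physical_qubits_per_logical(distance: int) -> int:
    """Data plus measurement qubits in one rotated surface code patch."""
    return 2 * distance ** 2 - 1


if __name__ == "__main__":
    # Hypothetical physical error rates: one below and one above threshold.
    for p_physical in (0.001, 0.02):
        print(f"physical error rate = {p_physical}")
        for distance in (3, 5, 7):
            rate = logical_error_rate(p_physical, distance)
            qubits = physical_qubits_per_logical(distance)
            print(f"  d={distance}: {qubits:>3} physical qubits, logical error ~{rate:.1e}")
```

Below the assumed threshold, each step up in distance cuts the logical error rate by a factor of ten in this model. Above it, the same extra qubits make things worse, and the heuristic itself stops being reliable. This is why a logical qubit count paired with an error rate says more than a raw qubit count.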

How to Read Quantum Announcements

Public announcements often mix demonstrated results with projected milestones. Interpreting them requires separating what has been achieved from what is planned.

A useful starting point is independent industry analysis. Coverage from The Quantum Insider and similar research-focused outlets emphasizes verified progress and technical context rather than headline claims.

When evaluating an announcement, several checks help:

- Whether the result is tied to a specific benchmark or a general capability

- Whether the outcome depends on postselection or discarded runs

- The number of logical qubits achieved and the associated error rates

- Whether a roadmap milestone is demonstrated or projected
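For readers who track many announcements, the checks above can be kept as a small structured record. The sketch below is one possible way to do that. The class, field names, and example values are all hypothetical and do not describe any real announcement.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Announcement:
    """Hypothetical record of one quantum announcement, filled in by a reader."""
    benchmark_specific: bool              # tied to one benchmark, not a general capability
    uses_postselection: bool              # depends on postselection or discarded runs
    logical_qubits: Optional[int]         # None if not reported
    logical_error_rate: Optional[float]   # None if not reported
    demonstrated: bool                    # False if the milestone is only projected


def caveats(a: Announcement) -> List[str]:
    """Return the caveats a careful reader should keep in mind."""
    notes = []
    if a.benchmark_specific:
        notes.append("Result is tied to a specific benchmark.")
    if a.uses_postselection:
        notes.append("Outcome depends on postselection or discarded runs.")
    if a.logical_qubits is None or a.logical_error_rate is None:
        notes.append("Logical qubit count or error rate is not reported.")
    if not a.demonstrated:
        notes.append("Milestone is projected, not demonstrated.")
    return notes


if __name__ == "__main__":
    # Made-up example: a roadmap claim with no logical-qubit data.
    example = Announcement(True, False, None, None, False)
    for note in caveats(example):
        print("-", note)
```

An empty list does not make a result important. It only means none of these four caveats applies.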

Reading the underlying paper provides more clarity than relying on summaries. Tracking primary sources and researchers in the field also helps maintain a grounded view of ongoing developments.

Where the Field Stands Today

Quantum computing in 2026 is simultaneously more impressive and less immediately useful than most coverage suggests. The progress on error correction is real and historically significant. The longer-horizon trajectories, pointing toward useful fault-tolerant systems by the end of the decade or shortly after, are becoming credible.

Preskill, writing in 2018, put the situation well: “Because quantum computing technology is so different from the information technology we use now, we have only a very limited ability to glimpse its future applications, or to project when these applications will come to fruition.”

Most of the noise in public discussion of quantum comes from treating an open engineering problem as either already solved or permanently stuck. It is neither. Progress is being made. The myths and the fake news are both still out there, and anyone stepping into the field for the first time will run into both. The goal is not to guess which one wins. It is to read carefully enough to tell them apart.