Insider Brief

- Researchers demonstrated a scalable method to simulate quantum error correction at near-experimental scale using classical cloud computing, addressing a key bottleneck in building reliable quantum computers.

- The approach uses a Monte Carlo–based digital twin to model a 97-qubit surface code system, capturing complex and correlated error patterns that simpler simulations miss.

- The results suggest more realistic simulations could improve error correction design and hardware-software co-development, though further work is needed to model full systems over many cycles and match specific devices.

In a week that has seen a parade of error-correction advances, a team of quantum computing researchers says it has shown a practical way to simulate large, error-prone quantum systems on classical computers, an advance the scientists suggest could speed the path toward reliable quantum machines.

A collaboration involving Amazon Web Services, Quantum Elements, the University of Southern California, and Harvard University reported that it can model a key quantum error correction system at near-experimental scale using cloud-based high-performance computing. The work, discussed in an AWS technical blog, addresses the problem of understanding and correcting errors that accumulate as quantum systems grow, considered one of quantum computing’s most restrictive bottlenecks.

Quantum computers rely on qubits, which are fragile and prone to errors from environmental noise, imperfect control and interactions between qubits. To make them useful, researchers must use quantum error correction, a process that encodes a single “logical” qubit across many physical qubits and continuously checks for mistakes. The challenge is determining how large and how precise these systems must be to reliably perform computations.

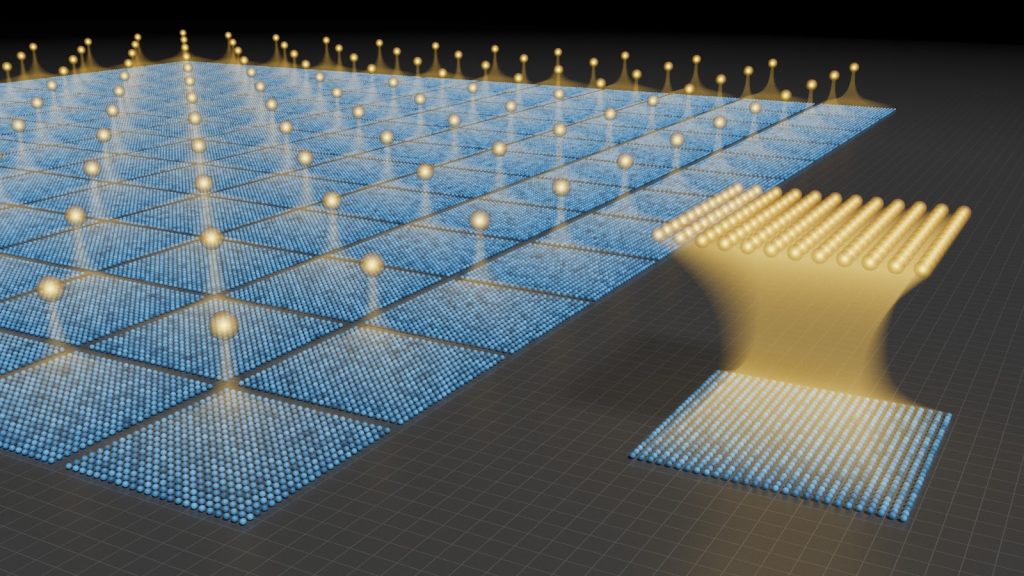

The team’s approach centers on so-called “digital twins,” computer simulations designed to mirror the behavior of real quantum hardware, including subtle sources of error. According to the researchers, accurate digital twins could help engineers test designs, improve error correction strategies, and predict system performance before building physical devices.

One of the main problems is scale: simulating quantum systems becomes exponentially harder as the number of qubits increases. A full, exact simulation of a 97-qubit system would require tracking at least 2^97 (roughly 1.6 × 10^29) complex amplitudes, far beyond the reach of conventional computers.
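To make the scaling concrete, the sketch below estimates the memory a dense state vector would need. It assumes 16 bytes per complex amplitude (double precision) and a pure state; the function name is illustrative and does not come from the researchers' work.

```python
def statevector_bytes(num_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Memory needed to store a dense pure-state vector.

    A pure state of n qubits has 2**n complex amplitudes. The default of
    16 bytes per amplitude is an assumption (two 64-bit floats).
    """
    return (2 ** num_qubits) * bytes_per_amplitude


if __name__ == "__main__":
    for n in (30, 50, 97):
        print(f"{n} qubits: {statevector_bytes(n):.3e} bytes")
```

Each added qubit doubles the requirement, so the jump from 30 qubits (about 17 GB) to 97 qubits is a factor of 2^67. Modeling noise exactly with a density matrix would square the number of entries.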

The researchers report that they overcame this limitation by combining a statistical simulation method with cloud computing infrastructure. Using Amazon EC2 high-performance computing instances, they simulated a “distance-7 surface code,” a standard error correction scheme involving 97 physical qubits. The simulation captured both simple and complex error types, including those that arise from interactions between qubits and timing mismatches in control signals.
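The 97-qubit figure is consistent with the standard count for a rotated surface code, in which a distance-d patch uses d^2 data qubits and d^2 - 1 measurement (ancilla) qubits. The short sketch below works through that arithmetic. The rotated layout is an assumption here, since the article does not specify which layout the team used.

```python
def rotated_surface_code_qubits(distance: int) -> dict:
    """Qubit counts for a rotated surface code patch (assumed layout).

    data qubits:    distance**2
    ancilla qubits: distance**2 - 1 (one per stabilizer check)
    """
    data = distance ** 2
    ancilla = distance ** 2 - 1
    return {"data": data, "ancilla": ancilla, "total": data + ancilla}


if __name__ == "__main__":
    for d in (3, 5, 7):
        print(d, rotated_surface_code_qubits(d))
```

For distance 7 this gives 49 data qubits plus 48 ancilla qubits, matching the 97 physical qubits reported.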

According to the team, the simulation ran in about an hour on a single compute node, orders of magnitude faster than traditional methods would allow.

Techniques

The approach builds on a technique known as quantum Monte Carlo simulation. Instead of calculating every possible state of the system, the method uses a large number of probabilistic “samples” to approximate the system’s behavior. This reduces the computational burden while preserving key features of the system’s noise.
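The idea of replacing an exact calculation with random samples can be illustrated with a toy example that is far simpler than the team's method. The sketch below assumes a single qubit prepared in the |+> state that suffers a phase flip with probability p at each step. It estimates the expectation value of X by averaging over sampled runs and compares it with the known exact value (1 - 2p)^steps. All names and parameters are hypothetical.

```python
import random


def sample_x_expectation(p: float, steps: int, shots: int, seed: int = 0) -> float:
    """Monte Carlo estimate of <X> for a qubit starting in |+>.

    Each step applies a phase flip (Z) with probability p. An odd number
    of flips maps |+> to |->, so a single run contributes +1 or -1.
    """
    rng = random.Random(seed)
    total = 0
    for _ in range(shots):
        flips = sum(rng.random() < p for _ in range(steps))
        total += 1 if flips % 2 == 0 else -1
    return total / shots


def exact_x_expectation(p: float, steps: int) -> float:
    """Closed-form result for the same toy dephasing model."""
    return (1 - 2 * p) ** steps


if __name__ == "__main__":
    estimate = sample_x_expectation(p=0.05, steps=10, shots=20000)
    print(f"sampled: {estimate:.4f}  exact: {exact_x_expectation(0.05, 10):.4f}")
```

The sampled value approaches the exact one as the number of shots grows, without the program ever storing the full quantum state of the noisy system.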

In practical terms, the method tracks how errors evolve during a quantum circuit — such as when qubits interact or are measured — and produces data similar to what an actual quantum device would generate. This includes “syndrome” measurements, which are signals used to detect and correct errors.
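As a rough illustration of what a syndrome is, the sketch below uses a three-bit repetition code rather than the surface code studied by the team. The syndrome is a pair of parity checks on neighboring bits; it flags where a flip occurred without reading out the encoded value. The lookup table and function names are illustrative assumptions.

```python
def syndrome(bits):
    """Parity checks on neighboring bits of a three-bit repetition code."""
    return (bits[0] ^ bits[1], bits[1] ^ bits[2])


# Lookup decoder: maps each syndrome to the single bit most likely flipped.
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def correct(bits):
    """Return a corrected copy of bits, assuming at most one flip occurred."""
    fixed = list(bits)
    flip = CORRECTION[syndrome(bits)]
    if flip is not None:
        fixed[flip] ^= 1
    return fixed


if __name__ == "__main__":
    for received in ([0, 0, 0], [0, 1, 0], [1, 1, 0]):
        print(received, syndrome(received), correct(received))
```

A single flip is repaired, but two flips produce a syndrome that the decoder misreads, turning the codeword into the wrong logical value. Larger codes such as the surface code push that failure threshold higher.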

The researchers say their method retains details that simpler models often ignore. Traditional simulation tools frequently assume errors behave randomly and independently, but real quantum devices exhibit more complex patterns, including correlated and phase-sensitive errors. These effects can significantly impact how well error correction works.
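One well-known example of a phase-sensitive effect is a coherent over-rotation, in which the same small rotation error repeats and adds up in amplitude. The sketch below compares that case with a model that treats each step as an independent random flip of matching single-step probability. It is a single-qubit toy under stated assumptions, not the noise model used in the study.

```python
import math


def coherent_flip_probability(eps: float, n: int) -> float:
    """Flip probability after n identical X over-rotations by angle eps.

    The rotation angles add, so the total rotation is n * eps.
    """
    return math.sin(n * eps / 2) ** 2


def stochastic_flip_probability(eps: float, n: int) -> float:
    """Same per-step flip probability, but applied as independent random flips.

    The result is the probability of an odd number of flips in n steps.
    """
    p = math.sin(eps / 2) ** 2
    return 0.5 * (1 - (1 - 2 * p) ** n)


if __name__ == "__main__":
    eps, n = 0.01, 100
    print(f"coherent:   {coherent_flip_probability(eps, n):.5f}")
    print(f"stochastic: {stochastic_flip_probability(eps, n):.5f}")
```

For small angles the coherent error grows roughly with the square of the number of steps, while the independent model grows only linearly, so a simulator built on the independent assumption can badly underestimate the damage.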

By capturing these details, the digital twin can produce more realistic data for testing error correction algorithms, including advanced approaches such as neural-network-based decoders.

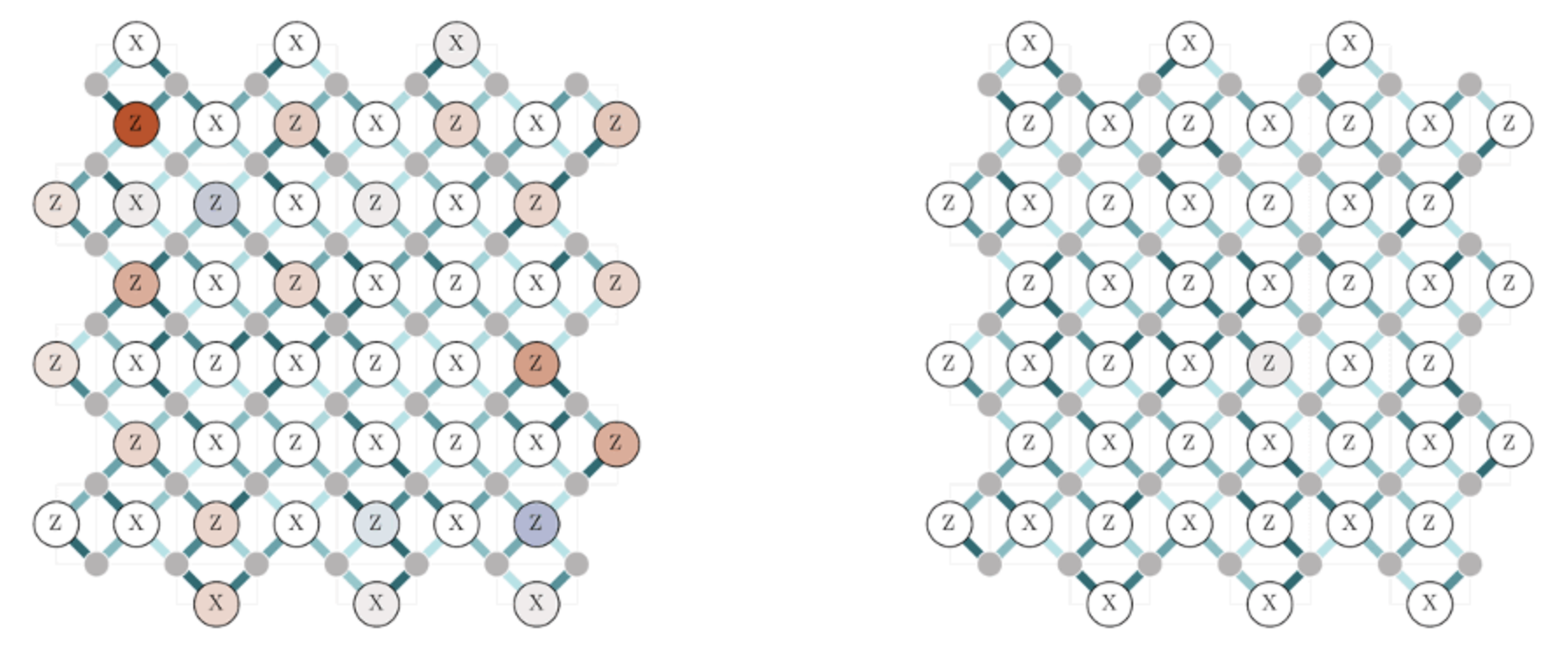

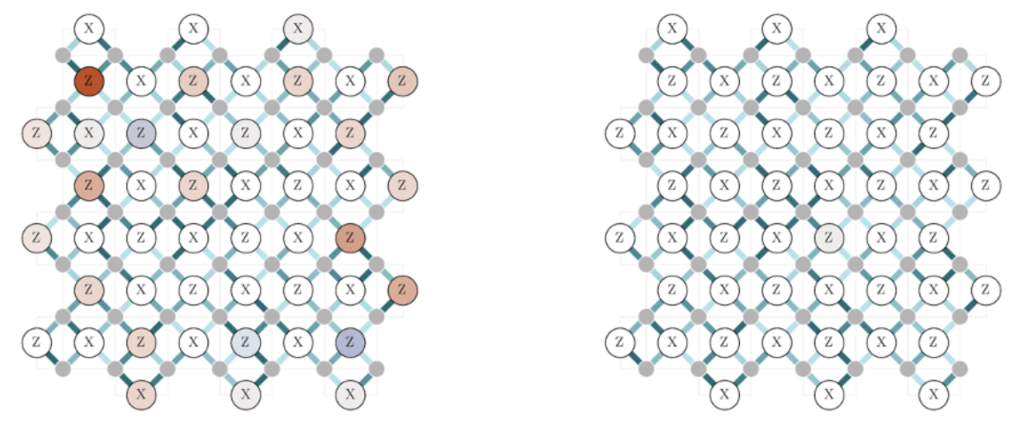

One of the study’s key findings is that realistic simulations reveal structured patterns of error that simpler models miss. For example, when the researchers varied the frequency of control signals applied to qubits — a common source of hardware miscalibration — the simulation showed spatially varying error patterns across the system.

By contrast, a widely used simplified simulator predicted a largely uniform response, failing to capture these nuances.

According to the researchers, this difference is important because error correction depends on recognizing patterns in measurement data. If the model used to design or train error correction software does not match the actual hardware, the resulting system may perform poorly.

The ability to generate realistic data at scale could help close this gap. Engineers could use digital twins to train and test error correction methods before deploying them on physical devices, potentially reducing development time and improving performance.

The work also highlights the growing role of classical computing infrastructure in advancing quantum technology. Rather than replacing classical systems, quantum development increasingly depends on them for simulation, optimization, and control.

In the paper, the researchers report that the simulation modeled a surface code circuit involving nearly 400 quantum operations, including single-qubit and two-qubit gates arranged in multiple layers. The researchers incorporated a range of realistic noise sources, such as energy loss, dephasing, and unwanted interactions between qubits.

They also included small calibration errors in control pulses to reflect imperfections in real hardware.

The simulation tracked both the measured outputs of the system and the underlying “true” state of the qubits, something that is not directly accessible in experiments. This allowed the team to compare how accurately the system’s measurements reflected the actual errors.

To ensure statistical reliability, the researchers ran multiple independent simulations and averaged the results.

Limits and Future Work

Although the work is an advance, the approach remains an approximation. Like all Monte Carlo methods, it relies on sampling, which introduces statistical uncertainty. Increasing accuracy requires more samples, which in turn increases computational cost.
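The trade-off between accuracy and cost follows the usual sampling rule: the statistical error of an estimated rate shrinks with the square root of the number of samples. The sketch below applies the standard binomial formula to an assumed error rate. The numbers are illustrative and are not results from the study.

```python
import math


def standard_error(p: float, shots: int) -> float:
    """Standard error of an estimated probability p from independent shots."""
    return math.sqrt(p * (1 - p) / shots)


def shots_for_precision(p: float, target: float) -> int:
    """Shots needed so the standard error is at most target."""
    return math.ceil(p * (1 - p) / target ** 2)


if __name__ == "__main__":
    p = 0.01  # assumed logical error rate, for illustration only
    for shots in (10_000, 40_000, 160_000):
        print(f"{shots:>7} shots: +/- {standard_error(p, shots):.5f}")
```

Halving the uncertainty requires four times as many samples, which is why high-precision estimates of rare errors become expensive.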

The noise model used in the study, while realistic, was not calibrated to a specific quantum device. As a result, the findings demonstrate general capabilities rather than performance on a particular system.

The simulations also focused on a single round of error correction. In practice, quantum computers must perform many such cycles, and errors can accumulate over time. Extending the approach to longer computations will be an important next step.

The researchers say they plan to expand the digital twin framework by incorporating richer and more device-specific error models. They also aim to use the simulated data to develop and evaluate more advanced error correction software.

Another goal is to link improvements in simulation and decoding directly to measurable gains in hardware performance. This would allow researchers to test design changes in simulation before implementing them in physical systems.

The broader implication is a tighter feedback loop between hardware and software development in quantum computing. By aligning simulations more closely with real devices, digital twins could help guide decisions about architecture, calibration, and system design.