“The Superposition Guy’s Podcast”, hosted by Yuval Boger, CCO at QuEra Computing

Natasha Sachdeva and Michael Biercuk from Q-CTRL are interviewed by Yuval Boger. They discussed their groundbreaking research on combinatorial optimization problems, achieving significant results with Q-CTRL’s error tools and modified algorithms. Michael highlighted their dual focus on quantum computing and sensing, emphasizing the practical applications of their technology. They also addressed the practical implications for businesses, insights into their future directions and reflections on the broader quantum landscape, and much more.

Listen on Spotify — here

Full Transcript

Yuval Boger: Hello Natasha, hello Michael, thank you so much for joining me today.

Natasha Sachdeva: Thank you for having us.

Michael Biercuk: Great to be here, Yuval.

Yuval: So Natasha, who are you and what do you do?

Natasha: So I’m Natasha Sachdeva, I’m a senior scientist at Q-CTRL and I work on quantum optimization, so specifically exploring what’s currently possible out there to achieve using current hardware, using Q-CTRL’s error tools, and combined with the latest research in quantum algorithms.

Yuval: And Michael, you almost don’t need any introduction, but who are you and what do you do?

Michael: That’s very, very kind of you. My name is Mike Biercuk, I’m the CEO and founder of Q-CTRL. My background is in building quantum computers and other quantum technologies, and it’s been an exciting ride for the last almost seven years running Q-CTRL, trying to make all of that hardware useful through software.

Yuval: I think we last spoke on this podcast about a year and a half ago, so maybe I start with you, Michael. What’s new in Q-CTRL during that time?

Michael: Yeah, it’s nice to get to catch up again. Of course, we’ve seen each other at lots of events around the world, so everybody’s always on the road, which is an exciting part of our job. I have been out there singing the gospel. There have been a huge number of developments on our side, both in quantum computing and in quantum sensing, where we have focused on how this kind of technology can be brought to reality through infrastructure software. So one of the biggest announcements was in December of ‘23 we announced and went live with our infrastructure software for performance management inside IBM’s quantum services and at the same time we’ve been brewing quite a lot in the way of software-ruggedized quantum sensors, using software to make quantum sensors perform well in the field. We announced some exciting stuff at The Economist in London, and there is much, much more to come in that area as well.

Yuval: Just to follow up on that, some investors preach focus, and you’re doing both sensing and computing. I realize that’s coming from the same underlying technology, but do you think that at some point in the future, you might have to choose one of them?

Michael: From the beginning, our argument was always that quantum control deployed as software was the means to make this kind of technology useful. And we knew that one single vertical, quantum computing, was not everything. It was not the be all, end all, despite the level of investor interest that really peaked in that area. So we began several years ago, it’s the end of 2020, investing very heavily in how the same kind of technology could be deployed in quantum sensing. For us, it’s just another application vertical, much like quantum computing can be deployed in biophysics or biotechnology, as well as logistics and optimization, as well as things like quantum machine learning. We see it simply as another outlet for application of our core technology, so we do feel quite tightly focused. But it’s an exciting opportunity that we get to have this earlier win, if you will, because quantum advantage has already been achieved in quantum sensing, and it’s really much more about taking these technologies into the field.

Yuval: You’ve recently published a paper that’s making a lot of waves, no pun intended. And Natasha, maybe you could describe the key takeaways from that paper?

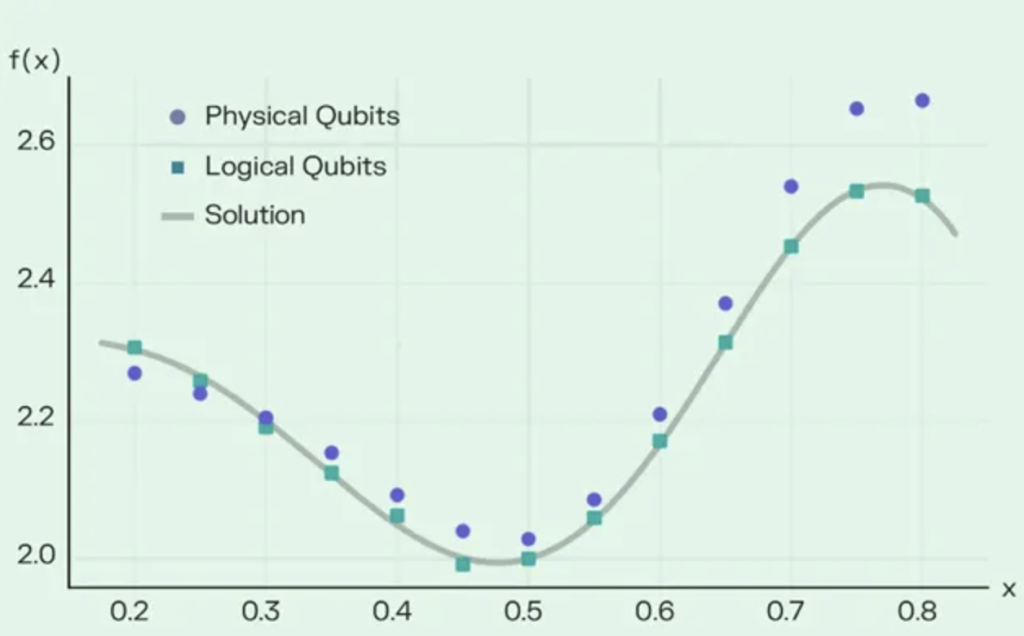

Natasha: Yeah. So in this paper, we presented two successful demonstrations of some combinatorial optimization problems. So in order to do that, we modified a well-known classical and quantum hybrid algorithm to be able to solve these two problems, which is Max-Cut and an Ising spin-glass problem. And what we were able to find is that using this modified algorithm combined with Q-CTRL’s error tools, we were able to find the optimal solution for a large number of problems. And basically, these are the largest demonstrations that we know of at least for this type of algorithm, successful demonstrations of this type of algorithm.

Michael: Yeah, that’s a really important point that Natasha got to at the end there. There have been lots of demonstrations of running QAOA. There have been lots of demonstrations of trying to solve these various optimization problems like Max-Cut. What’s kind of a dirty secret is that for the most part, running the algorithm does not result in finding the correct answer. It is simply a demonstration that the algorithm can run. And we went quite a bit beyond that, and Natasha’s work really delivered some exceptional outcomes, finding the right answer with exceptionally high probability.

Yuval: You mentioned two parts, I think. One is modifying the algorithm and then using your quantum control capabilities. What happens if you use just one of them? If you just modify the algorithm but don’t run quantum controls or just run quantum controls on an existing QAOA algorithm?

Natasha: So we’ve definitely tried just using the standard QAOA algorithm with Q-CTRL tools. We definitely see an improvement over existing published results as well using a standard QAOA algorithm, just using our error suppression tools, but not the dramatic results that we were able to achieve. So the error suppression definitely helps us quite a bit, but we definitely need the extra push that we get with these additional modifications, which is this modification of the QAOA circuit and this additional classical post-processing that we use.

Yuval: And what happens if you just run the modified algorithm without the quantum controls?

Natasha: I don’t know that we’ve actually done that test, but I assume that it’s going to be very similar where we’ll still achieve maybe better than what you would get with standard QAOA without the error suppression, but maybe not quite as dramatic as what we were able to achieve.

Michael: We did show that when you run QAOA without the core error suppression aspect, the outcomes of QAOA are indistinguishable from random selection. And that was a really important finding. We knew that there was a whole series of innovations that had to be drawn together in order to achieve the key outcome of actually solving the problem correctly. And really the first thing that we noticed was when you just ran the out of the box hardware, what you got was no different than random. And that was a sign that there was some work to do even at the hardware level.

Yuval: If I’m a business executive at a company that does not do quantum computing, so I’m an enterprise customer, I read the headline about your work, what is my takeaway? What does that mean for my business?

Natasha: So these tools basically can solve problems that are relevant for industry. Max-Cut is a problem that shows up all the time in networking and logistics. And so these are relevant problems for business use cases. And what we’ve shown is that we are getting very close to an era where these tools can really compete with classical methods. And I do think there’s a point to be made about the versatility of the tool that we’ve created, that you don’t need to know a lot about the type of problem, the types of algorithms that work for a particular problem to use Q-CTRL’s Solver tool.

Michael: Yeah, to us it was also really exciting that we could solve problems that were limited not by the performance of the hardware, but rather by the size of the hardware. We were really pushing right up to the total number of quantum bits that were available to us. And that said to us that even though we are still in a regime where the problems are reasonably small, and you can take a standard optimizer like CPLEX and you can solve the problem without much challenge, the fact that we can get the right answer with effectively unit probability at full device scale for these classically non-trivial problems says to us that as the devices get bigger, there’s a really enormous opportunity ahead saying, “Well, maybe we’ll be able to finally achieve quantum advantage in not the fault-tolerant regime necessarily, but perhaps before that or with some hybrid of a small amount of error correction in order to deliver really useful results for business.”

Yuval: These are QUBO problems, I think, and they have a certain number of constraints. How many constraints can you solve given current hardware? I think you tried it on a 127-qubit machine or something like that.

Michael: Yeah, just to be clear, we solved both QUBO and what are called in a kind of funny name, HOBO problems. These are higher order binary optimization. The particular versions that we were solving were not constrained optimizations. They were unconstrained, although future iterations of the tool will enable the addition of constraints. But Natasha can say some more about the types of problems that we were solving.

Natasha: So we solved these two problems, the QUBO problem and the higher order optimization problem. So the relevance is that the QUBO problems are ones that are, I think, more easy to solve with current technology. The HOBO problems require typically various other applications, the addition of constraints or slack variables in order to be able to solve them. And so one of the advantages of our method is that we don’t need to do some sort of problem-specific representation of the problem in order to be able to solve it on these devices. You can sort of input the problem at any order and the solver is able to address it.

Michael: But it was really cool that even in the Max-Cut, appreciating that these are the simpler class, if you will, of problems. The first thing to say is these were non-trivial. So these were so-called pre-regular graphs for the experts out there. They’re graphs where each node is connected to three or more neighbors. We actually pushed a much higher density. So for those of you in the audience who are familiar with these, we went up to seven regular graphs and were still able to, with unity probability, find the correct answer. That was really quite exciting because previously a lot of the demonstrations in the literature would be almost trivial implementations of Max-Cut, linear problems and the like. So going into the regime of problems that we know are actually hard classically, even though we’re solving on the easier side of hard, was a great outcome from the team.

Yuval: Approximately, for how many constraints could you solve with the current hardware and what does that number need to be to be truly useful to our business?

Natasha: So again, we mostly worked with unconstrained problems. With constraints, there’s definitely more development to be done. And I think currently when you add constraints, it is kind of difficult to achieve the results that we’ve had at the certain device scale, at the 100 plus qubit device scale. But it is definitely possible to encode constraint problems that are again relevant and useful at that scale.

Michael: Yeah, so one very cool thing also is that you can, with a gate model machine, directly encode a constrained optimization or a higher order optimization. There are not these ideas of slack variables. It’s obviously a different circuit. It’s a different application that you run and it will be much, much more complicated. But it seems to lend itself in a way that’s more efficient than some alternate architectures that have been explored.

Yuval: Speaking of alternate architectures, I think the paper compared it to annealers, what was the finding regarding the annealer comparison and what does it mean for people who use annealers today?

Michael: I think the top line was that we looked at the published literature and there was some really, really nice work from a team at Los Alamos that had studied the Max-Cut problems and the higher order binary optimization problems. We actually used exactly their problem instances that they were kind enough to share with us. We liked them because they did a huge amount of testing on systems that were gate model, but also systems that were annealer based. And we were able to show in some of the problems that were shared with us that starting from where they were, which was running QAOA and not actually being able to find the correct answer when the annealers were consistently getting pretty reasonable results, with the solver that Natasha was talking about just a little while ago, we were able to actually find the correct solution to these higher order problems, even when the annealer could not. And in the cases where both found the correct answer, the likelihood of finding the correct answer was much, much higher with the solver that we were talking about.

Now we were excited that an annealer company came out and followed up. Most importantly, we were excited that they validated the results. They actually showed on the same exact problem instances that as expected, we would be able to find the correct answer even in some cases where they could not. Now we were very clear in the paper and there’s a whole long paragraph at the end that gives all the caveats about what we do and don’t claim. We want to be so clear that we don’t say that this is a definitive outcome in which gate model machines will always beat annealers. No, this is one set of problems in which we have seen these positive outcomes from gate model machines. It doesn’t mean you can’t do better in the future with an annealer. It doesn’t mean annealers can’t do better on other problems. That’s none of our set of claims. But it had been the conventional wisdom based on the results that were coming out in the literature that gate model machines were simply uncompetitive, that they were orders of magnitude behind what annealers could do in problems. And the demonstrations that Natasha and her team put forth show that’s just not a true statement anymore.

Yuval: To what extent is this applicable to other algorithms beyond optimization? So for instance, could I use the same thing to improve the performance of a quantum machine learning job or other types?

Natasha: So in a lot of areas, especially like the parameterization that we explored, there are overlaps with quantum machine learning. It’s definitely an open research area at Q-CTRL, something that we are actively looking into. So there is some overlap for sure.

Michael: And we’ve also seen that core bits of what we put together in this quantum solver have already been deployed for problems in machine learning. Our friends at BlueQubit, our friends at Softbank who are partners and customers of ours, they have used our error suppression pipeline and a product called Fire Opal to get better outcomes in the quantum machine learning problems that they were studying.

What was pretty interesting about this particular quantum solver is we put everything together end to end such that for a user, it’s really one command. It is generally a very substantial amount of overhead to start from a problem definition, go through the correct ansatz for the QAOA circuit, the correct implementation, the correct selection of an error reduction strategy of some kind, the correct classical loop in the hybrid algorithm. All of these things typically need to be built by you, even if you use an SDK for it. In our implementation, it’s really just one command and everything has been configured for hardware optimization in the background.

Yuval: Is the implementation just a paper or is it a product? Can I use it today?

Michael: It’s available. It was available the day that the paper went on the archive. So anybody can use it via our Fire Opal product.

Yuval: How about hardware that’s not made by IBM? Gate model hardware that’s made by other vendors?

Michael: We’re very excited for more vendors that are going to be appearing as supported backends later in this year. So keep your ear out. We have to give credit to IBM for being quite front-footed on building a really robust, and flexible API that allowed us to build on top of it, not just us, many others. And it’s been exciting to see other vendors providing that as well, like QuEra. Our friends at Rigetti have had a pulse level API for a long time. And as these systems become more widely available, we’re very keen to bring them into the fold.

Yuval: Natasha, now that this paper has been published, what are you working on next?

Natasha: There are so many open research directions. I think there’s quite a lot of algorithms out there that we can expand our solver on. There are other optimization strategies, other ansatzes. There’s other… We mentioned the quantum machine learning direction. So there’s just an open landscape of different directions that we can pursue. I think improving upon these results is definitely a major goal. Supporting constraints is another major goal for us. But yeah, just tackling more and more difficult problems, scaling up as much as possible as larger hardware becomes available, and so on.

Yuval: Mike, if you had to look into your crystal ball, at what point in the future does this become truly advantageous to a business that’s using Gurobi or using some classical solvers?

Michael: Yeah, it’s obviously the $64 billion question or whatever it might be in inflation adjusted terms for those who get the joke out there. I think we’ve been looking at this problem from the end use perspective. And that is not asking so much what is a theoretical proof of what is the threshold for quantum advantage, but rather what is the practical area where we’re seeing solvers that are available starting to fall over. I’ve been a little surprised in certain ways. So one surprise for me was that for a lot of commercially relevant problems in transport and logistics, even the heuristic solvers leave quite a lot of room on the table at quite modest scales. So these are scales of not thinking about schedules of millions of passengers and thousands or tens of thousands of vehicles, but hundreds of passengers and a dozen vehicles. At these very, very small scales, the problems are already quite hard and the heuristics can leave something behind. So that’s encouraging.

But then the other part, frankly, is that a lot of applications have really been underserved by the classical software set. We did some work with the army in Australia and they were excited in particular because even though they’ve had the same problem for a couple of decades effectively, which is managing logistics for a military exercise and obviously for real military activities in other cases, there was no classical solver that would solve the problem in a way that worked for them, that met the constraints that were relevant to them. So this combination of three things. One of course is the hardware is accelerating. We’re all seeing that from QuEra, from others, tremendous progress. Number two, the realization that the opportunity for advantage is not necessarily at the largest possible scales, but at much more modest scales. And third, the identification of areas that have been historically underserved even by classical technology where all they want is a solution. They don’t care necessarily if it’s quantum or classical. We feel that it is at least conceivable and possible that this can really happen, with continued development in the hardware, continued innovation in the software, within the next three to five years.

This is obviously a contentious point. We know that there’s a lot of debate about whether it’s feasible at all to realize quantum advantage before fault tolerance. I would say our view has been a little bit more holistic that instead of the purity of fault tolerance, if you will, we are after something that is fault-tolerant enough, in air quotes, to solve a problem that really matters. And we do think that’s coming soon.

Yuval: You mentioned the paper or counter paper by the annealing vendor. To the best of your understanding, what are they claiming?

Michael: Well, there’s obviously been a bit of discussion online. The first claim was that actually it was just the classical solver, which was solving the problem. And after a small bit of discussion, we showed that that’s not true. The classical solver that they referenced is called CMA-ES. That’s the classical part of the hybrid loop. It’s resetting the so-called QAOA circuit parameters.

The next claim was effectively, okay, this quantum solver can actually solve the problem. They were good enough not only to validate the higher order binary optimization problems that we talked about, this Ising spin glass, but they also showed that even in the Max-Cut regime where we did not expect to be even close because for Qubo annealers are great, that actually we were quite competitive within a factor of order unity in terms of the probability of getting the correct answer. So that was an interesting surprise to me.But then they said, “Well, really we believe that you should evaluate based on a different metric.” And the metric would be a metric that was trying to quantify how long it takes to reach a solution of a certain quality. I would say there’s a fair bit of debate about the new metric that they introduced. With a very small amount of back of the envelope calculation we showed that you can game it by orders of magnitude with simple tweaks that actually make the runtime longer. So in a practical sense, you can make the whole thing slower, but you can make the time to solution metric better. It’s in our view not relevant. We didn’t make any claims about time to solution. We lay out in as matter of fact a way as possible in the appendix of the manuscript how long we knew it took us on the IBM machines and how long we believed it took on the annealers based on the previously published results. They gave additional detail on that. And frankly, it’s up to the user to decide what is the right metric. All we talked about was the fact that it was actually possible to get the correct answer out of a gate model machine, and that has not historically been the case.

Yuval: How long did it take to get to a solution in that paper? I must have missed the appendix.

Natasha: So it depends on the problem size. So for up to around a 32-qubit problem, the total wall clock time is around six minutes. And then for the largest problems that we investigated, the wall clock time was around 20 minutes. The QPU time for each was I think around 150 seconds for the smaller problems. And then for the larger problems, I don’t quite remember, but I think on the order of around eight minutes or so.

Michael: And that’s end to end. That’s everything. That’s from here is my problem definition to here is the answer. There’s no extra processing or pre-processing or embedding or problem manipulation. There’s nothing else to do. Once you define the graph, it just runs.

Yuval: Where’s the classical time spent? I mean, I know you’re doing a little bit of greedy post-processing. That surely doesn’t take a lot of time. So is it on the front end?

Michael: It’s the magic API black hole. It’s on the hardware side. When you send a command, it takes time for it to return.

Yuval: By the way, for transparency, we’re happy to have the annealing vendor or any other vendor come to this podcast and discuss their view of these results or other results. So this is not specific to Q-CTRL. We’re very happy that you accepted this invitation.

Michael: Sure. Can I just say, Yuval, one really important point from us. We did not set out in any way to go after any particular vendor or any particular architecture. We simply reported something that was new. The new thing is that you could run an algorithm for quantum optimization on a gate model machine and get better results than anything that had been previously published before. So we are convinced that there will be continued innovation in the gate model side and in the annealer side that will yield better results and it’s likely to be an iterative process whereby who ultimately wins, it’s not our business. We just did a science experiment and built a product out of it for delivering value to end users.

Yuval: I would be remiss if I didn’t ask, since we last spoke, did anything happen in Quantum Australia? I mean, is Australia just dormant as it relates to Quantum or what’s your view of the current situation?

Michael: Absolutely nothing going on here. Nothing to see here. As you clearly know, there was an exciting announcement from the Australian government that they were backing with very bold ambition a vendor of quantum hardware to build fault tolerant quantum computers. Obviously this has been the topic of a lot of discussion. We think it was great that the government could get behind actually picking a winner and doing something big and bold. What we’re excited about now is actually seeing them invest in the local community. As you know, the company in which they invested was not an Australian company, it was an American company. Now, the next stage is building up the local ecosystem and we’re very keen to see that happen.

Yuval: As we get close to the end of the session, Natasha, what was the most surprising thing for you in this work, in the published work?

Natasha: I think we actually were not expecting to be able to find the true ground state energy of these spin glass problems. I think that was actually a huge surprise for us. We were just trying to see what we could possibly get. We didn’t expect the results that we saw. I think the Max-Cut problems, we were definitely excited about what we had seen previously and we were just scaling up to larger and larger problems. But for the spin glass problem, there’s cubic terms, it’s a bit more complex. So our results were definitely very exciting and unexpected, but welcome.

Yuval: So if I could also ask you a hypothetical, Natasha, and then maybe Michael, if you could have dinner with one of the quantum greats dead or alive, who would that person be?

Natasha: It would probably be Paul Dirac. I think he’s up there, in my eyes, with Einstein and greats like that. And I think he’s just known to be an interesting character that would be interesting to have dinner with and see his take on the current developments in quantum computing, quantum sensing and just how much the quantum field has evolved in the last 100 years.

Yuval: And Michael, for you, and no peeking at what you said a year and a half ago.

Michael: Well, the first name that comes to me, I think it was the same name, but I’m not sure. I’ll say Marie Curie. And it was not just because of her work, it’s because of some of the public writings and the thought that she showed about the way science engaged with the world. And she has my all-time favorite quote. I actually use it on Christmas cards, as some people know: “Nothing in life is to be feared, it is only to be understood. Now is the time to understand more so that we may fear less”. And I think it’s a really apt message in the times in which we find ourselves today.

Yuval: Wonderful. Natasha, Mike, thank you so much for joining me today.

Natasha: Thank you.

Michael: Thanks, Yuval.

To subscribe to the audio podcast, please Spotify here