Exposing the emptiness of the h-word: how claims of hyperbole ring hollow; and how nobody, not even today’s experts, can possibly know the limits of Quantum.

By Shai Phillips

President, PSIRCH

In a monthly segment in The Quantum Insider, PSIRCH’s President, Shai Phillips, will conduct a first-of-its-kind audit of broad industry-internal accusations of exaggeration in quantum computing and associated fields. How much truth there is to them will be the focus of this series.

“In 2019… a former colleague told me anyone who said there would ever be a quantum computer with more than 100 qubits was a liar. Maybe Something to keep in mind when judging progress in the field.”

Dr Joe Fitzsimons, CEO – Horizon Quantum Computing

- Expert views in Quantum support the outlook that quantum computing is set to change the world, meaning far-reaching generalized projections regarding Quantum are valid and not hyperbolic.

- There is strong evidence quantum computing will aid in artificial intelligence/ machine learning, including with exponential speedups when quantum data is involved, in training AI, and in generalizing, thus some skeptical statements by experts do not tell the full story, and can be misleading to various audiences.

- Even expert claims of hyperbole, largely due to the impracticality of qualifying all remarks, can be inaccurate, ironically hyperbolic, and have the potential to retard progress in Quantum. Thus, a cessation of such claims will be beneficial.

Part IV – A New Paradigm

For various reasons, many quantum experts who participated in conversations that led to the findings and discussions in this series were not able to be quoted by name. I am truly grateful to these unsung heroes for their valuable help in getting to the bottom of some highly complex subject matter. This series is dedicated to them.

RECONSIDERING THE RUBRIC

In the last two segments of this series, we conducted a surface audit of computational complexity theory, demonstrating how it has been inaccurately used to make claims of so-called “hype” in quantum computing – claims which, ironically, were thus hyperbolic themselves. This month’s column will be the last in the series to touch on complexity theory, asking how apt it is when faced with a new computing paradigm. We hope you will tune in next time also, as we turn to considering possibly the biggest debate today in quantum computing: its ability to aid in machine learning and artificial intelligence. Spoiler alert: there is very compelling evidence that it will. After that topic, we will delve into the mainstream world of pop-cynicism to consider claims of exaggeration in quantum made on popular social media sites, to see just how misleading they can get.

In flippantly invoking the h-word, one thing academics and theoreticians almost never ponder is whether complexity theory is even the best rubric to use as we enter an era of uncharted territory. So established is this lens through which computer science is viewed, that its adequacy in the face of a new computational paradigm is barely questioned. Keep in mind, this is the same model that still has not been able to reach a resolution on whether even classical complexity classes are equivalent: the infamous ‘P vs NP’ matter remains unresolved. Aaronson’s view on this, when asked, was that it remains at least one necessary lens through which we must peer. It may not be a stretch to imagine therefore that Aaronson’s views are usually colored by the tint of this rubric, while new engineering, new physics, new hardware, and new minds are brought to bear.

It’s interesting to note that, when describing complexity classes via this model, class definitions can be utterly dependent on one another: NP-Complete, for instance, has no independent description. In order to define it, one must inevitably characterize it in relation to another complexity class: e.g.: “an algorithm for solving it can solve any NP problem.” It makes sense to most computer scientists, of course, but from an outside perspective, it’s a bit like describing a car as a small van, and a van as a large car – a circular characterization that provides little clarification. Still, this is just a quirk, and there are more important items on the menu, such as other rubrics out there which may add value as we enter the era of quantum computing. Such other rubrics do not necessarily serve to replace the model of computational complexity theory, but do at least shed light on the fact that there is indeed controversy as to which models may be most useful in embracing the new quantum era.

One such rubric is Categorical Quantum Mechanics and, by extension, ZX(W)-calculus, pioneered by Bob Coecke, who is now the Chief Scientist at Quantinuum and affiliated with the Perimeter Institute in Canada, as well as Samson Abramsky, and Ross Duncan, in 2004 and 2008 respectively. Deriving from monoidal category theory and algebraic topology, it is a dramatically distinct approach to thinking about quantum information, and has since been widely adopted. While Coecke and others swear by this graphical language, Aaronson is less enthused, and professed to me that he views it rather as a far lengthier method of describing pretty much the same thing.

Contrarily, a recently published paper by Coecke et al., at Quantinuum and at Oxford University, took this model even further, and was able to generalize ZXW-calculus to all finite dimensions, i.e. to ‘qudits’ (multi-dimensional, rather than two-dimensional qubits), proving it to be complete for every finite dimension: “Completeness for arbitrary finite dimensions of ZXW calculus, a unifying calculus”. The paper shows that ZXW-calculus “has advanced how quantum circuits are compiled into photonic hardware architectures in the industry” and has enabled “the development of novel techniques in… quantum machine learning and quantum chemistry”. It thereby establishes itself as “the first completeness result for any universal language beyond qubits.” Pretty impressive.

However, the purpose of raising this is not to promote Category Theory as a winning model, but rather to show that there exist differences of opinion in quantum information theory, even among experts. This goes to illustrate that even expert views do not equate to fact, especially in such a young, fast-growing field as quantum computing.

Similarly, in another conversation I had with an expert in the field, the limitations of complexity theory were corroborated, and made abundantly clear. Per Dr. Eric Holland, who heads the quantum team at Keysight Technologies: “from a historical perspective with HPC [high performance computing], complexity theory has not previously been able to predict even classical algorithm improvements because, at the time, computers simply weren’t powerful enough to implement them. As data structures and data architectures saw dramatic performance improvements”, Holland notes, suddenly the impossible became possible. And “because these were all due to feats of engineering, complexity theory simply couldn’t foresee them”. Sound familiar?

In truth, the more conversations I’ve had with quantum experts, including computer scientists, the more I’ve found many simply don’t pay much attention to complexity theory these days. They often just don’t care, or sometimes don’t know too much about it. Towards the end of my research, at times I found myself explaining basic elements of it to them, rather than vice-versa. Some described themselves taking a different posture in regard to their work, such as by referring to themselves as “empiricists” and so forth. We can understand this lack of conviction in complexity theory because, useful though it might be in many respects, much of it simply hasn’t panned out into full proofs, and many of its assumptions are still based on conjecture (P vs NP being one example). This sentiment in relation to complexity theory was reiterated various times by quantum experts, and leads to one simple revelation: we shouldn’t rely on it moving forward.

Looking back, we can also see inconsistencies in complexity theory that didn’t become apparent until much later. For example, calculating molecular energy is an intractable problem classically, but not quantumly, as described by Alan Aspuru-Guzik et al. in the paper “Simulated Quantum Computation of Molecular Energies”, which states: “The calculation time for the energy of atoms and molecules scales exponentially with system size on a classical computer, but polynomially using quantum algorithms. We demonstrate such algorithms can be applied to problems of chemical interest using modest numbers of qubits.” What this essentially indicates is that calculating molecular energy, in complexity-theory terms, is in the quantum-efficient class ‘BQP’ (the algorithm is bounded-error rather than exact, so ‘BQP’ rather than ‘EQP’). Yet it was formerly thought to be in the class ‘PSPACE’. How many other quantum-efficient problems are similarly hiding in other classes?

(And by the way, a big congratulations to Alan, as not only did he recently attain “the largest single federal research grant ever awarded to a Canadian university”, $200 Million, per Global News, but he also co-founded Zapata AI, which just went public.)

We should also recognize that, while non-experts spend time wondering if we might ever prove the consensus wrong, and miraculously see quantum computers solve NP-complete or NP-hard problems, there may be other exponentially hard problem classes that aren’t often considered by non-experts. For example, Michael Bremner, Director of the Center for Quantum Software and Information at the University of Technology Sydney, told me that: “#P and GapP are both exponentially hard classes of ‘counting problems’, for which quantum computers give a polynomial approximation, whereas classical computers are not known to.” Dr. Bremner went on to say that “in fact, the beyond classical computations performed to date were built around the differences in the complexity of approximations for problems in these classes between classical and quantum computers.” Our entire fixation on NP-complete and NP-hard problems may thus be merely a red herring. There are other classically intractable problems to consider.

Exponential speedups for quantum over classical algorithms, and by extension, quantum advantage, are nothing new. They even pre-date the famous Shor’s algorithm for factoring large numbers: the Deutsch-Jozsa algorithm differentiates between classes of functions, giving an oracle separation of the P and EQP complexity classes (closely followed by Bernstein-Vazirani’s, achieving this for BPP and BQP). Equally, Simon’s algorithm solves the hidden subgroup problem exponentially faster than deterministic and probabilistic classical algorithms, making its separation of BPP and BQP exponential, and eventually leading to Shor’s algorithm, which tackles an instance of the problem for finite abelian groups. On the other hand, Grover’s algorithm for search is known to provide a quadratic speedup only, and therefore could only lead to a smaller exponential, rather than being able to solve an exponentially hard problem in good time. But we shouldn’t mistake this to mean smaller speedups are somehow useless – far from it. As Saikat Guha, Director of the Center for Quantum Networks at University of Arizona told me: “reducing to a smaller exponential can be highly beneficial”, citing e.g. the vertex-minor problem. “Perhaps not all cases can be solved by an efficient algorithm, but many useful instances can be tackled using quantum algorithms, which keep improving.” (In the next section below, we’ll take a closer look at this concept of problem instances.) Another relevant example is D-Wave’s paper in Nature: “Quantum critical dynamics in a 5000-qubit programmable spin glass” by Andrew King et al. As the paper notes, “simulation of many-body quantum dynamics” is intractable, yet D-Wave achieves a speedup over classical for optimization problems. The paper states it “must estimate ground state energy [which is] NP-hard for cubic lattices”, then expounds on a useful (though smaller-than-exponential) speedup.
There are some prominent papers, notably including scientists from Microsoft and Google (e.g.: Troyer; Babbush) claiming a need for greater than quadratic speedups, and we’ll get to those too. But circling back to the holy grail of exponential speedups, perhaps the most famous is HHL – the quantum algorithm for solving linear systems of equations, and its exponential speedup over classical algorithms in the vital operation of matrix inversion. Given the high applicability of the algorithm to machine learning, however, we’ll reserve discussion on it until the next segment, in a special Quantum Artificial Intelligence edition. Tune in!
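To put rough numbers on the earlier remark that Grover’s quadratic speedup leads to “a smaller exponential”, here is a minimal arithmetic sketch. It assumes an unstructured search over N = 2^n items, a classical worst case of about N lookups, and the standard estimate of roughly (π/4)·√N Grover iterations. The function names are illustrative only, and nothing here models real hardware or error-correction overhead.

```python
import math


def classical_queries(n_bits: int) -> int:
    """Approximate worst-case lookups for unstructured search over 2**n_bits items."""
    return 2 ** n_bits


def grover_queries(n_bits: int) -> int:
    """Approximate Grover iteration count, floor(pi/4 * sqrt(N)) with N = 2**n_bits."""
    return math.floor(math.pi / 4 * math.sqrt(2 ** n_bits))


if __name__ == "__main__":
    # The quantum count still grows exponentially in n, but like 2**(n/2) instead of 2**n.
    for n in (20, 40, 60):
        print(f"n={n}: classical ~{classical_queries(n):.3e}, Grover ~{grover_queries(n):.3e}")
```

Both columns grow exponentially with n, but the exponent is halved, which is the sense in which a quadratic speedup can still matter a great deal in practice.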

APPROXIMATION & PROBLEM INSTANCES

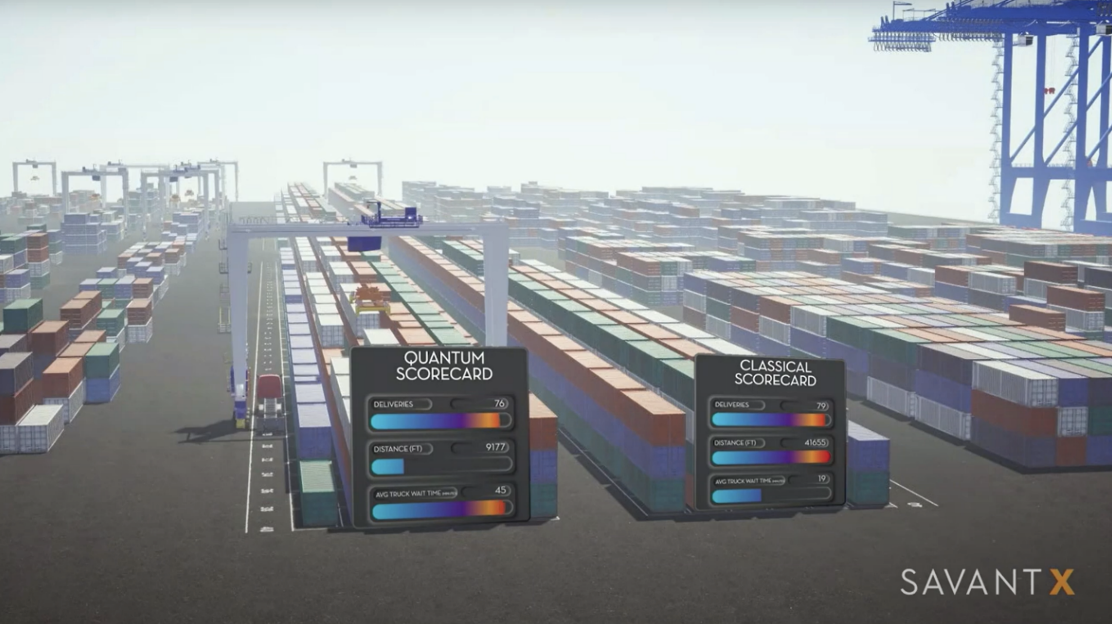

Still, there are two reasons why we might in fact say quantum computers may ‘solve’ NP-complete/NP-hard problems, like TSP. The first concerns approximation, and the second relates to problem instances. Using these frameworks, it is by no means an outlandish assertion. Starting with the first, it’s important to note: approximations can come so close to precision (excluding worst case scenarios), that it’s impractical to care about not being spot-on. If you hit bullseye in a game of darts, but are slightly left of center, do you care? Not really. It’s still bullseye. Worst case scenarios are rarely an issue in tackling practical problems, so the massive ways in which some problems scale need not interfere with useful approximation in the outperformance of quantum computers over classical, as with QAOA (the Quantum Approximate Optimization Algorithm). It all comes down to how one defines the word “solve”, and as we’ve previously seen, theorists tend to opt for mathematical perfection, whereas quantum computing companies aim for practical commercial benefit. As such, “solve” could be a perfectly good word for such a quantum benefit. Using this definition, quantum computers have been shown potentially capable of solving these exponentially hard problems where classical computers really struggle.
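The gap between “exact” and “good enough” can be seen even classically. The sketch below is purely illustrative and is not a quantum algorithm or QAOA: it compares a brute-force optimal tour with a simple heuristic (nearest neighbour followed by 2-opt) on a small random TSP instance. The instance size, the random seed, and all function names are assumptions chosen for the example.

```python
import itertools
import math
import random


def tour_length(tour, pts):
    """Length of the closed tour that visits pts in the given order."""
    return sum(math.dist(pts[tour[i]], pts[tour[(i + 1) % len(tour)]])
               for i in range(len(tour)))


def brute_force(pts):
    """Exact optimum by checking every ordering; the cost grows factorially."""
    rest = range(1, len(pts))
    best = min(((0,) + p for p in itertools.permutations(rest)),
               key=lambda t: tour_length(t, pts))
    return list(best)


def nearest_neighbour(pts):
    """Greedy heuristic: always move to the closest unvisited city."""
    unvisited = set(range(1, len(pts)))
    tour = [0]
    while unvisited:
        last = tour[-1]
        nxt = min(unvisited, key=lambda j: math.dist(pts[last], pts[j]))
        unvisited.remove(nxt)
        tour.append(nxt)
    return tour


def two_opt(tour, pts):
    """Local search: reverse segments for as long as that shortens the tour."""
    improved = True
    while improved:
        improved = False
        for i in range(1, len(tour) - 1):
            for j in range(i + 1, len(tour)):
                candidate = tour[:i] + tour[i:j + 1][::-1] + tour[j + 1:]
                if tour_length(candidate, pts) < tour_length(tour, pts) - 1e-12:
                    tour, improved = candidate, True
    return tour


random.seed(0)  # arbitrary seed, so the toy instance is reproducible
pts = [(random.random(), random.random()) for _ in range(8)]
exact = brute_force(pts)
approx = two_opt(nearest_neighbour(pts), pts)
ratio = tour_length(approx, pts) / tour_length(exact, pts)
print(f"heuristic tour is {ratio:.3f}x the optimal length")
```

The ratio can never drop below 1. How close it gets depends on the instance, which is exactly the point about worst cases versus typical cases.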

In the second case, it’s also valid to predict quantum computers might be able to solve NP-complete/NP-hard problems exponentially faster than classical computation, if we consider problem instances alone, rather than the entire problem. This is an absolutely crucial distinction: If we solve an NP-complete problem at large, including worst case scenarios, as the problem scales massively, like TSP, we essentially solve every NP-complete problem! (As they all map to each other.) So, it becomes a very bold claim indeed, and very unlikely, as it would not just change the world, but do so pretty much overnight. However, what might be possible is for quantum computers to solve various instances of such problems, entailing exponential speedups over classical algorithms.

Problem instances are concrete problem cases rather than entire problems in the abstract, and are subsets of problems like TSP. They don’t encompass the whole problem in general, just certain cases within that problem (say, of size n). Nonetheless, “it would be very useful to solve certain subclasses of NP-complete or NP-hard problems exponentially faster than classical computers, and would make a powerful use case for quantum computers”, says one proponent of the ability of quantum computers to achieve this: Shengtao Wang, Quantum Algorithms and Applications Manager at QuEra Computing. Shengtao believes that although quantum computers may not be able to achieve exponential speedup for the whole class of NP-complete problems, there could be certain subclasses that are amenable to quantum algorithms achieving an exponential speedup. According to Shengtao, we may be able to: “find exponential speedups for certain optimization problems that have special structures. We are actively exploring if there are some problem instances for which we can find these. For example, certain instances of Maximum Independent Set problems that can be naturally encoded in the neutral-atom platform.” Shengtao’s confidence and inspiration are drawn largely from evidence of previous achievements in quantum algorithms, such as Shor’s, and are based on the idea of exploiting the intrinsic structure inside certain problems.
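A classical analogy may help show why structure inside instances matters. Maximum Independent Set is NP-hard on general graphs, yet when the graph happens to be a tree it can be solved exactly in linear time by dynamic programming. The sketch below illustrates only that general idea. It is not the neutral-atom encoding Shengtao describes, it makes no claim about quantum speedups, and the function names and the example tree are assumptions made for the illustration.

```python
def mis_brute_force(n, edges):
    """Exact MIS size on any graph by trying every subset: about 2**n checks."""
    best = 0
    for mask in range(1 << n):
        independent = all(not (((mask >> u) & 1) and ((mask >> v) & 1))
                          for u, v in edges)
        if independent:
            best = max(best, bin(mask).count("1"))
    return best


def mis_tree(n, edges, root=0):
    """Exact MIS size when the graph is a tree: dynamic programming, linear time."""
    adj = [[] for _ in range(n)]
    for u, v in edges:
        adj[u].append(v)
        adj[v].append(u)

    # Depth-first order from the root, recording each vertex's parent.
    parent = [-1] * n
    seen = [False] * n
    seen[root] = True
    order, stack = [], [root]
    while stack:
        u = stack.pop()
        order.append(u)
        for v in adj[u]:
            if not seen[v]:
                seen[v] = True
                parent[v] = u
                stack.append(v)

    take = [1] * n  # best size within u's subtree if u is in the set
    skip = [0] * n  # best size within u's subtree if u is left out
    for u in reversed(order):  # children are processed before their parents
        p = parent[u]
        if p != -1:
            take[p] += skip[u]
            skip[p] += max(take[u], skip[u])
    return max(take[root], skip[root])


# A small binary tree: root 0, internal vertices 1 and 2, leaves 3 to 6.
tree_edges = [(0, 1), (0, 2), (1, 3), (1, 4), (2, 5), (2, 6)]
print(mis_tree(7, tree_edges), mis_brute_force(7, tree_edges))
```

Both functions return the same answer on the tree, but only the brute-force version has a running time that blows up as the graph grows.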

With Travelling Salesman (TSP), there are plenty of variants with identifiable structures that could potentially be exploitable for quantum utility: E.g. Metric TSP; Symmetric TSP; Euclidean TSP. Thus, we may have more reason to be optimistic than the over-cynicism implies. In sum, quantum computers may be capable of ‘solving’ NP-complete/NP-hard problems if the word is not interpreted in the strictest sense. I.e. not meaning: completely; generally; precisely; and for all scenarios, including worst-case. Yet, many audiences do not know to interpret this claim in any other way: Laypeople, financiers, equity analysts, young students, and other such stakeholders probably wouldn’t know to expect that approximations might come into play in such a highly useful fashion, nor that problem instances may in fact benefit from exponential quantum speedups, and so the skeptical attitude of some experts, combined with the lack of elaboration and qualification, can tend to be misleading. It’s the attitude, not the facts, that needs adjusting, and that’s an easy fix.

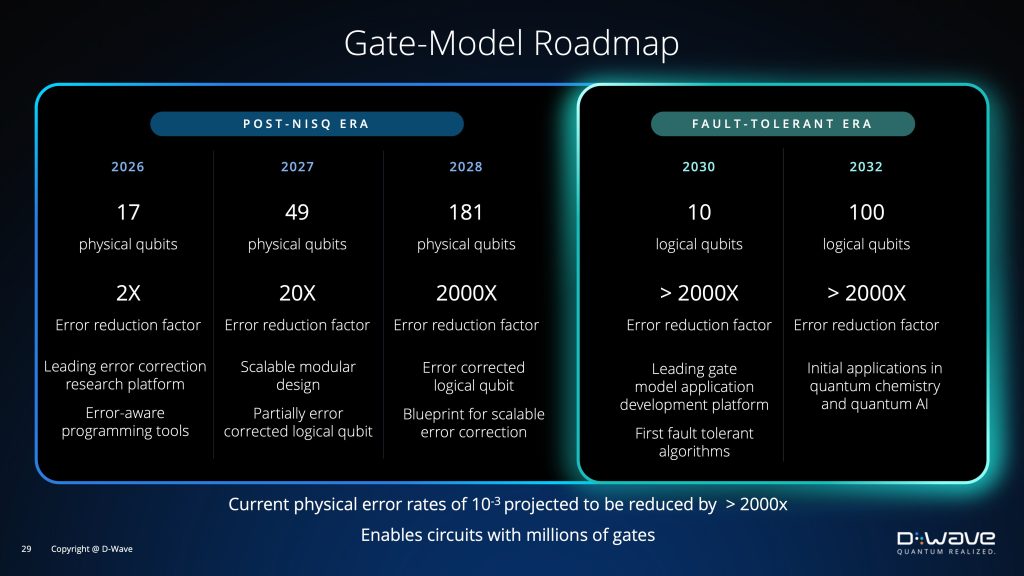

The result of over-cynicism may also go beyond being purely damaging to sentiment re the quantum industry, and may actually end up being quite dangerous. For example, on breaking RSA encryption specifically, there is a pervasive viewpoint that we are light years away from any real encroachment by quantum computers, and so 2035 seems to be the target date for our preparation, per the NSA. However, one video released by the excellent science channel, “Veritasium”, discussing quantum Fourier transform, called: “How quantum computers break the internet…starting now” observes the following: “In 2012 it was estimated that it would take a billion physical qubits to break RSA encryption, but by five years later that number had dropped to 230 million, and in 2019, after more technological breakthroughs, that number plummeted to just 20 million physical qubits.” Admittedly, we are a long way away from that number, but if it keeps decreasing as our physical qubit count goes up, we’re looking at a powerful convergence for which we may just find ourselves wholly unprepared, all thanks to unhelpful overly-cynical attitudes. Extremely recent breakthroughs by companies such as QuEra Computing, Microsoft, and Quantinuum, especially in the area of error correction (which Aaronson himself hailed as a “new bar” in the field in his blog, “Shtetl-Optimized”), have only made it more likely that the above decrease in requisite qubit-count will continue, and even accelerate, resulting in an ever-dicier security landscape, as quantum computers become a reality much faster than many will have anticipated.

There is real, honest-to-God nonsense out there about quantum, such as proclamations about quantum healing, and the like, and that’s bad enough. We can call BS on that type of thing, without calling into question earnest attempts to advance the technology too. Otherwise, we tarnish the good with the bad, and paint them with the same brush. This hurts us all: the industry, and all who might benefit from advances in the technology.

THE WINNING NON-THEORETICAL APPROACH – A LOOK AT HEURISTICS

One type of algorithm not yet mentioned is the heuristic kind. Heuristic algorithms are especially important subject matter here, as they take a different approach entirely – they do not attempt to prove themselves theoretically before application, but instead are a trial-and-error method of seeing what works and what doesn’t. They have played a huge role in the progress of computing to date, as some of the biggest breakthroughs have not been sketched out to perfection in theory and then implemented. Instead, they have been “happened-upon” by launching headfirst into hunches and suspicions, then identified in retrospect. Our current state of classical artificial intelligence was arrived at in a similar manner, and much of how it works is still a black box to even the most specialized experts. Like hypnosis, they know it works, they just don’t quite know why, at least not in a detailed sense. Heuristics have thus ever been steppingstones towards incremental progress, and will continue to play this instrumental role in the quantum era. No theoretician will be able to deduce what may come, and as experts bravely and skillfully attempt to plan algorithmic design to perfection on paper, heuristics may leap-frog over any such efforts, and shine a floodlight on blind spots we never knew we had.

I spoke about this with Dr. Steve Flammia, while he was Principal Research Scientist at Amazon’s AWS Center for Quantum Computing at Caltech (now Director of the quantum center at Virginia Tech). Steve called to mind the example of linear programming attempts dating back decades. These attempts addressed various resource constraints, and the endeavor to maximize output in the face of such limitations. Flammia explains: initially, an algorithm was developed that “worked well, but no one could prove it did”. The so-called simplex method was not universal so, in certain cases, “it did not work in a reasonable amount of time. That is because the algorithm still converged, but it took exponential time in some instances.” In the end, a provable algorithm was discovered, but the heuristic version was still utilized because “its runtime remained superior on all reasonable real-world inputs”. “Eventually,” Flammia concluded, “they were able to incorporate the insights from the heuristics (using the ellipsoid method, with polynomial time upper bounds), and now we have the best of both worlds.”

Broadly speaking, heuristics have been dubbed problem-specific, while meta-heuristics are problem-independent, with both playing important roles. As we’ve seen, these types of distinctions can be significant. The aforementioned distinction between problems and problem instances is a key example of how we need to be precise in making such fine distinctions, because when we say: “oh, quantum computers will never be able to solve NP-hard problems, and that’s a fact”, this can be highly misleading, since what it really means is that quantum computers won’t be able to solve all NP-hard problems at once. Sure, it seems unlikely at present that this gigantic feat will occur, but we don’t need to do anything of the sort to make progress! Instead, solving problem instances, which serious experts are working on, is plenty sufficient to make incremental headway. Then, who knows where that will lead us…

(Tune in next time for a special edition, as we look at Quantum Artificial Intelligence.)