Insider Brief

- Scientists say they ran complex, large-scale programs on an error-corrected quantum computer using 48 logical qubits.

- The work, published in Nature, represents a significant milestone in the transition to practical quantum computing, the team said.

- Several innovations — such as qubit shuttling and zoned architecture — could be critical to paving the way for scalability.

Scientists from Harvard, quantum computing company QuEra, and the Massachusetts Institute of Technology (MIT) successfully ran complex, large-scale programs on an error-corrected quantum computer using 48 logical qubits. Though more work remains, the researchers report that this milestone, published in the journal Nature, marks a significant step in the transition to practical quantum computing.

The team added that several innovations – such as qubit shuttling and zoned architecture – not only paved the way for this academic achievement but could also create a path to scaling the approach into practical applications.

“The progress our Harvard-led team has made in quantum computing represents a collective triumph, born from years of dedicated research and a steadfast commitment to push the limits of technology,” said Alex Keesling, CEO of QuEra Computing. “More than an exciting demonstration, this milestone underscores the strength found in the strategic backing of groundbreaking research. We stand at the beginning of a new era, the era of quantum error correction, poised to address some of the most intricate challenges faced globally. QuEra and our academic collaborators take pride in leading the charge into this exciting new frontier.”

Understanding the Quantum Challenge

Quantum computers hold the potential to revolutionize various fields by solving intricate problems beyond the capabilities of classical computers.

To understand the unique challenge of quantum computing – and how this study makes a critical impact – it’s important to understand the difference between physical and logical qubits. Physical qubits are the actual quantum bits made from physical quantum systems, while logical qubits are robust qubits formed from groups of physical qubits for error-tolerant quantum computation.

The development of logical qubits is essential for building practical and scalable quantum computers because they can maintain coherence for longer periods, which is necessary for executing complex quantum algorithms successfully. However, as the researchers explain in this work, real-world applications are stymied because the slightest environmental noise – from heat to cosmic rays – affects physical qubits, corrupting computations and introducing errors that limit the devices’ computational abilities.

The proposed solution to controlling that noise, according to the scientists, has been to use multiple physical qubits to represent a single logical qubit as a quantum error correction technique to make calculations more stable and reliable. However, using up all of those powerful qubits to control errors significantly affects the scalability of quantum computers, among other problems.

The Harvard-led team’s work shows another path forward to solving that problem by significantly boosting the number of logical qubits used in an operation.

According to the scientists, in a system built at Harvard, they have realized quantum computation with 48 logical qubits and hundreds of entangling operations. Prior demonstrations were limited to two logical qubits and a single entangling operation, according to the scientists. Moreover, the researchers report an impressive increase in fidelity – the accuracy of a qubit’s state – that surpasses the individual physical qubits, with that accuracy increasing with code distance. In other words, a larger code distance means stronger error correction, resulting in more accurate and reliable quantum information processing.
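The link between code distance and accuracy can be illustrated with a deliberately simplified toy model. The sketch below uses a classical repetition code with majority voting, which is not the code used in the study, and assumes every physical copy fails independently with the same probability. The function name `logical_error_rate` and the 0.5% physical error rate (chosen to mirror the 99.5% gate fidelity reported in the article) are illustrative assumptions.

```python
from math import comb


def logical_error_rate(p: float, distance: int) -> float:
    """Chance that majority voting over `distance` copies gives the wrong answer.

    Toy model (an assumption, not the study's code): each copy flips
    independently with probability p, and the vote fails when more than
    half of the copies have flipped.
    """
    if distance < 1 or distance % 2 == 0:
        raise ValueError("distance must be a positive odd integer")
    min_flips_to_fail = distance // 2 + 1
    return sum(
        comb(distance, k) * p**k * (1 - p) ** (distance - k)
        for k in range(min_flips_to_fail, distance + 1)
    )


if __name__ == "__main__":
    physical_error = 0.005  # assumed 0.5% error per physical copy
    for d in (1, 3, 5, 7):
        print(f"distance {d}: logical error ~ {logical_error_rate(physical_error, d):.3e}")
```

In this toy model, each increase in distance lowers the logical error rate as long as the physical error rate is small, which is the qualitative behavior the researchers describe.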

Industry experts believe that executing large-scale algorithms on an error-corrected quantum computer paves the way for future devices to manage complex computations reliably.

“This breakthrough not only accelerates the timeline for practical quantum applications but also opens up new avenues for solving problems that were previously considered intractable by classical computing methods,” Matt Langione, Partner at the Boston Consulting Group, said in a statement. “It’s a game-changer that significantly elevates the commercial viability of quantum computing. Businesses across sectors should take note, as the race to quantum advantage just got a major boost.”

Shuttling Qubits Efficiently

One of the most important innovations that made this advance possible is qubit shuttling, a technology that Harvard scientists have been working on for a few years, according to the researchers. Unlike some quantum modalities, neutral atom qubits can be moved without losing their quantum state, enabling efficient error correction and simplified circuits, the researchers said.

Traditionally, qubits in quantum processors are like houses in a neighborhood, fixed in place, limiting their interactions to nearby neighbors. Like a quantum mass transit system, qubit shuttling transforms this neighborhood into a bustling city with a network of paths, allowing any house (qubit) to interact with another, regardless of their original location. This is possible because neutral atom qubits, trapped and transported by optical tweezers, maintain their quantum states even when moved.

This dynamic connectivity not only simplifies the creation of complex quantum circuits but also enhances error correction. Imagine a scenario where one can rearrange the parts of a complex machine while it’s running, to immediately fix errors without shutting it down.

Scalability

Another advantage of qubit shuttling is that it dramatically reduces the number of control signals, and thus makes scaling possible.

One way to think about this: a classical central processing unit, or CPU, has memory and registers. The CPU works by moving data from memory to the registers, doing something in the registers, and then putting it back in memory. This is similar to the way the zoned architecture works.

Now, consider that memory in the desktop computer can be doubled or tripled without changing the CPU or increasing the number of registers.

Similarly, most of the control signals in this approach are in the entangling zone, so the number of qubits in the memory/storage area can be increased without a significant increase in control signals. In contrast, each superconducting qubit requires two to three control signals, so a 10,000-qubit superconducting processor would require 20,000 to 30,000 signals, all housed in a cryogenic chamber, which becomes very difficult.
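The scaling argument can be made concrete with a little arithmetic. The sketch below computes the per-qubit signal count from the two-to-three-signals figure quoted above, and contrasts it with a hypothetical zoned model in which the signal count is fixed by the entangling zone. The function names and the zoned-architecture figure of 500 signals are placeholders for illustration only, not numbers from the study.

```python
def per_qubit_signal_range(n_qubits: int, low: int = 2, high: int = 3) -> tuple[int, int]:
    """Signal count when every qubit needs its own `low` to `high` control lines."""
    return n_qubits * low, n_qubits * high


def zoned_signal_count(n_storage_qubits: int, entangling_zone_signals: int) -> int:
    """Hypothetical zoned model: signals are set by the entangling zone alone.

    Assumption for illustration: adding storage qubits adds no control
    signals, so n_storage_qubits does not affect the result.
    """
    if n_storage_qubits < 0:
        raise ValueError("n_storage_qubits must be non-negative")
    return entangling_zone_signals


if __name__ == "__main__":
    for n in (1_000, 10_000):
        low, high = per_qubit_signal_range(n)
        zoned = zoned_signal_count(n, entangling_zone_signals=500)  # placeholder value
        print(f"{n} qubits: per-qubit wiring {low}-{high} signals, zoned (hypothetical) {zoned}")
```

Under these assumptions the per-qubit approach grows linearly with processor size, while the zoned count stays flat as storage grows.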

The scientists suggest that qubit shuttling is more than a new technique; it could lead to a totally new paradigm in quantum computation.

The Tip of the Innovative Iceberg

While the successful integration of qubit shuttling is impressive enough, the scientists added that the study unveiled several innovations that helped set new achievements on benchmarks that are critical to the eventual adoption of quantum computing for practical uses.

The team lists several of these key advances that converged in the study:

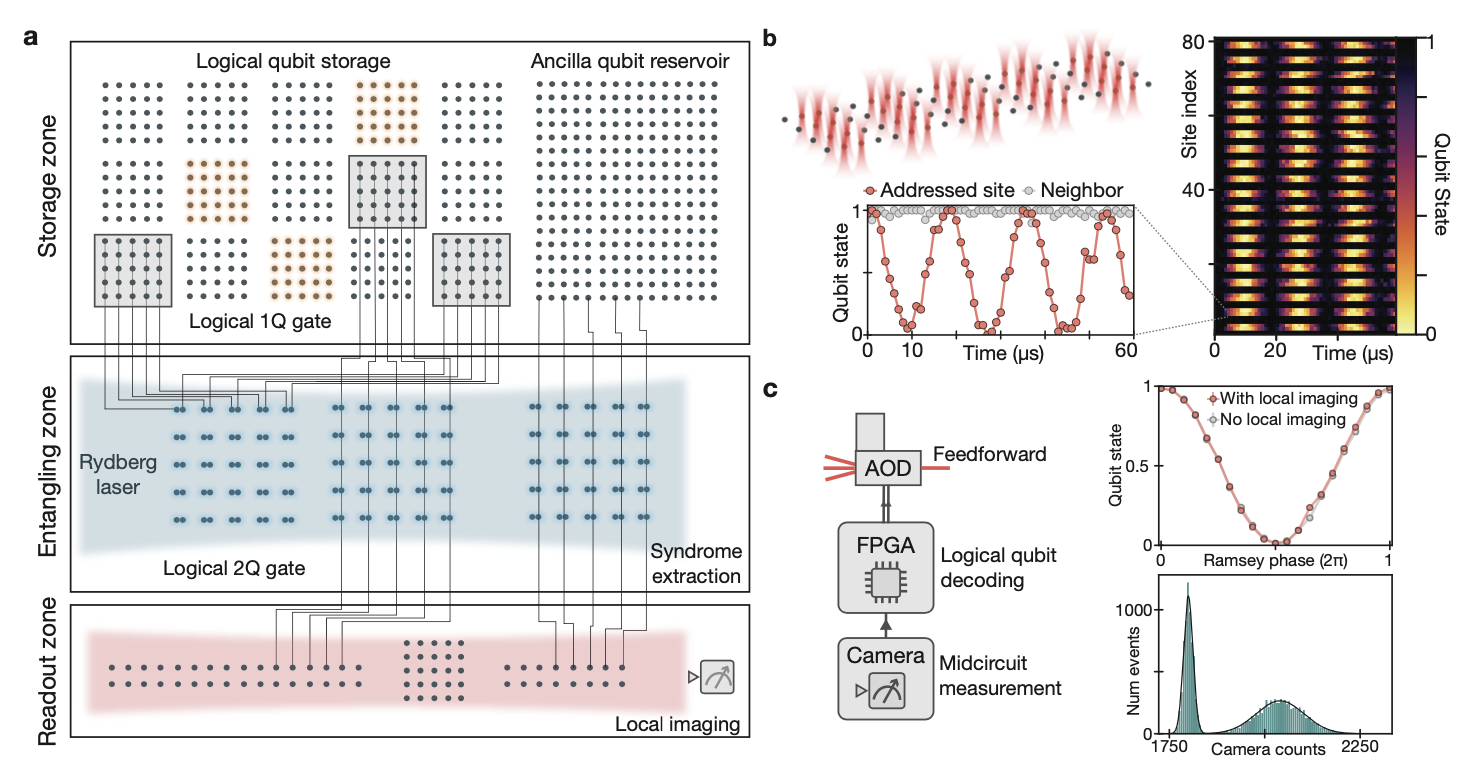

A Surge in Qubit Numbers: The team controlled 280 physical qubits. Because each logical qubit is built from multiple physical ones, a larger physical-qubit count allows more logical qubits.

Enhanced Two-Qubit Gate Fidelity: Quantum computations require high-precision operations on qubits, known as gates. The team reported a fidelity rate of 99.5% for these gates.

Hardware-Efficient Control: The researchers implemented direct, parallel control over groups of logical qubits. Such an approach reduces control overhead, making quantum computing more scalable.

Zoned Architecture: Drawing from classical computing, the team introduced a zoned design. The scientists segmented operations into storage, entangling, and readout zones, ensuring precise and efficient quantum computation.

Mid-Circuit Readout and Feedforward: Building on this design, the team achieved high-fidelity mid-circuit measurements of select qubits, a feature essential for many quantum computation methods, the researchers report.

Next Steps

QuEra’s real mission is to build a quantum computer, based on this research, that can solve practical problems more effectively than current classical technology. Experts differ on just how many of these logical qubits will be needed to bring quantum computing out of the lab and into the real world.

Some scientists estimate it will take thousands of these high-quality qubits to achieve this quantum advantage. On the other hand, some believe that just 100 top-notch qubits would suffice, especially if coupled with other hardware advances, methodological innovations and algorithmic improvements.

The researchers feel confident that, by increasing laser power and optimizing control methods, the zoned architecture and logical-level control could allow their techniques to be readily scaled to over 10,000 physical qubits. They add that the efficiency of quantum error correction can be improved by reducing two-qubit gate errors to 0.1%.
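A rough calculation shows why the gate error rate matters so much. The sketch below estimates the chance that an uncorrected circuit runs with no gate error at all, assuming independent errors. It compares the 0.5% error implied by the 99.5% fidelity reported earlier with the 0.1% target. The function name and the 200-gate circuit size are illustrative assumptions, the latter standing in for the "hundreds of entangling operations" mentioned in the article.

```python
def error_free_probability(gate_error: float, n_gates: int) -> float:
    """Probability that none of n_gates fails, assuming independent gate errors."""
    if not 0.0 <= gate_error <= 1.0:
        raise ValueError("gate_error must be between 0 and 1")
    return (1.0 - gate_error) ** n_gates


if __name__ == "__main__":
    n_gates = 200  # assumed circuit size, for illustration only
    for gate_error in (0.005, 0.001):
        prob = error_free_probability(gate_error, n_gates)
        print(f"gate error {gate_error:.1%}: error-free probability ~ {prob:.2f}")
```

This simple estimate ignores error correction entirely; it only indicates how quickly raw errors accumulate and why lower gate error leaves less for the error-correcting code to fix.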

Some other researchers suggest that anything over 50 qubits may be able to beat classical computational efforts. In fact, the Harvard-led team strategically landed on using 48 qubits for the test because it gave them a chance to compare what their quantum system could do with what could be simulated on a regular computer, in order to make sure the quantum results were real and reliable.

The work was supported by the Defense Advanced Research Projects Agency through the Optimization with Noisy Intermediate-Scale Quantum devices (ONISQ) program, the National Science Foundation, and the Army Research Office.

QuEra will hold a special event on Jan 9, 2023 at 11:30 AM ET to reveal its commercial roadmap for fault-tolerant quantum computers.

You can register for this online event at https://quera.link/roadmap
