Quantum computing has captured the imagination of many corporate executives. By promising to solve problems that could not be reasonably solved with classical computers, the right combination of quantum hardware and software can deliver competitive advantage, new revenue streams, cost reductions, and other bottom-line benefits.

Indeed, in a recent survey of 500 professionals performed by market research firm Propeller Research, over 61% of respondents reported that their companies have a budget for quantum computing technologies, with an additional 21% saying that their company does not have a budget, but is planning to add one. The number was particularly high (nearly 80%) in finance-related companies.

As is the case with other frontier technologies, companies are approaching quantum computing in different ways. Some companies have taken a wait-and-see approach, accepting a calculated risk that they might have to play high-speed catch-up in a year or two. In others, interested individuals explored quantum computing as an after-hours skunkworks effort, and then succeeded in convincing their managers to turn their efforts into formal rather than clandestine projects. Yet others have embraced quantum computing from the top, built exploratory teams, and tasked them with building internal quantum competence and identifying relevant use cases. These companies then selected several of the identified use cases for proof-of-concept projects. Some of these proofs of concept have now been completed successfully, and companies are starting to think about deploying quantum solutions in production.

“In the past year, the quantum computing sector has turned a major corner, transitioning from primarily a lab-based research activity into an increasingly attractive commercial option,” said Bob Sorensen, senior vice president of research and chief analyst for quantum computing at Hyperion Research. “As such, the growing number of potential end users will be looking to leading-edge quantum computing suppliers not only for demonstrated technological prowess but about what quantum computing can do for them. These end users will want to know how they can best integrate quantum computing into their overall R&D, business, and IT infrastructures; how they will be able to attract the right quantum and related subject matter experts to support those activities; how much they will need to budget to stand up and run a robust quantum computing capability; and perhaps most importantly, what significant market and financial advantages quantum computing capability can bring to the table.”

What do IT professionals need to know when considering moving quantum computing into production?

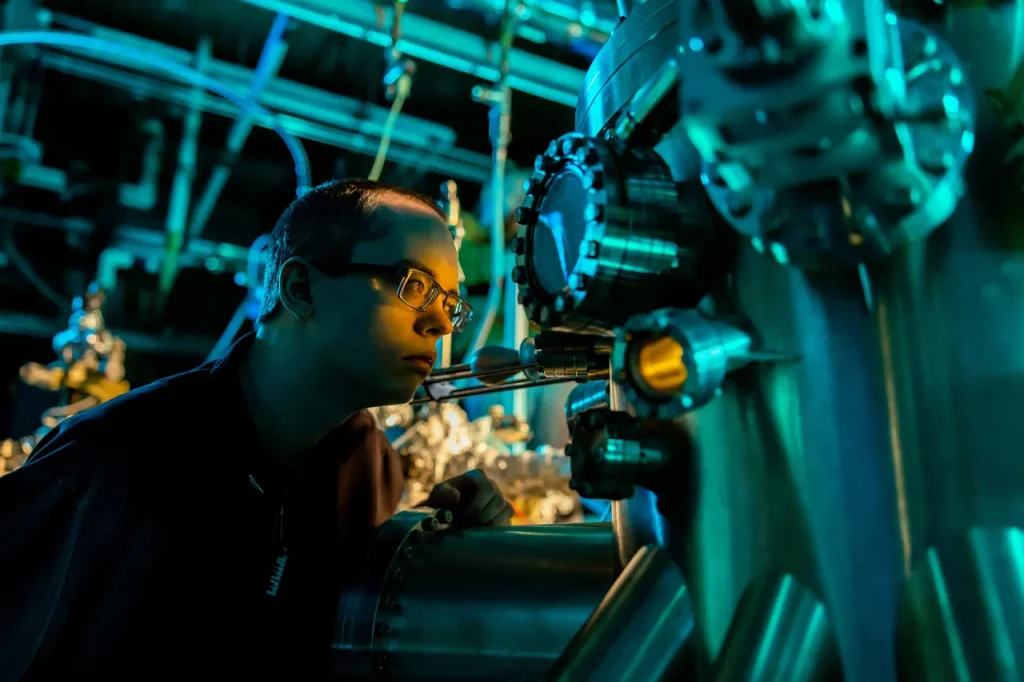

Is the hardware strong enough?

The quantum bit (qubit) is the basic unit of quantum information, loosely analogous to the binary classical bit.

The number of qubits correlates strongly with the capacity and capabilities of quantum machines. More qubits often mean that a larger molecule can be simulated, a more sophisticated optimization problem can be solved, or that a calculation can be performed with higher precision.

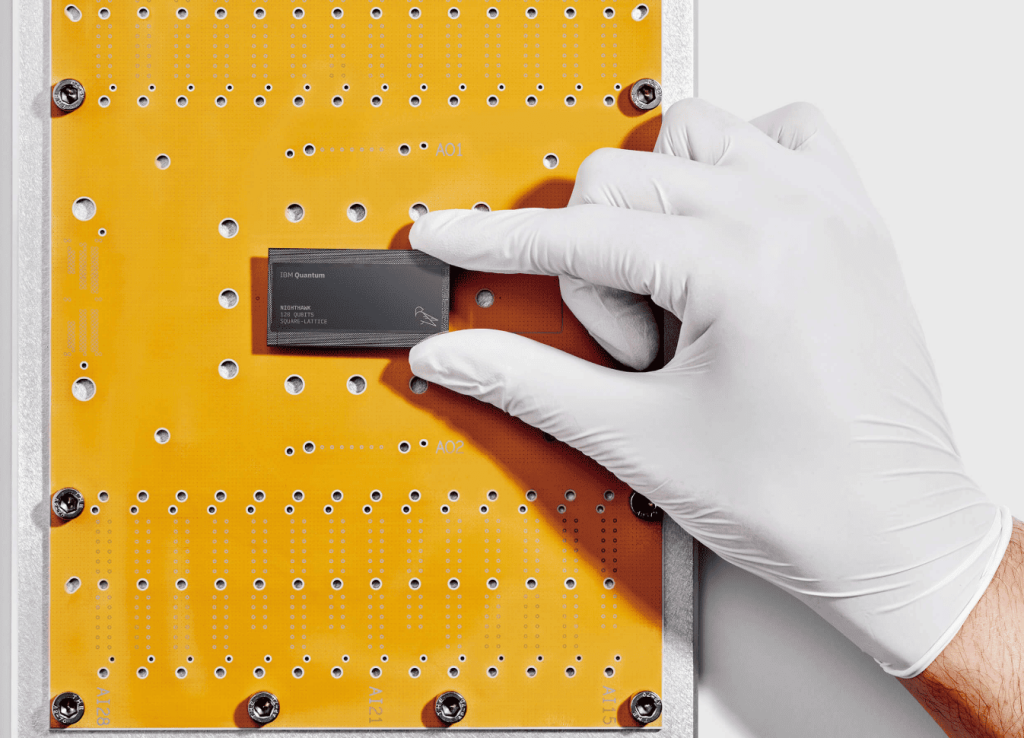

IT professionals should seek to estimate the number of qubits they need. The number of available qubits in each generation of quantum machines grows faster than Moore’s law for classical computers. For instance, IBM announced a hardware roadmap to deliver machines with 127 qubits in 2021, 433 in 2022, and 1121 qubits in 2023. Other vendors, such as Honeywell, are predicting similar growth.
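To make the growth comparison concrete, here is a small sketch that computes the year-over-year growth implied by the IBM roadmap figures quoted above. It assumes the common reading of Moore's law as a doubling roughly every two years; that baseline is an assumption, and qubit counts are not directly comparable to transistor counts, so treat the result as a rough illustration only.

```python
# Qubit counts from the IBM roadmap quoted in the text.
ROADMAP = {2021: 127, 2022: 433, 2023: 1121}

# Assumption: Moore's law read as "doubling every two years",
# which corresponds to an annual growth factor of sqrt(2).
MOORE_ANNUAL_FACTOR = 2 ** 0.5


def annual_growth_factors(roadmap):
    """Return {year: qubits(year) / qubits(previous listed year)}."""
    years = sorted(roadmap)
    return {
        later: roadmap[later] / roadmap[earlier]
        for earlier, later in zip(years, years[1:])
    }


if __name__ == "__main__":
    for year, factor in annual_growth_factors(ROADMAP).items():
        verdict = "faster" if factor > MOORE_ANNUAL_FACTOR else "not faster"
        print(f"{year}: x{factor:.2f} per year ({verdict} than the Moore baseline)")
```

The same function can be pointed at any vendor's published roadmap to see whether the qubit count you estimate you need is likely to be available in the time frame you are planning for.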

Where is the hardware, anyway?

Given the popularity of cloud services such as AWS and Azure, companies might assume that cloud-based quantum computing is an obvious choice. This view is strengthened by the rapid pace at which quantum computers evolve. If you buy a quantum computer for your data center and it is obsolete in two years, did you make the right choice?

However, cloud-based quantum computing presents availability concerns. How quickly would your quantum job be serviced? With a classical cloud, you know that the provider (Google, for instance) is in charge of the data center. Quantum computers, by contrast, are currently managed in private data centers owned by the hardware manufacturers. Even if you use a public cloud, your job is redirected to a third-party data center that might not be as robust as the classical data center that you had been expecting.

What about an SLA?

When outsourcing compute, or almost any other function, one would want to define a service level agreement (SLA) that might include uptime, response time, capacity, and more. These metrics might also depend on the physical location of the quantum hardware.

It is fair to expect that quantum SLAs lag significantly behind SLAs of more established computing technologies, and thus IT managers need to consider whether they are sufficiently comfortable with the SLAs that they can get.

Predictable cost

Want to know how much an EC2 instance will cost you? Amazon has a pricing calculator and a way to cap your spending. Want to know how much running a quantum algorithm will cost you? The tools are not so advanced, and there is also significant divergence in how price is calculated: is it based on time? On the number of quantum operations? If quantum computers are to replace classical computers for certain types of calculations, the replacement must be cost-effective enough to compensate for the fact that quantum computers are not as mature.
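One way to reason about this divergence is to price the same job under both models and compare. The sketch below does that. The `Job` fields, the function names, and every rate used with them are hypothetical placeholders, not any vendor's actual pricing scheme.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Job:
    """A hypothetical quantum job, described only by what pricing depends on."""
    runtime_seconds: float  # time the job occupies the quantum processor
    operations: int         # number of quantum operations executed


def time_based_cost(job: Job, rate_per_second: float) -> float:
    """Cost when the provider bills by processor time (hypothetical rate)."""
    return job.runtime_seconds * rate_per_second


def operation_based_cost(job: Job, rate_per_operation: float) -> float:
    """Cost when the provider bills per quantum operation (hypothetical rate)."""
    return job.operations * rate_per_operation


def cheaper_model(job: Job, rate_per_second: float, rate_per_operation: float) -> str:
    """Return 'time' or 'operations'; ties are reported as 'time'."""
    by_time = time_based_cost(job, rate_per_second)
    by_ops = operation_based_cost(job, rate_per_operation)
    return "time" if by_time <= by_ops else "operations"
```

Running estimates like this across a representative set of jobs gives a first approximation of a budget, even before a provider offers a calculator of its own.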

Scalability

If all goes well and you need to perform more of the same calculations, will the computing bandwidth be available for you? Just as important, if you want to run a more complex calculation, could you upgrade to a larger computer? How difficult would it be to change the quantum code to allow for such increased complexity?

End-to-end workflow

How does one develop, test, optimize, deploy, and maintain quantum code? Similar to what you might see with machine learning models, one would expect quantum code to be refined and upgraded over time, incorporating new institutional knowledge or responding to changing conditions. And just as in machine learning applications, optimizations can do wonders for increasing speed and reducing cost. One must understand the full lifecycle of quantum code.

Portability

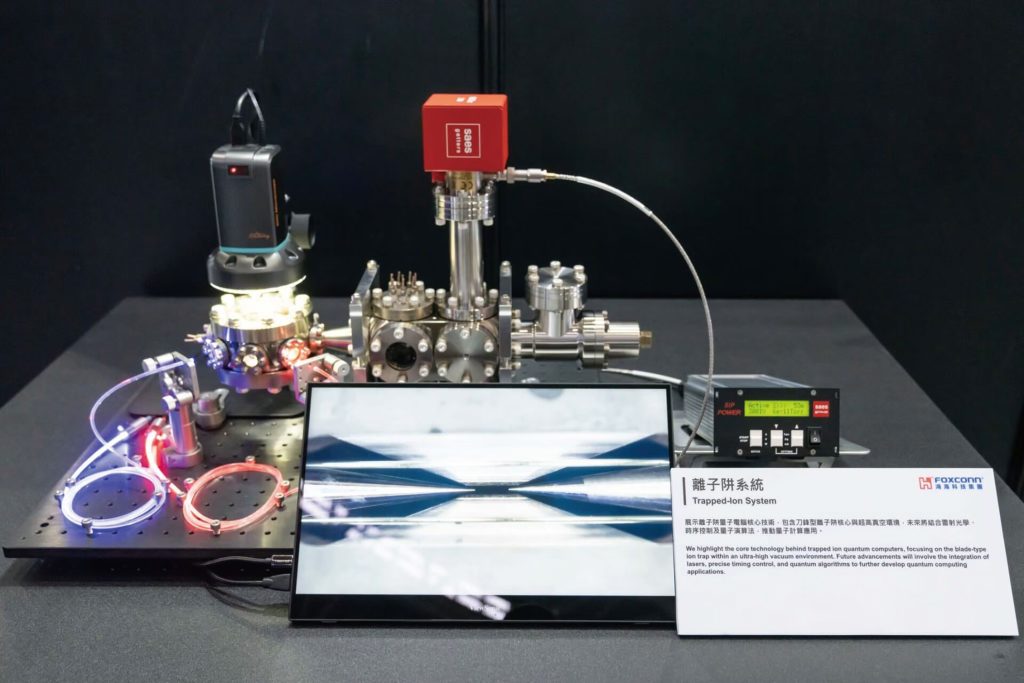

Quantum hardware changes, and there are several competing architectures and modalities for quantum computers — superconducting qubits, cold atoms, trapped ions, quantum annealers, and others. It is unclear which architecture or architectures will prevail in the long run. As such, IT executives must ensure that they can port their quantum applications to other platforms when necessary, or risk being stuck with an obsolete solution.
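A common way to keep applications portable is to write them against a thin, vendor-neutral interface and to isolate each hardware platform behind an adapter. The sketch below illustrates the idea. The `QuantumBackend` interface and both stand-in backends are hypothetical: they return canned results rather than calling any real vendor SDK.

```python
from typing import Dict, Protocol


class QuantumBackend(Protocol):
    """Hypothetical vendor-neutral interface the application codes against."""
    name: str

    def run(self, circuit: str, shots: int) -> Dict[str, int]:
        """Execute a circuit and return measurement counts per bitstring."""
        ...


class FakeSuperconductingBackend:
    """Stand-in adapter; a real one would translate to a vendor's SDK calls."""
    name = "fake-superconducting"

    def run(self, circuit: str, shots: int) -> Dict[str, int]:
        return {"00": shots}  # canned result, for illustration only


class FakeTrappedIonBackend:
    """Second stand-in adapter with the same interface."""
    name = "fake-trapped-ion"

    def run(self, circuit: str, shots: int) -> Dict[str, int]:
        return {"00": shots}  # canned result, for illustration only


def zero_state_fraction(backend: QuantumBackend, circuit: str, shots: int = 100) -> float:
    """Application logic: depends only on the interface, not on the vendor."""
    counts = backend.run(circuit, shots)
    return counts.get("00", 0) / shots
```

With this structure, moving to a different platform means writing one new adapter, while `zero_state_fraction` and the rest of the application code stay unchanged.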

Componentized or micro-service architecture

You won’t be running a Zoom call on a quantum computer anytime soon, just like you’re likely not using a word processor on a GPU. Each computing modality — a CPU, a GPU, and a QPU (quantum processing unit) — has things that it is particularly good at. One needs to develop an architecture that leverages the relative strengths of each processor — perhaps CPUs for I/O, QPUs for specialized computations, and perhaps GPUs if required for anything else. Development platforms also need to support these hybrid quantum/classical solutions.
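As a rough illustration of such a hybrid architecture, the sketch below routes each unit of work to the processor type suited to it. The task categories and the routing table are assumptions made for this example, and falling back to the CPU for unknown work is a design choice of the sketch, not a recommendation from any platform.

```python
from enum import Enum


class Processor(Enum):
    CPU = "cpu"
    GPU = "gpu"
    QPU = "qpu"  # quantum processing unit


# Hypothetical routing table: which processor handles which kind of task.
ROUTING = {
    "io": Processor.CPU,
    "matrix_math": Processor.GPU,
    "molecular_simulation": Processor.QPU,
    "combinatorial_optimization": Processor.QPU,
}


def route(task_kind: str) -> Processor:
    """Pick a processor for a task; unknown kinds fall back to the CPU."""
    return ROUTING.get(task_kind, Processor.CPU)


def plan(workflow):
    """Map each task in a workflow to (task_kind, processor) pairs, in order."""
    return [(kind, route(kind)) for kind in workflow]
```

For example, `plan(["io", "combinatorial_optimization", "io"])` describes a workflow in which classical code loads the data, the QPU handles the specialized computation, and classical code writes out the results.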

Who will support it?

There is a shortage of quantum experts. This is partly because of exploding demand, and partly because many existing tools require PhD-level knowledge of quantum physics. Today, the ideal profile for a quantum engineer is someone with a background in both computer science and quantum physics, and such people are not always easy to find. Will you rely on an external service provider for time-critical support, or opt to hire and develop internal expertise?

Trailblazing

It is said that pioneers are the ones with arrows in their backs. The road to deploying quantum solutions might not be smooth, but we believe the journey might very well be worth it.
