Editor’s Note: QuEra Computing recently published its roadmap for the next three years. It’s an ambitious plan that charts a path toward quantum computers that can tackle some real-world problems more effectively than classical computers. The plan is fueled by the success of a Harvard-led study recently published in Nature. In this exclusive, we take a deeper dive into the researchers’ approach to error correction, which could bring quantum computing closer to practicality.

Imagine a quantum computer as a subway system, a transportation network that takes its mathematically-minded commuters to their jobs working on incredibly complex calculations.

A qubit, the smallest unit of quantum information, would be an individual passenger riding this long, fast, complex train of quantum calculations on its own. No matter what approach scientists use to build these quantum information networks, the device’s computational potential relies on those qubits, making them as valuable as they are vulnerable. The slightest environmental noise can cause these information-packed passengers to make errors, potentially leading to a complete computational train wreck.

Most quantum computing approaches try to solve this problem by spreading information across a group of physical qubits, some of which act as error-correcting qubits that chaperone and protect what quantum scientists call the logical qubit. In other words, the logical qubit is the unit of information that a quantum error correction scheme protects, with the appropriately named error-correcting qubits doing the work of catching errors.
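To make the idea of a protected logical unit concrete, here is a minimal classical sketch: a three-bit repetition code that stores one bit in three copies and recovers it by majority vote. This is a simplified analogy assumed for illustration, not the scheme used in the study. Quantum codes cannot simply copy a qubit (the no-cloning theorem) and must also catch phase errors, so they measure parities between qubits instead of reading the data directly. The function names are hypothetical.

```python
from math import comb

def encode(bit, n=3):
    """Store one logical bit redundantly in n physical bits (classical analogy only)."""
    return [bit] * n

def decode(codeword):
    """Recover the logical bit by majority vote."""
    return int(sum(codeword) > len(codeword) // 2)

def logical_error_rate(p, n=3):
    """Probability that majority vote fails, i.e. more than half of the n bits flip,
    assuming each bit flips independently with probability p."""
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n // 2 + 1, n + 1))

# One flipped bit is corrected; the encoded value survives.
print(decode([1, 0, 1]))         # 1
print(logical_error_rate(0.01))  # about 0.000298, well below the 0.01 physical rate
```

The point is the scaling: with three copies, failure needs two simultaneous flips, so the error rate drops from p to roughly 3p². The price is overhead: three physical bits for every logical bit.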

It’s a method that addresses the effects of errors, but assigning qubits to error correction drains valuable qubits from the total computational power of the system. In current approaches, you could think of those physical qubits as riders each driving their own car, even though they’re all headed to the same destination for quantum error correction. It may work, but it’s not efficient and doesn’t scale.

For a Harvard-led team of researchers, the solution to this challenge was to play to the natural strengths of the neutral atom quantum computer: take a group of logical qubits on a train ride to what they call an “entanglement zone,” where they can perform calculations. Think of this approach as a quantum bullet train that brings these all-important qubits to their destination together.

The team, which also included scientists from QuEra Computing, MIT and NIST/University of Maryland, recently published a study about the approach that many experts suggest could redefine the scalability and control of quantum circuits and help move the technology beyond the current noisy intermediate-scale quantum (NISQ) era.

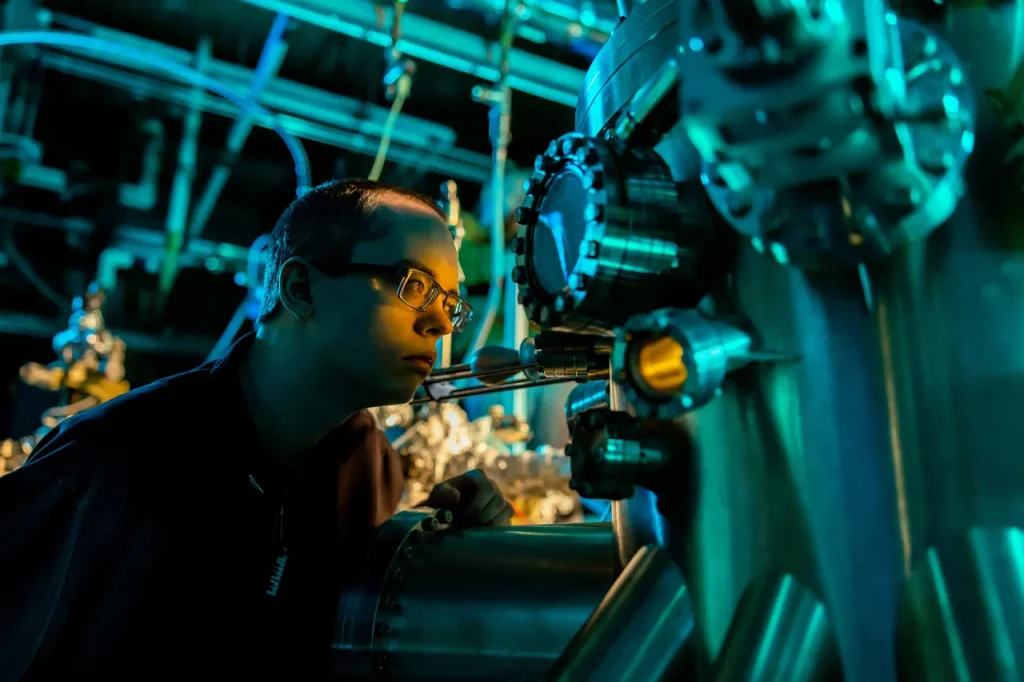

Atom Array

In an interview with The Quantum Insider, Dolev Bluvstein, the Harvard graduate student who spearheaded the investigation, and Harry Zhou, a research scientist at Harvard and QuEra Computing and a key contributor to the work, offered a deep dive into the method from their Nature study. The study garnered headlines around the world and even earned praise from Scott Aaronson, the Schlumberger Centennial Chair of Computer Science at The University of Texas at Austin and director of its Quantum Information Center, who called it “plausibly the top experimental quantum computing advance of 2023.”

According to the researchers, the team created an array of atoms in the neutral atom quantum computer that can be reconfigured as needed to act as logical qubits. The qubits can then interact with each other in any pattern required. The system also allows both the manipulation of individual qubits and the measurement of their states partway through a computation.

Further, this zoned architecture, along with the flexibility in how the qubits can be arranged, can enhance the reliability of quantum operations. Specifically, the team improved the performance of two-qubit operations, the basic interactions between pairs of qubits, by encoding the qubits with an error-correction method known as the “surface code,” and found that these operations got better as the code was made larger. They also created and worked with groups of qubits that are robust against certain errors.

The team was able to perform advanced quantum operations, such as creating large entangled states and teleporting entanglement between qubits in a fault-tolerant way.

Their system also uses three-dimensional error-correcting codes, interconnected in a pattern that mimics higher-dimensional (hypercube) connectivity, which allows the researchers to entangle up to 48 logical qubits. The team used this capability to run complex sampling circuits efficiently and showed that the logical encoding improves the accuracy and performance of quantum computations, with the codes detecting and correcting errors along the way.
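The three-dimensional codes in the study are, to our understanding, small [[8,3,2]] “cube” codes: eight physical qubits on the corners of a cube encode three logical qubits, and any single error can be detected (code distance 2) but not necessarily corrected. The sketch below assumes the standard checks for this code (an X check on all eight qubits and Z checks on the cube’s faces) and shows which errors trip a check. The names are illustrative, and this is a bookkeeping model of the checks, not a quantum simulation.

```python
from itertools import product

# Label the 8 physical qubits by cube corners (x, y, z); qubit index = 4x + 2y + z.
CORNERS = list(product((0, 1), repeat=3))

# X-type check on all eight qubits: catches Z (phase-flip) errors.
X_CHECKS = [set(range(8))]

# Z-type checks on the six faces: catch X (bit-flip) errors. Only four are independent,
# but keeping all six is harmless for detection.
Z_CHECKS = [
    {i for i, corner in enumerate(CORNERS) if corner[axis] == side}
    for axis in range(3)
    for side in (0, 1)
]

def syndrome(x_errors=(), z_errors=()):
    """Return which checks flip: a check sees an error it overlaps an odd number of times."""
    x_err, z_err = set(x_errors), set(z_errors)
    z_syn = [len(face & x_err) % 2 for face in Z_CHECKS]
    x_syn = [len(check & z_err) % 2 for check in X_CHECKS]
    return z_syn + x_syn

def detected(x_errors=(), z_errors=()):
    """True if any check flips for the given bit-flip and phase-flip error locations."""
    return any(syndrome(x_errors, z_errors))

# Every single-qubit bit flip or phase flip is detected...
print(all(detected(x_errors=[q]) and detected(z_errors=[q]) for q in range(8)))  # True
# ...but a phase flip on two qubits along an edge slips past every check.
print(detected(z_errors=[0, 1]))  # False
```

The undetected two-qubit error is why the code distance is 2. Under this simple count, at three logical qubits per block, 48 logical qubits would take 16 blocks, or 128 physical qubits.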

The team’s results suggest that we’re moving towards more reliable and large-scale quantum processors that can handle complex computations with fewer errors.

In the study, the researchers used as many as 280 physical qubits but needed to program fewer than ten control signals to execute their circuits.

Transitioning to Error-corrected Devices

Bluvstein said the work marks a transition point in the field: the fundamental units in their processor, as well as the classical controls that operate on them, are now at the logical-qubit level rather than the physical-qubit level. In his view, the time has come to switch to error-corrected devices.

“What’s really important is that if we keep making these devices bigger and bigger, we also need the performance to continue improving, in order for us to be able to realize some of these really complex algorithms, where each qubit is needed to be able to implement billions of gates,” said Bluvstein. “To be able to implement these billions of gates, we really need to start, now in the field, switching over to not only testing our algorithms with physical qubits and learning about how quantum algorithms work, but begin testing our algorithms with logical qubits. We need to determine how error-corrected algorithms work because we know that in order to, for example, solve really big problems, such as really big chemistry problems, we need things on the scale of more than a billion gates. And we’re never going to be able to achieve this with our physical qubits, so we need to focus on error-correcting algorithms.”

Avoiding Overhead

According to the researchers, the significance of their approach is that even as they increase the size of a code or add more codes, their classical control system can still manage the additional beams of light efficiently, so the system scales without added control complexity.

“We can operate on many error-correcting codes, and we can make larger error-correcting codes without any extra overhead in the classical control,” said Bluvstein.

This finding is central to making quantum circuits practical and is a step toward realizing large-scale, fault-tolerant quantum computers.

This goes to the heart of the “overhead challenge” in quantum computing, which refers to the extra resources or effort needed to achieve a task. In this context, creating an error-corrected logical qubit requires many physical qubits: to create one reliable logical qubit, you might need dozens or even hundreds of physical qubits just for error correction. If each of those physical qubits also needed its own control signals, the classical control hardware would grow just as quickly.
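The “dozens or even hundreds” figure can be checked against a common textbook layout. Assuming a rotated surface code of distance d, one logical qubit uses d² data qubits plus d² − 1 measurement qubits, or 2d² − 1 physical qubits in total. This layout is an assumption for illustration; the exact qubit counts in the study may differ, and the function name is hypothetical.

```python
def surface_code_qubits(d):
    """Physical qubits for one logical qubit in a rotated surface code of odd distance d:
    d*d data qubits plus d*d - 1 measurement (ancilla) qubits."""
    if d < 3 or d % 2 == 0:
        raise ValueError("distance should be an odd integer >= 3")
    return 2 * d * d - 1

for d in (3, 5, 7, 11):
    # Columns: distance, physical qubits, number of errors the code can correct.
    print(d, surface_code_qubits(d), (d - 1) // 2)
```

This prints 17, 49, 97 and 241 physical qubits for distances 3, 5, 7 and 11. Each step up in distance buys tolerance to one more error, but the qubit count grows quadratically, which is why keeping the classical control from growing alongside it matters.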

Parallelism

At the heart of the approach is parallelism. Moving, shuttling and entangling atoms within the processor’s architecture allow for a highly efficient, parallel control system, according to the researchers. By pulsing a global entangling laser whenever qubits are moved next to each other, the team can execute entangling gates and prepare quantum states quickly and simultaneously. This approach to parallelism is central to their study and is what enables them to scale up their quantum circuits.
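The move-then-pulse idea can be sketched as a toy geometric model. Assuming atoms sit at 2D positions (in arbitrary units) and that a single global pulse entangles every pair of atoms closer than a fixed interaction radius (a stand-in for the Rydberg blockade radius), one parallel move plus one pulse produces as many gates as there are adjacent pairs. The function names, coordinates and radius are hypothetical, and the model ignores the actual physics of the gate.

```python
import math

def move_group(positions, atom_ids, dx, dy):
    """Shift a whole group of atoms by the same offset in one parallel move."""
    return {
        atom: (x + dx, y + dy) if atom in atom_ids else (x, y)
        for atom, (x, y) in positions.items()
    }

def global_pulse(positions, radius):
    """One global entangling pulse: every pair of atoms closer than `radius` gets a CZ gate."""
    atoms = sorted(positions)
    return [
        (a, b)
        for i, a in enumerate(atoms)
        for b in atoms[i + 1:]
        if math.dist(positions[a], positions[b]) < radius
    ]

# Three "control" atoms (0-2) and three "target" atoms (3-5), 10 units apart.
positions = {0: (0, 0), 1: (0, 10), 2: (0, 20), 3: (10, 0), 4: (10, 10), 5: (10, 20)}
print(global_pulse(positions, radius=3))  # [] because nothing is close enough yet

# One parallel move brings every target next to its partner...
positions = move_group(positions, {3, 4, 5}, dx=-8, dy=0)
# ...and a single pulse then entangles all three pairs at once.
print(global_pulse(positions, radius=3))  # [(0, 3), (1, 4), (2, 5)]
```

The control cost stays at one move and one pulse whether the group holds three pairs or three hundred, which is the sense in which this kind of parallel control scales.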

According to Zhou, you need only turn on your flat-screen television to understand how the parallel control of the neutral atom approach compares with other quantum approaches, such as superconducting quantum computing.

“For example, if you look at your television, imagine if you opened up your 4k TV with a million pixels, and realize that there was actually a single separate control wire that leads to every single tiny cell,” said Zhou. “That would not make a very good technology, especially as you’re going to these larger scales. Whereas the parallel control really makes this more like plugging one cable in and you’re ready to go.”

Future

The researchers say their work isn’t over yet; there’s a lot more still to do. However, the team is confident that these techniques, along with other potential innovations, will scale, and if they do, the researchers see a path forward to large-scale, fault-tolerant quantum computers.

Next steps might include working on better ways to maintain the integrity of the quantum computing process, said Bluvstein.

“It’s quite daunting – and to be completely frank – it is still quite daunting,” said Bluvstein. “But we have ideas in terms of how to get there and I think we have a really unique avenue with neutral atoms and these control techniques, especially in combination with the exceptional creativity and progress from across the neutral atom community.”

Still, the study reflects an intangible benefit, momentum, that fuels the researchers’ optimism. As momentum grows, they anticipate that more science will lead to more innovation and that, as a result, quantum technology will continue to advance at an impressive pace.

As Zhou puts it, “If we can build these 10,000 qubit scale devices using all these techniques from across the community, then at that point, I think we will be confident we can build large-scale quantum computers.”

The Harvard-led work is integral to paving the path to practical quantum computing, according to QuEra, which released its roadmap this week. For more information on that roadmap, please see QuEra Computing Roadmap For Advanced Error-Corrected Quantum Computers, Pioneering The Next Frontier In Quantum Innovation.
