“If I were not a physicist, I would probably be a musician. I often think in music. I live my daydreams in music. I see my life in terms of music.” ― Albert Einstein.

From the music of the spheres to the jazz of physics, and from Albert Einstein’s hairstyle to Ludwig van Beethoven’s coiffure, there has always been, to me at least, an inexplicable connection between physics and music. Now a team of scientists from Cambridge Quantum and the University of Plymouth reports that this link may not be a coincidence at all. The team is exploring the nexus between music, language, quantum information science and artificial intelligence to see if quantum computers can become quantum composers.

In a paper available on the preprint server arXiv, the scientists build on their groundbreaking work in a branch of artificial intelligence called natural language processing (NLP) to demonstrate how quantum computers can be programmed to learn to classify music that conveys different meanings. They add that this ability suggests a quantum algorithm could eventually be used to compose music.

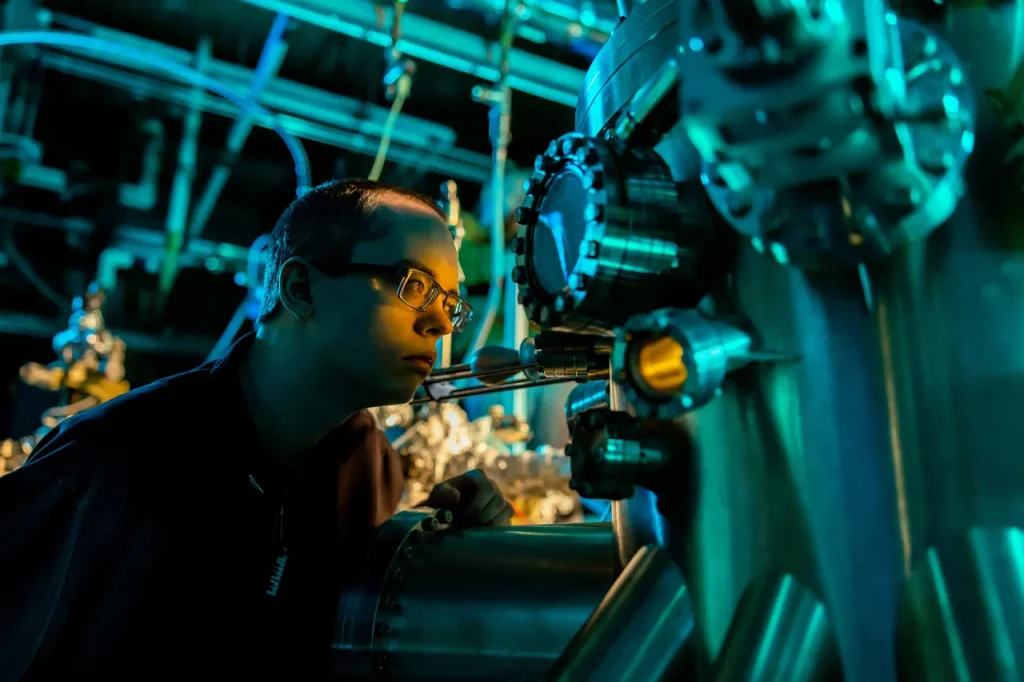

Over the last year, the Cambridge Quantum team reported in a series of studies that quantum computers may be ideally suited to understanding language, giving quantum NLP a head start in the push for quantum advantage. Classical NLP is already used in a range of applications, such as voice assistants. “Meaning-aware” quantum NLP, even in the Noisy Intermediate-Scale Quantum (NISQ) era, pushes the possibilities of machines that understand language even further.

Currently, music recommendation systems, like the ones on Spotify or Amazon Prime, often work by sifting through large amounts of data provided by human users to find correlations. For their “Quanthoven” system, however, the team took another approach.

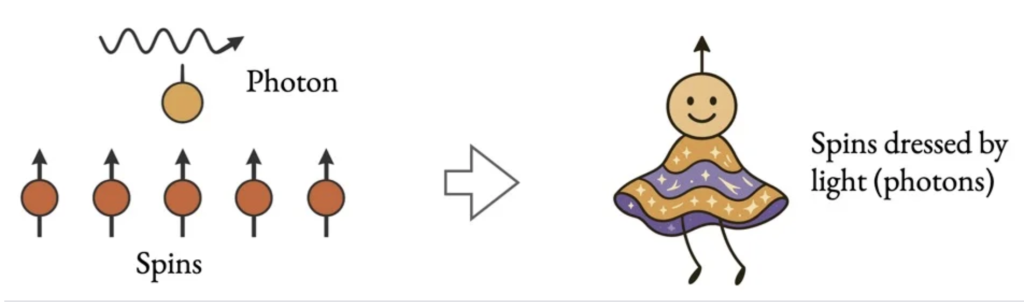

“Following an alternative route, which comes under the umbrella of compositional intelligence, we are taking the first steps in addressing the aforementioned challenge from a natural language processing perspective, which adopts a structure informed approach,” they write. This structural approach is underpinned by the connection between grammar in language and structure in musical compositions, they add.

The researchers rely on the Distributional Compositional Categorical modeling framework for NLP, known, coincidentally yet aptly in this case, as DisCoCat.


Music, just like language, might be ideally matched for quantum computers, the researchers suggest.

“Emerging quantum computing technology promises formidable processing power for some tasks,” the researchers write, adding that “...the mathematical tools that are being developed in QNLP research are quantum native by yet another analogy between mathematical structures, that is they enable direct correspondences between language and quantum mechanics, and consequently music.”

The algorithm learned to classify pieces as “melodic” or “rhythmic.” The pieces themselves are generated by a context-free grammar (CFG), a type of grammar often studied in theoretical computer science, compiler design and linguistics. The CFG combines musical snippets in ways that may render a piece more melodic than rhythmic, or vice versa.
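To make the idea of a CFG-generated piece more concrete, here is a minimal Python sketch. The grammar, the snippet names and the counting-based `classify` function are all hypothetical assumptions chosen for illustration; they are not the researchers' grammar, and a simple count is no substitute for the quantum classifier described in the paper.

```python
import random

# HYPOTHETICAL toy grammar for illustration only: the symbols and snippet
# names are assumptions, not the CFG used in the Quanthoven paper.
GRAMMAR = {
    "PIECE": [["PHRASE", "PHRASE"], ["PHRASE", "PHRASE", "PHRASE"]],
    "PHRASE": [["MELODIC"], ["RHYTHMIC"], ["MELODIC", "RHYTHMIC"]],
    "MELODIC": [["m_scale_up"], ["m_arpeggio"], ["m_legato_line"]],
    "RHYTHMIC": [["r_drum_fill"], ["r_syncopation"], ["r_ostinato"]],
}


def expand(symbol, rng):
    """Recursively rewrite a symbol; symbols with no rule are terminal snippets."""
    if symbol not in GRAMMAR:
        return [symbol]
    production = rng.choice(GRAMMAR[symbol])  # pick one production at random
    snippets = []
    for child in production:
        snippets.extend(expand(child, rng))
    return snippets


def generate_piece(seed=None):
    """Derive one piece (a sequence of snippet names) from the start symbol."""
    return expand("PIECE", random.Random(seed))


def melodic_fraction(piece):
    """Share of snippets tagged as melodic (name prefix 'm_')."""
    return sum(s.startswith("m_") for s in piece) / len(piece)


def classify(piece):
    """Crude counting heuristic standing in for a trained classifier."""
    frac = melodic_fraction(piece)
    if frac > 0.5:
        return "melodic"
    if frac < 0.5:
        return "rhythmic"
    return "mixed"


if __name__ == "__main__":
    for seed in range(3):
        piece = generate_piece(seed)
        print(seed, piece, classify(piece))
```

Each derivation rewrites `PIECE` into phrases and then into snippets, so different random choices yield pieces that lean melodic, lean rhythmic, or sit somewhere in between, which is the kind of ambiguity the researchers' classifier has to resolve.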

“Some might be neither to our ears, others might be both, but the quantum classifier is able to suggest whether a CFG-generated piece is one or the other,” the researchers write.

The team included Eduardo Reck Miranda, Richie Yeung, Anna Pearson, Konstantinos Meichanetzidis and Bob Coecke.

Before telling Tchaikovsky the news, you can check out Ludovico Quanthoven’s four pieces of music yourself here.

You can read more about Cambridge Quantum’s QNLP work here and its open-sourced QNLP toolkit here.
